Foundational and Advanced Prompt Engineering Techniques for Maximising AI Quality and Efficiency

Artificial intelligence has become a staple for over 1 billion people worldwide. Its rapid adoption across all walks of life has prompted businesses in every industry to incorporate AI agents in various capacities to enhance efficiency and improve the overall business experience. Yet a careful look at how AI agents are being adopted reveals a clear trend: most people who use AI do not use it to its full potential. That is why it is important to educate users on prompt engineering techniques.

In this article, we discuss foundational and advanced prompt engineering techniques, with the aim of equipping you with the skills you need to use an AI agent more strategically.

Whether you are a student, researcher, developer, or professional, we will introduce you to the best prompt engineering techniques, used even by the most advanced generative AI development companies, to help you get the most out of your generative AI tools. By the end of this article, you should be able to apply these techniques to get better results from your AI agent.

What is prompt engineering?

Prompt engineering is the practice of designing, structuring, and refining the input you give to large language models (LLMs) so that they produce more accurate and context-appropriate outputs. Over the years, generative AI models have matured from simple text predictors into sophisticated reasoning systems, which makes it even more important for users to learn prompt engineering.

Today, prompt engineering goes beyond asking the right questions: it involves understanding how AI models interpret instructions, manage context, reason, and balance creativity with precision. Put simply, your prompting technique determines whether your AI agent behaves like an untrained assistant or a partner you can depend on. Effective prompt engineering is the cornerstone of any generative AI framework.

Types of prompting techniques

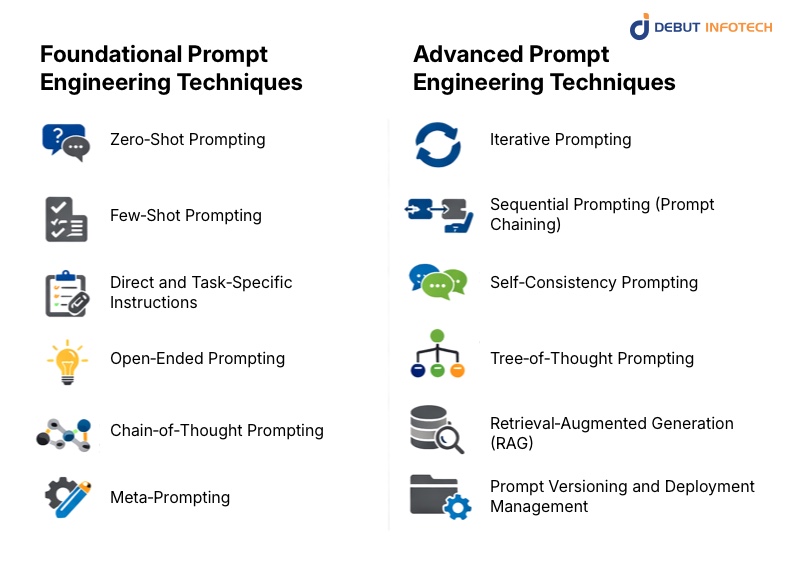

AI prompting techniques can be divided into two types: foundational and advanced.

- Foundational Techniques: These are the basic techniques every user should know.

- Advanced Techniques: These techniques build on foundational techniques to address complex reasoning, multi-step workflows, production deployment, and reliability challenges.

Foundational Prompt Engineering Techniques

The following are some of the most important foundational prompt engineering techniques:

1. Zero‑Shot Prompting

This prompting technique is simple: you ask a language model to perform a task without providing any context, examples, or demonstrations. In zero-shot prompting, the AI relies on the knowledge and patterns it acquired during training to interpret the prompt and produce a suitable response.

Because zero-shot prompting mirrors natural conversation, the way we ordinarily ask questions or request explanations, it is usually one of the first prompt engineering techniques that AI users encounter. Although it is basic, zero-shot prompting plays a major role in prompt engineering. It works best when the task is well defined, widely understood, and does not require specialized formatting or chains of reasoning. Tasks well suited to zero-shot prompting include defining concepts, summarizing short texts, and answering factual questions. For more ambiguous, complex, or highly constrained tasks, however, zero-shot prompting falls short, because the prompt gives the model no additional context to work with.

For beginners, understanding zero-shot prompting is an important foundation for evaluating prompt effectiveness. When a zero-shot prompt is not enough, users can introduce more structure and examples. In this sense, zero-shot prompting is not just a technique but also a baseline against which more advanced prompting strategies can be measured.
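As a minimal sketch, assuming a sentiment-classification task chosen purely for illustration, a zero-shot prompt contains only the instruction and the new input. The helper name `zero_shot_prompt` is hypothetical, and the resulting string would be sent to whichever LLM client you use.

```python
def zero_shot_prompt(instruction: str, text: str) -> str:
    """Build a zero-shot prompt: an instruction and the input, with no examples."""
    # No context or demonstrations are added; the model must rely on what it learned in training.
    return f"{instruction}\n\nText: {text}\nAnswer:"


prompt = zero_shot_prompt(
    "Classify the sentiment of the text as positive or negative.",
    "Setup took five minutes and it just works.",
)
print(prompt)
```

If the answers this produces are inconsistent or badly formatted, that is the signal to add structure or examples.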

2. Few‑Shot Prompting

In few-shot prompting, users expand on zero-shot prompting by adding a small number of input-output pairs inside the prompt. Adding these example pairs shows the model how the task should be performed, what style is expected, and how the final result should be structured. From these examples, the model can then generalize and produce a context-appropriate response for a new input. This prompting technique leverages the pattern-recognition capabilities of large language models rather than relying solely on abstract instructions.

Few-shot prompting is well suited to tasks such as translation, sentiment analysis, labelling, and tone-specific writing, because these tasks involve formatting rules, stylistic consistency, classification, or transformation. Unlike zero-shot prompting, which relies solely on instructions, few-shot prompting reduces ambiguity and helps bring the output closer to the user’s intent.

It is one of the foundational AI prompt engineering techniques because it introduces the idea that large language models can learn from demonstrations in the prompt, not just from direct commands. This represents a paradigm shift for prompt engineers.
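The sketch below, which assumes the same illustrative sentiment task and a hypothetical helper named `few_shot_prompt`, shows how a handful of input-output pairs are placed before the new input so the model can infer the expected labels and format from the pattern.

```python
from typing import List, Tuple


def few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], text: str) -> str:
    """Build a few-shot prompt: instruction, a handful of input-output pairs, then the new input."""
    parts = [instruction, ""]
    for example_input, example_output in examples:
        # Each pair demonstrates the expected labels and output format.
        parts.append(f"Text: {example_input}\nAnswer: {example_output}\n")
    # The new input follows the same pattern, leaving the answer for the model to complete.
    parts.append(f"Text: {text}\nAnswer:")
    return "\n".join(parts)


examples = [
    ("The delivery was fast and the product works perfectly.", "positive"),
    ("It broke after two days and support never replied.", "negative"),
]
prompt = few_shot_prompt(
    "Classify the sentiment of each text as positive or negative.",
    examples,
    "Setup took five minutes and it just works.",
)
print(prompt)
```

Keeping the demonstrations in exactly the same layout as the final input matters: the model imitates the pattern, so inconsistent examples tend to produce inconsistent answers.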

3. Direct and Task‑Specific Instructions

This is one of the more rigid AI prompt engineering techniques compared with the previous two. It involves clearly stating what the model should do while setting strict guidelines for format, length, tone, audience, or perspective. The technique is close-ended: it narrows the scope of acceptable output and reduces interpretive freedom to a minimum. One way to think of it is as a mini-specification document that outlines the task requirements with precision.

This prompting technique is particularly useful in professional, academic, and business settings where the quality of the final results is judged against predefined criteria. For instance, you could instruct a model to write a 500-word article on the importance of marketing automation for sales conversion, aimed at a non-technical audience, in a neutral tone. This prompt yields a more reliable, context-specific result than simply asking the model to explain marketing automation. In this example, the usefulness of the final output depends on how clear the instructions are.

Direct instruction prompting is important because it forces you to think carefully about what you want the model to do. It elevates your interactions with the model from simple questions to well-thought-out task designs. This technique also shows how ambiguity can lead to outputs that do not meet your expectations. Mastering direct, task-specific instructions therefore helps you control model behavior without resorting to more advanced techniques.
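To make the "mini-specification" idea concrete, here is a minimal sketch that turns the 500-word marketing automation example into an explicit spec. The `TaskSpec` class and its field names are hypothetical; they simply force every constraint (task, length, audience, tone, format) to be stated rather than left implicit.

```python
from dataclasses import dataclass


@dataclass
class TaskSpec:
    """Hypothetical mini-specification for a direct, task-specific prompt."""
    task: str
    length: str
    audience: str
    tone: str
    output_format: str = "plain prose with short paragraphs"

    def to_prompt(self) -> str:
        # Every requirement is spelled out, narrowing the range of acceptable outputs.
        return (
            f"Task: {self.task}\n"
            f"Length: {self.length}\n"
            f"Audience: {self.audience}\n"
            f"Tone: {self.tone}\n"
            f"Format: {self.output_format}\n"
            "Follow every requirement above exactly."
        )


spec = TaskSpec(
    task="Write an article on the importance of marketing automation for sales conversion.",
    length="about 500 words",
    audience="non-technical business readers",
    tone="neutral",
)
print(spec.to_prompt())
```

A structured spec like this also makes gaps visible: an empty field is an unanswered question about what you actually want.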

4. Open‑Ended Prompting

Open-ended prompting is the opposite of direct instruction. In this technique, you deliberately leave room for the model to explore, interpret, and provide an expansive response. Unlike direct, task-specific prompts, open-ended prompts encourage broad discussion, ideation, and speculative reasoning. This approach is well suited to tasks such as brainstorming, creative writing, philosophical discussions, and exploratory research, where diversity of ideas is preferred over precision.

This technique is particularly useful for revealing unexpected connections, new perspectives, and broad overviews. For instance, if you ask a model to “discuss the future of artificial intelligence in society,” you encourage it to draw on multiple domains such as ethics, economics, technology, and culture. This comes in handy during the early stages of thinking or ideation. But open-ended prompting also has drawbacks: it can produce excessively wordy outputs that sometimes drift away from the original question, leading to a mismatch between the results and the user’s expectations.

It is a foundational prompting technique that teaches prompt engineers when not to over-specify. As prompt engineers become more experienced with this prompting technique, they master the delicate balance between creativity and control. This is an essential step to mastering advanced prompt engineering techniques.
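As a rough sketch of the balance between creativity and control, the hypothetical helper below builds an open-ended prompt with no length, format, or tone constraints, and optionally names a few perspectives as light guidance. The wording of the prompt is an assumption for illustration, reusing the "future of artificial intelligence in society" example.

```python
from typing import List, Optional


def open_ended_prompt(topic: str, perspectives: Optional[List[str]] = None) -> str:
    """Invite exploration: no length, format, or tone constraints are imposed."""
    prompt = f"Discuss {topic}. Explore different angles, open questions, and unexpected connections."
    if perspectives:
        # Optional, light guidance on where to look, without restricting the answer.
        prompt += " You might consider perspectives such as " + ", ".join(perspectives) + "."
    return prompt


print(open_ended_prompt(
    "the future of artificial intelligence in society",
    ["ethics", "economics", "technology", "culture"],
))
```

Adding the optional perspectives is one way to reduce drift without turning the prompt back into a rigid specification.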

5. Chain‑of‑Thought Prompting

In chain-of-thought (CoT) prompting, you guide the model toward an answer through a step-by-step problem-solving approach, instructing it to lay out its intermediate reasoning in detail. This makes its decision-making process more transparent and structured. Use this technique for tasks that involve logic, mathematics, multi-step analysis, or causal reasoning.

The emphasis on layered reasoning in CoT prompting encourages the model to work through each intermediate step explicitly rather than jumping to conclusions, which tends to produce more coherent and accurate outputs, especially in tasks that require sequential logic or conditional evaluation. Because the reasoning is written out, it is also much easier to verify, critique, and refine.

This technique helps prompt engineers understand that in LLM prompting, the process can be just as important as the result. This understanding helps lay the foundation for more advanced prompt engineering techniques that emphasize structure, transparency, and logical progression.
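A minimal sketch of the pattern, assuming you want both the visible reasoning and a machine-readable final answer: the prompt asks for numbered steps followed by an `Answer:` line, and a small parser extracts that line. The function names and the `Answer:` convention are assumptions, and `sample_response` is a hand-written illustration, not real model output.

```python
import re
from typing import Optional


def chain_of_thought_prompt(question: str) -> str:
    """Ask the model to show intermediate reasoning before committing to an answer."""
    return (
        f"Question: {question}\n"
        "Work through the problem step by step, numbering each step.\n"
        "Then give the result on a final line in the form 'Answer: <result>'."
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the last 'Answer:' line, or None if the model ignored the format."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


print(chain_of_thought_prompt("A shop sells 3 boxes of 12 pens, and 5 pens are defective. How many usable pens are there?"))

# Hand-written example of the response format the prompt asks for.
sample_response = (
    "1. 3 boxes of 12 pens is 3 * 12 = 36 pens.\n"
    "2. Removing 5 defective pens leaves 36 - 5 = 31.\n"
    "Answer: 31"
)
print(extract_final_answer(sample_response))  # 31
```

Separating the reasoning from the final line lets you inspect each step while still feeding a clean answer into downstream code.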

6. Meta‑Prompting

In this technique, you task the model with reasoning about, generating, or improving prompts before it executes a task. Rather than jumping right in, the model is asked to consider a more effective approach to the problem. A typical example is asking an LLM to design an optimal prompt for explaining a concept to a particular audience. You can then use this refined prompt to generate a higher-quality response.

As a foundational technique, meta-prompting teaches prompt engineers to see prompts as objects that can be evaluated and refined. It also encourages engineers to use AI reflectively and reveals that model outputs are only as good as their prompts.
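The two-stage flow described above can be sketched as follows. `Model` is a hypothetical stand-in for any function that sends a prompt to an LLM and returns its reply; the wording of the design request is an assumption, not a prescribed template.

```python
from typing import Callable

# Hypothetical stand-in for any function that sends a prompt to an LLM and returns its reply.
Model = Callable[[str], str]


def meta_prompt(model: Model, goal: str, audience: str) -> str:
    """Two-stage meta-prompting: have the model write a prompt, then run that prompt."""
    # Stage 1: ask the model to design the prompt instead of answering directly.
    design_request = (
        f"Write the most effective prompt for this goal: {goal}\n"
        f"The audience is {audience}. Return only the prompt text."
    )
    refined_prompt = model(design_request)
    # Stage 2: execute the refined prompt to produce the final answer.
    return model(refined_prompt)
```

In practice it is worth logging `refined_prompt` as well, since the generated prompt is itself an artifact you may want to review, edit, or reuse.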

Advanced Prompt Engineering Techniques

7. Iterative Prompting

Iterative prompting is an advanced prompt engineering technique that takes a cyclical refinement approach rather than relying on a single interaction. You take the first result from a prompt, feed it back to the AI model, and adjust your instructions based on that output. Instead of expecting the perfect result from the start, you gradually arrive at it through incremental adjustments.

Think of this prompt engineering technique as writing a first draft, then revising and refining it over time until it is well-crafted. This prompting technique is perfect for long-form content, research synthesis, strategic planning, and complex explanations.

Because it requires you to carefully assess the quality of an output and understand why it might be insufficient, this prompting method is considered advanced. It also requires patience and intentionality. However, if you use it skillfully, it significantly improves quality and reliability.
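A minimal sketch of the refinement loop, under two assumptions: `model` is a hypothetical LLM call, and `critique` is a hypothetical reviewer (a person or a second model call) that returns feedback on a draft, or `None` once the draft is good enough. The `max_rounds` cap keeps the loop from running indefinitely.

```python
from typing import Callable, Optional

Model = Callable[[str], str]              # hypothetical LLM call
Critic = Callable[[str], Optional[str]]   # hypothetical reviewer: feedback, or None when satisfied


def iterative_refine(model: Model, task: str, critique: Critic, max_rounds: int = 3) -> str:
    """Draft once, then repeatedly feed the draft back with feedback until it passes review."""
    draft = model(task)
    for _ in range(max_rounds):
        feedback = critique(draft)
        if feedback is None:
            break  # the reviewer is satisfied; stop refining
        # Feed the previous output back with adjusted instructions.
        draft = model(
            f"Task: {task}\n\nPrevious draft:\n{draft}\n\n"
            f"Revise the draft to address this feedback: {feedback}"
        )
    return draft
```

The quality of this loop depends almost entirely on the critique step, which is why the technique demands careful assessment of each output.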

8. Sequential Prompting (Prompt Chaining)

Also called prompt chaining, this advanced technique involves breaking a task into a series of logically ordered prompts. Each prompt handles a defined sub-task, and its result is fed to the model as input for the next step in the chain. This decomposition keeps each prompt focused, reducing the burden on the model at any single step and improving coherence across the workflow’s stages.

For example, when executing a research task, you might start by defining key concepts, then summarize the literature, identify gaps, and finally synthesize insights. Breaking the process into modules gives you more control over the output and produces a final result that is much clearer and more reliable. This technique is particularly useful for tasks where structure is non-negotiable.

Sequential prompting represents a shift in mindset toward systems thinking. In sequential prompting, the language model is not an all-purpose solution but one part of a wider reasoning pipeline. Prompt engineers who are good at designing AI workflows are well placed to excel at sequential prompting.
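The research example above can be sketched as a simple chain. `Model` is a hypothetical LLM call, and each step template uses a `{previous}` placeholder (an assumed convention) that is filled with the prior step's output. Intermediate outputs are returned so each stage can be inspected or corrected on its own.

```python
from typing import Callable, List

Model = Callable[[str], str]  # hypothetical LLM call


def run_chain(model: Model, steps: List[str], initial_input: str) -> List[str]:
    """Run each step template in order; '{previous}' is replaced by the prior step's output."""
    outputs: List[str] = []
    previous = initial_input
    for template in steps:
        # str.format only parses the template, so braces inside model output are safe.
        prompt = template.format(previous=previous)
        previous = model(prompt)
        outputs.append(previous)  # keep every intermediate result for inspection
    return outputs


research_steps = [
    "Define the key concepts in this research topic: {previous}",
    "Using these definitions, summarize the relevant literature:\n{previous}",
    "Identify gaps in this literature summary:\n{previous}",
    "Synthesize insights from these gaps:\n{previous}",
]
```

Note that any literal braces in a step template itself would need to be doubled (`{{` and `}}`) for `str.format`.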

9. Self‑Consistency Prompting

This technique involves generating several responses to the same prompt and then selecting the answer that occurs most frequently. It treats variation between responses as a feature rather than a flaw, so it does not depend on any single output. It is best suited to tasks that involve uncertainty, probabilistic reasoning, or complex logic. The rationale is that different outputs may reveal alternative assumptions or reasoning strategies, and that agreement across them indicates higher confidence. Comparing the responses can therefore lead to more reliable conclusions.

Self-consistency acknowledges that LLM outputs can vary from one run to the next, because responses are sampled rather than fixed, and provides a way to mitigate this variability. For this reason, it is considered an advanced prompting technique.
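A minimal sketch of the voting step, assuming `sample` is a hypothetical function that queries the model once (with sampling enabled) and returns only the final answer. In a full chain-of-thought setup you would extract the final answer from each reasoning path before voting. The agreement rate is returned as a rough confidence signal.

```python
from collections import Counter
from typing import Callable, List, Tuple

Sampler = Callable[[str], str]  # hypothetical: one sampled model answer per call


def self_consistent_answer(sample: Sampler, prompt: str, n: int = 5) -> Tuple[str, float]:
    """Sample n answers to the same prompt and return the majority answer and its vote share."""
    answers: List[str] = [sample(prompt).strip() for _ in range(n)]
    # Majority vote; on ties, Counter.most_common keeps the answer seen first.
    answer, votes = Counter(answers).most_common(1)[0]
    return answer, votes / n
```

A low vote share is itself useful information: it flags questions where the model's reasoning is unstable and a human should take a closer look.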

10. Tree‑of‑Thought Prompting

This technique is similar to chain-of-thought prompting, but it expands on it by exploring multiple reasoning paths in parallel rather than following a single linear sequence. You encourage the model to generate several candidate solutions or lines of reasoning, then ask it to evaluate them and choose or synthesize the most promising outcome.

This technique excels at complex problem-solving, strategic planning, and decision-making tasks involving several viable options.

Tree-of-thought prompting is considered an advanced technique because it requires carefully designed prompts and greater computational resources. However, the benefits are worth the effort: it offers deeper, more robust reasoning. The technique is designed to imitate human analytical processes such as brainstorming and scenario analysis.
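The generate, evaluate, and select cycle can be sketched as a small breadth-first search with a beam. In a real setup, `propose` and `evaluate` would be hypothetical model calls (one asking for candidate next steps, one rating a partial solution); here they are toy functions so the example runs on its own. This is one simplified variant, not the only way to structure a tree of thoughts.

```python
from typing import Callable, List

Propose = Callable[[str], List[str]]  # hypothetical: candidate next thoughts for a partial solution
Evaluate = Callable[[str], float]     # hypothetical: score for how promising a partial solution is


def tree_of_thought(problem: str, propose: Propose, evaluate: Evaluate,
                    depth: int = 3, beam_width: int = 2) -> str:
    """Expand several reasoning branches per level, keep the best few, return the top result."""
    frontier = [problem]
    for _ in range(depth):
        # Branch: expand every kept state into alternative next steps.
        candidates = [child for state in frontier for child in propose(state)]
        if not candidates:
            break
        # Evaluate and prune: keep only the most promising branches.
        candidates.sort(key=evaluate, reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=evaluate)


# Toy stand-ins: each step appends "a" or "b", and states with more "b"s score higher.
print(tree_of_thought("", lambda s: [s + "a", s + "b"], lambda s: s.count("b")))  # bbb
```

`depth` and `beam_width` control the computational cost mentioned above: each extra level multiplies the number of model calls by roughly the branching factor times the beam width.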

11. Retrieval‑Augmented Generation (RAG)

Retrieval-augmented generation combines advanced prompt engineering with external information retrieval systems to produce more robust responses. Instead of relying solely on the model’s internal knowledge, you retrieve relevant content from documents and databases and use it to augment the prompt before generation. This helps keep responses up to date and domain-specific. RAG is particularly well suited to business, academic, and research environments where accuracy is non-negotiable.

Prompt engineers consider RAG an advanced technique because it requires integrating prompting logic with information systems. It represents a shift from prompting alone toward an AI-assisted knowledge workflow.
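A minimal sketch of the retrieve-then-augment step. As an assumption for illustration, simple keyword overlap stands in for the embedding search or vector database a production system would typically use, and the three documents are toy examples. The helper names are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase word set; a crude stand-in for real text embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Rank documents by how many query words they share and return the top k."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Augment the prompt with retrieved passages before it is sent to the model."""
    context = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(retrieve(query, documents, k), start=1))
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


docs = [  # toy documents for illustration
    "Our refund policy allows returns within 30 days of purchase.",
    "The office is closed on public holidays.",
    "Refunds are issued to the original payment method within 5 business days.",
]
print(build_rag_prompt("How long do refunds take?", docs))
```

Instructing the model to answer only from the supplied context, and to say when that context is insufficient, is what ties the response to the retrieved sources rather than to the model’s internal knowledge.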

12. Prompt Versioning and Deployment Management

This technique takes a different approach, treating prompts as evolving artifacts that require routine, systematic testing, documentation, and version control. It is well suited to production environments, where processes are rarely static and prompts must keep pace with constant change. As in traditional software development, you maintain a prompt history, performance metrics, and rollback mechanisms.
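As a minimal sketch, the hypothetical in-memory registry below keeps every published version of a prompt, lets you attach evaluation metrics, and supports rollback. A production setup would more likely store this in a database or version-control system; the class and method names here are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PromptVersion:
    version: int
    template: str
    note: str
    metrics: Dict[str, float] = field(default_factory=dict)


class PromptRegistry:
    """Hypothetical in-memory registry: history, metrics, and rollback for one named prompt."""

    def __init__(self) -> None:
        self.history: List[PromptVersion] = []
        self.active: Optional[int] = None

    def publish(self, template: str, note: str) -> PromptVersion:
        v = PromptVersion(version=len(self.history) + 1, template=template, note=note)
        self.history.append(v)  # versions are appended, never overwritten
        self.active = v.version
        return v

    def record_metric(self, version: int, name: str, value: float) -> None:
        self.history[version - 1].metrics[name] = value

    def current(self) -> PromptVersion:
        if self.active is None:
            raise LookupError("no prompt version has been published")
        return self.history[self.active - 1]

    def rollback(self, version: int) -> PromptVersion:
        if not 1 <= version <= len(self.history):
            raise ValueError(f"unknown version {version}")
        self.active = version
        return self.current()


registry = PromptRegistry()
registry.publish("Summarize: {text}", "initial version")
registry.publish("Summarize in three bullet points: {text}", "tighter output format")
registry.record_metric(2, "eval_pass_rate", 0.5)  # placeholder: use scores from your own evaluation suite
registry.rollback(1)  # e.g. if version 2 regressed on your evaluation suite
print(registry.current().template)
```

Because old versions are never overwritten, a rollback is just a pointer change, and the metric history shows why each change was made or reverted.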

Conclusion: Unlock new frontiers with Debut Infotech

Large language models are only as good as the prompts you give them.

So far, we have explored two categories of prompt engineering techniques: foundational techniques and advanced techniques.

If you want to explore the full potential of artificial intelligence for your business, you need to apply effective prompt engineering techniques to the right use cases. One of the best ways to do this is to partner with a prompt engineering company like Debut Infotech Pvt Ltd. We offer a comprehensive approach to prompt engineering that encompasses designing, testing, optimizing, and maintaining prompts.

Frequently Asked Questions

A. A good prompt should be clear, give explicit instructions and an appropriate role assignment, state a specific output requirement, and include thoughtful constraints. It should also provide clear context, as this helps guide the final response. Understanding these components and applying them will significantly improve the quality and reliability of a language model’s responses.

A. Zero-shot prompting is a foundational technique that involves asking a language model to execute a task without giving it context, examples, or demonstrations. In zero-shot prompting, the language model has to rely on the knowledge and patterns it acquired during training to interpret the prompt and provide a response.

A. In few-shot prompting, the user adds input-output pairs to the prompt to guide the AI model. The examples demonstrate how the task should be answered, so the model does not have to rely solely on what it learned during training. This technique leverages the model’s pattern-recognition capabilities.

A. To make your prompts clear, first be clear about what you want; a well-defined objective helps the model provide a more reliable answer. You should also provide examples so the model can recognize patterns and respond in a way that fits your scenario. Setting clear constraints further helps keep the model within the scope of the query. Contextualizing your prompts in this way can transform the model’s responses from generic to deep and insightful.
