AI Agents in Cybersecurity Explained: Capabilities, Limitations, and Future Trends

Cybersecurity is no longer only about responding to threats. As attack surfaces grow and attackers rely more heavily on automation, traditional rule-based security tools struggle to keep up. This has driven interest in AI agents in cybersecurity: systems that can operate autonomously, or with minimal supervision, to observe, reason, and act in complex security situations.

AI agents operate more independently than traditional AI-powered products, which lets them assess signals, make decisions, and adjust to new threats. At the same time, the space is heavily hyped, and many solutions are labeled “agents” even though they offer little real autonomy. This article covers what AI agents in cybersecurity can achieve today, where they still fall short, and what the future holds.

What Are AI Agents in Cybersecurity?

To understand the value of AI agents in cybersecurity, it’s important to distinguish them from traditional AI tools.

An AI agent is a system that:

- Observes its environment

- Processes information using AI models

- Makes decisions based on goals and constraints

- Takes actions autonomously or semi-autonomously

- Learns from outcomes over time

In cybersecurity, this environment includes networks, endpoints, cloud workloads, applications, logs, and threat intelligence feeds.

What Is Agentic AI in Cybersecurity?

Agentic AI in cybersecurity refers to the use of AI systems designed to act as agents rather than passive analytics engines. These agents don’t just flag anomalies—they reason about them, determine next steps, and execute actions such as:

- Investigating alerts

- Correlating signals across systems

- Triggering containment measures

- Recommending remediation steps

This agent-based approach is a fundamental shift from static detection to adaptive defense.

How AI Agents Differ from Traditional Security AI

Many cybersecurity tools already use machine learning, but that doesn’t automatically make them agentic.

| Aspect | Traditional Security Tools | AI Agents in Cybersecurity |
| --- | --- | --- |
| Core Function | Rule-based detection and alerting | Autonomous or semi-autonomous reasoning and action |
| Threat Detection | Signature and rule-driven | Behavioral and context-aware |
| Response Model | Manual or scripted | Automated or agent-led with human oversight |
| Adaptability | Limited, requires manual updates | Learns and adapts over time |
| Context Awareness | Low to moderate | High (cross-system correlation) |
| Scalability | Requires additional analysts | Scales with minimal human intervention |
| Role of Humans | Central to all decisions | Supervisory and strategic |

This difference is critical when evaluating claims made by AI agent companies and cybersecurity vendors.

Core Capabilities of AI Agents in Cybersecurity

AI agents bring together multiple AI disciplines—machine learning, large language models, reinforcement learning, and decision systems—into coordinated architectures.

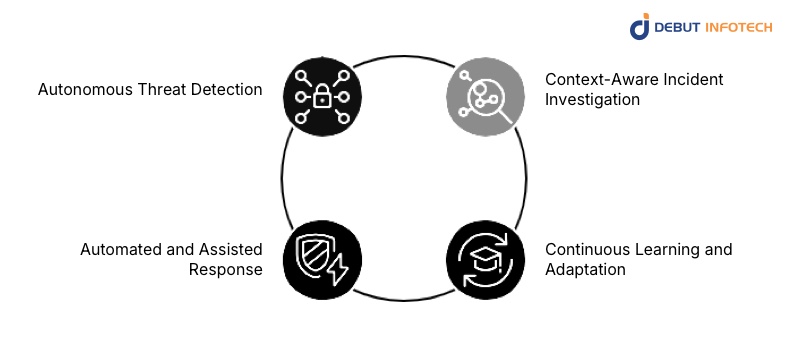

1. Autonomous Threat Detection

One of the most mature applications of AI agents in cybersecurity is threat detection.

Agents can:

- Monitor massive volumes of logs and telemetry

- Identify behavioral anomalies rather than static signatures

- Detect low-and-slow attacks that evade traditional tools

The role of agentic AI in cybersecurity threat detection lies in its ability to connect weak signals across time, systems, and data sources.
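One way to picture "connecting weak signals" is a small correlation routine that sums low-confidence detections per entity across sources and time. The following is a minimal sketch, not a production design: the `Signal` fields, the 7-day window, and the thresholds are all illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Signal:
    entity: str        # hypothetical key, e.g. a user or host name
    source: str        # e.g. "endpoint", "network", "cloud"
    score: float       # 0.0-1.0 suspicion score from some detector
    seen_at: datetime


def correlate(signals, window=timedelta(days=7), min_sources=2, threshold=1.0):
    """Flag entities whose weak signals add up across sources within a window."""
    by_entity = defaultdict(list)
    for s in signals:
        by_entity[s.entity].append(s)

    flagged = {}
    for entity, items in by_entity.items():
        latest = max(s.seen_at for s in items)
        # Only signals within `window` of the most recent one count.
        recent = [s for s in items if latest - s.seen_at <= window]
        sources = {s.source for s in recent}
        total = sum(s.score for s in recent)
        if len(sources) >= min_sources and total >= threshold:
            flagged[entity] = round(total, 2)
    return flagged


now = datetime(2024, 1, 10)
signals = [
    Signal("alice", "endpoint", 0.4, now - timedelta(days=5)),
    Signal("alice", "network", 0.3, now - timedelta(days=2)),
    Signal("alice", "cloud", 0.4, now),
    Signal("bob", "endpoint", 0.4, now),
]
flagged = correlate(signals)
```

In this example no single signal for `alice` is alarming on its own, but three sources within a week push the combined score over the threshold, while `bob`'s single signal is ignored.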

2. Context-Aware Incident Investigation

Instead of generating isolated alerts, AI agents can investigate incidents end to end.

They may:

- Trace lateral movement

- Analyze user behavior patterns

- Correlate endpoint, network, and cloud events

- Prioritize incidents based on business impact

By consolidating related alerts into a single investigated incident, this can reduce alert fatigue and shorten investigation timelines.

3. Automated and Assisted Response

AI agents can execute or recommend response actions, including:

- Isolating compromised endpoints

- Revoking credentials

- Blocking malicious IPs or domains

- Escalating incidents to human analysts

Most real-world deployments still keep humans in the loop, but the automation potential is significant.
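The split between "execute" and "recommend" can be sketched as a small dispatcher that only auto-executes actions a team has classified as low risk and queues everything else for analyst approval. The action names, the `AUTO_APPROVED` set, and the confidence cutoff below are assumptions for illustration, not a standard.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    BLOCK_IP = "block_ip"
    ISOLATE_ENDPOINT = "isolate_endpoint"
    REVOKE_CREDENTIALS = "revoke_credentials"
    ESCALATE = "escalate"


# Assumption: which actions are safe to automate is a local policy choice.
AUTO_APPROVED = {Action.BLOCK_IP}


@dataclass
class Decision:
    action: Action
    target: str
    status: str  # "executed" or "pending_approval"


def dispatch(action, target, confidence, min_confidence=0.9):
    """Execute low-risk, high-confidence actions; queue the rest for a human."""
    if action is Action.ESCALATE:
        # Handing the incident to an analyst never needs prior approval.
        return Decision(action, target, "executed")
    if action in AUTO_APPROVED and confidence >= min_confidence:
        return Decision(action, target, "executed")
    return Decision(action, target, "pending_approval")


print(dispatch(Action.BLOCK_IP, "203.0.113.7", 0.95).status)
print(dispatch(Action.ISOLATE_ENDPOINT, "laptop-42", 0.99).status)
```

Here a high-confidence IP block runs immediately, while endpoint isolation waits for approval regardless of confidence because it is not on the auto-approved list.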

4. Continuous Learning and Adaptation

Unlike static rule-based systems, AI agents learn from:

- New attack patterns

- Analyst feedback

- Environmental changes

This allows them to adapt as attackers evolve—an essential capability in modern threat landscapes.

Agentic AI Architectures in Cybersecurity

Understanding AI agent architecture is key to evaluating feasibility and maturity.

Common Architectural Components

A typical agentic AI system in cybersecurity includes:

- Perception layer: Collects data from logs, endpoints, APIs, and sensors

- Reasoning layer: Uses AI models and rules to interpret events

- Decision layer: Determines optimal actions

- Action layer: Executes responses via integrations

- Learning loop: Improves performance over time

This modular design allows organizations to deploy specialized AI agents for specific tasks rather than one monolithic system.
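The layers listed above can be sketched as methods on a single toy agent. This is an illustrative skeleton only: the `Agent` class, the failed-login rule, and the `lock_account` action are hypothetical stand-ins for real models and integrations.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    host: str
    failed_logins: int


@dataclass
class Agent:
    threshold: int = 5                      # adjusted by the learning loop
    history: list = field(default_factory=list)

    def perceive(self, raw):                # perception layer: normalize raw input
        return Event(host=raw["host"], failed_logins=int(raw["failed_logins"]))

    def reason(self, event):                # reasoning layer: interpret the event
        return event.failed_logins >= self.threshold

    def decide(self, suspicious):           # decision layer: pick an action
        return "lock_account" if suspicious else "no_action"

    def act(self, event, action):           # action layer: stubbed integration
        self.history.append((event.host, action))
        return action

    def learn(self, false_positive):        # learning loop: analyst feedback
        if false_positive:
            self.threshold += 1

    def step(self, raw):
        event = self.perceive(raw)
        return self.act(event, self.decide(self.reason(event)))


agent = Agent()
first = agent.step({"host": "web-1", "failed_logins": "6"})
agent.learn(false_positive=True)
agent.learn(false_positive=True)
second = agent.step({"host": "web-1", "failed_logins": "6"})
```

Because each layer is a separate method, any one of them (for example the reasoning step) could be swapped for a different model without touching the others, which is the point of the modular design.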

AI Agents in Cybersecurity Threat Detection

Threat detection is where agentic AI currently delivers the most tangible value.

Behavioral Detection Over Signatures

AI agents excel at identifying:

- Abnormal user behavior

- Suspicious process execution

- Deviations from baseline network activity

Because behavioral detection does not depend on known signatures, it can surface zero-day and novel attack techniques that signature matching misses.
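A deviation from baseline can be illustrated with a simple z-score check. This is a deliberately minimal sketch: real agents typically use richer models, and the threshold of 3 standard deviations and the sample numbers are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, z_threshold=3.0):
    """Return True if `observed` is far from the baseline mean.

    `baseline` needs at least two values so a standard deviation exists.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


# Hypothetical outbound connections per hour for one host.
baseline = [100, 110, 95, 105, 98, 102, 107]
print(is_anomalous(baseline, 104))
print(is_anomalous(baseline, 400))
```

No signature is involved: the check only asks whether current activity looks like past activity for the same host.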

Multi-Stage Attack Correlation

Advanced threats rarely occur in a single step. AI agents can:

- Track attack chains across days or weeks

- Connect seemingly unrelated events

- Identify intent rather than isolated actions

This is a major improvement over traditional SIEM-driven detection models.
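Tracking an attack chain over days can be sketched as ordering events per host and checking whether they progress through successive stages inside a time window. The stage list below is a simplified assumption, and the greedy matching anchors each chain at the host's first event, so it is a sketch rather than a complete correlation engine.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumption: a simplified, ordered set of attack stages for illustration.
STAGES = ["initial_access", "privilege_escalation", "lateral_movement", "exfiltration"]


@dataclass(frozen=True)
class StageEvent:
    host: str
    stage: str         # must be one of STAGES
    seen_at: datetime


def find_chains(events, window=timedelta(days=14), min_stages=3):
    """Return hosts where stages were observed in order within the window."""
    by_host = {}
    for e in sorted(events, key=lambda e: e.seen_at):
        by_host.setdefault(e.host, []).append(e)

    chains = {}
    for host, items in by_host.items():
        chain, last_idx = [], -1
        for e in items:
            if chain and e.seen_at - chain[0].seen_at > window:
                break
            idx = STAGES.index(e.stage)
            if idx > last_idx:          # only accept a later stage
                chain.append(e)
                last_idx = idx
        if len(chain) >= min_stages:
            chains[host] = [e.stage for e in chain]
    return chains


t0 = datetime(2024, 3, 1)
events = [
    StageEvent("db-1", "initial_access", t0),
    StageEvent("db-1", "privilege_escalation", t0 + timedelta(days=3)),
    StageEvent("db-1", "exfiltration", t0 + timedelta(days=9)),
    StageEvent("web-2", "lateral_movement", t0),
    StageEvent("web-2", "initial_access", t0 + timedelta(days=1)),
]
chains = find_chains(events)
```

The three `db-1` events are spread over nine days and would look unrelated in isolation; viewed as an ordered progression they form a chain, while the out-of-order `web-2` events do not.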

Agentic AI in Cybersecurity Penetration Testing

Another emerging use case is agentic AI in cybersecurity penetration testing.

Automated Reconnaissance and Exploitation

AI agents can:

- Scan environments for misconfigurations

- Identify exploitable paths

- Simulate attacker behavior

This allows organizations to continuously test defenses rather than relying on periodic manual assessments.

Agentic AI Architectures in Penetration Testing

In advanced setups, multiple agents collaborate:

- One agent performs reconnaissance

- Another attempts exploitation

- A third evaluates defensive responses

This multi-agent approach mirrors real-world attacker strategies and provides deeper security insights.

Real-World Agentic AI in Cybersecurity Examples

While fully autonomous cyber defense systems remain rare, several real-world implementations demonstrate practical value.

Security Operations Centers (SOCs)

AI agents assist analysts by:

- Triaging alerts

- Investigating incidents

- Suggesting remediation steps

This allows SOC teams to scale without proportional increases in headcount.

Cloud and Enterprise Security

In complex cloud environments, AI agents help:

- Monitor dynamic workloads

- Detect misconfigurations

- Enforce security policies in real time

These agentic AI applications in cybersecurity are particularly valuable for large enterprises.

Benefits of Agentic AI Platforms in Cybersecurity

Organizations exploring agentic AI platforms often cite the following benefits:

- Reduced detection and response times

- Lower analyst workload

- Improved threat coverage

- Better scalability

- Enhanced resilience against evolving threats

For businesses working with an AI agent development company, these benefits translate into measurable security ROI when implemented correctly.

The Hype Around AI Agents in Cybersecurity

Despite real progress, the market is flooded with exaggerated claims.

Common Misconceptions

- “Fully autonomous security without humans”

- “One AI agent replaces the entire SOC”

- “Instant immunity to cyberattacks”

In reality, most AI agents are assistive, not fully autonomous, and require careful governance.

Key Limitations of AI Agents (So Far)

AI agents in cybersecurity still face important constraints.

- Data Quality and Bias: Agents are only as good as the data they learn from. Poor telemetry leads to poor decisions.

- Explainability and Trust: Security teams must understand why an agent made a decision—especially in regulated environments.

- Adversarial Manipulation: Attackers can attempt to deceive or poison AI models, making robust design essential.

When Organizations Should Consider AI Agents

AI agents are best suited for:

- Large or complex environments

- High alert volumes

- Limited security staffing

- Cloud-native or hybrid infrastructures

Engaging experienced AI consultants early helps align expectations with real capabilities.

Security, Governance, and Ethical Risks of AI Agents in Cybersecurity

As powerful as AI agents in cybersecurity can be, their deployment introduces new categories of risk that organizations must actively manage.

Autonomous Decision-Making Risks

AI agents can:

- Block users

- Isolate systems

- Trigger containment actions

If these decisions are made without proper safeguards, they may:

- Disrupt business operations

- Lock out legitimate users

- Cause cascading failures

This is why most production systems still rely on human-in-the-loop or human-on-the-loop models rather than full autonomy.

Explainability and Auditability

One of the biggest challenges with agentic AI is explainability.

Security teams, auditors, and regulators need to know:

- Why an agent flagged an event

- Why it chose a specific response

- What data influenced its decision

Lack of transparency can limit adoption, especially in regulated industries such as finance, healthcare, and critical infrastructure.
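One practical way to support the three questions above is to have the agent write a structured record for every decision. The sketch below assumes a hypothetical `DecisionRecord` schema; the field names are illustrative and not drawn from any standard or product.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    event_id: str
    flagged_because: str       # why the agent flagged the event
    response: str              # which response it chose
    response_rationale: str    # why it chose that response
    evidence: list             # what data influenced the decision
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self):
        """Serialize with stable key order so records are easy to diff and audit."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    event_id="evt-001",
    flagged_because="login from new device followed by bulk file download",
    response="isolate_endpoint",
    response_rationale="matches containment policy for suspected data theft",
    evidence=["auth log entry", "file server access log"],
)
print(record.to_json())
```

Writing these records to append-only storage gives auditors a trail that does not depend on re-running the model.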

Adversarial Attacks Against AI Agents

AI agents themselves can become targets.

Potential attack vectors include:

- Data poisoning

- Prompt manipulation (for LLM-based agents)

- Model evasion techniques

This makes the security of AI agents a growing discipline of its own, requiring hardened architectures and continuous monitoring.

Human-in-the-Loop vs Fully Autonomous AI Agents

A critical design decision in agentic AI is the level of autonomy granted.

Human-in-the-Loop Models

In this setup:

- AI agents investigate and recommend actions

- Human analysts approve or reject responses

This model is currently the most common and trusted in enterprise cybersecurity.

Human-on-the-Loop Models

Here:

- AI agents act autonomously within predefined limits

- Humans monitor outcomes and intervene when needed

This strikes a balance between speed and control.
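"Predefined limits" can be made concrete as a guardrail object the agent must consult before acting. The following is a minimal sketch under assumed limits (an action allowlist, a set of protected targets, and an action budget); the names are hypothetical, and resetting the budget per review period is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    allowed_actions: frozenset
    protected_targets: frozenset
    max_actions: int                       # budget per review period
    taken: list = field(default_factory=list)

    def permit(self, action, target):
        """Return (allowed, reason); record the action only when allowed."""
        if action not in self.allowed_actions:
            return False, "action not allowed"
        if target in self.protected_targets:
            return False, "protected target"
        if len(self.taken) >= self.max_actions:
            return False, "action budget exhausted"
        self.taken.append((action, target))
        return True, "ok"


guard = Guardrails(
    allowed_actions=frozenset({"block_ip", "isolate_endpoint"}),
    protected_targets=frozenset({"domain-controller-1"}),
    max_actions=2,
)
print(guard.permit("block_ip", "203.0.113.7"))
print(guard.permit("isolate_endpoint", "domain-controller-1"))
```

Humans monitoring the system can review `taken` after the fact and tighten or loosen the limits, which is what distinguishes this model from requiring approval before every action.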

Fully Autonomous Models (Still Experimental)

Fully autonomous AI agents that operate without human oversight remain largely experimental and are typically confined to:

- Narrow use cases

- Sandboxed environments

Most organizations are not ready for full autonomy—and vendors claiming otherwise should be evaluated carefully.

Cost, Deployment, and ROI Considerations

Adopting AI agents in cybersecurity is not just a technical decision—it’s a business one.

AI Agent Development Cost

The AI agent development cost depends on factors such as:

- Scope of autonomy

- Number of integrated systems

- Complexity of AI models

- Custom vs off-the-shelf solutions

- Ongoing training and maintenance

Organizations working with an experienced AI agent development company can significantly reduce trial-and-error costs.

Build vs Buy vs Hybrid

Companies typically choose between:

- Buying agentic capabilities from vendors

- Building custom agents in-house

- Adopting a hybrid approach

For most enterprises, hybrid models—combining commercial platforms with custom agents—offer the best balance.

Measuring ROI

ROI from AI agents often appears as:

- Reduced mean time to detect (MTTD)

- Reduced mean time to respond (MTTR)

- Lower analyst burnout

- Improved security coverage without headcount growth

These metrics matter more than raw automation percentages.
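MTTD and MTTR can be computed directly from incident timestamps. The sketch below assumes MTTD is measured from occurrence to detection and MTTR from detection to resolution; definitions vary between teams, and the incident data is made up for illustration.

```python
from datetime import datetime, timedelta


def mean_delta(pairs):
    """Average the (end - start) duration over a non-empty list of pairs."""
    deltas = [end - start for start, end in pairs]
    return sum(deltas, timedelta()) / len(deltas)


# Hypothetical incidents with three timestamps each.
incidents = [
    {
        "occurred": datetime(2024, 5, 1, 8, 0),
        "detected": datetime(2024, 5, 1, 10, 0),
        "resolved": datetime(2024, 5, 1, 14, 0),
    },
    {
        "occurred": datetime(2024, 5, 2, 9, 0),
        "detected": datetime(2024, 5, 2, 9, 30),
        "resolved": datetime(2024, 5, 2, 11, 30),
    },
]

mttd = mean_delta([(i["occurred"], i["detected"]) for i in incidents])
mttr = mean_delta([(i["detected"], i["resolved"]) for i in incidents])
print(mttd, mttr)  # 1:15:00 3:00:00
```

Comparing these values before and after an agent deployment, over the same incident categories, gives a more honest ROI picture than counting automated actions.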

How to Choose AI Agent Companies and Vendors

Not all vendors offering “AI agents” deliver true agentic capabilities.

What to Look For

When evaluating AI agent companies, organizations should assess:

- Clear agent architecture (not just ML models)

- Proven production deployments

- Explainability and audit controls

- Security of the AI system itself

- Integration with existing security tools

Vendors that support developers with APIs and customization options for their AI agents tend to offer greater long-term flexibility.

Avoiding Vendor Hype

Red flags include:

- Claims of fully autonomous security with no oversight

- Lack of technical transparency

- Overreliance on buzzwords without architectural clarity

Experienced AI consultants can help organizations separate real capability from marketing.

Agentic AI and the Broader AI Security Ecosystem

AI agents do not operate in isolation—they are part of a broader ecosystem of AI tools, platforms, and workflows.

Integration with Existing Security Stack

Successful deployments integrate with:

- SIEM and SOAR platforms

- Endpoint detection and response (EDR)

- Cloud security tools

- Identity and access management

This integration allows agents to act contextually rather than in silos.

Role of AI Models and Algorithms

Agentic systems rely on multiple AI models and algorithms, including:

- Anomaly detection models

- Classification models

- Large language models for reasoning

- Reinforcement learning for optimization

Understanding how these components interact is essential for secure deployment.

Future Trends in AI Agents in Cybersecurity

Looking ahead, several trends will shape the next generation of agentic AI.

Multi-Agent Collaboration

Instead of single agents, future systems will use:

- Coordinated teams of specialized agents

- Task-specific collaboration

- Distributed decision-making

This mirrors how human security teams operate.

Agentic AI and Zero Trust

AI agents will increasingly support zero-trust architectures by:

- Continuously validating trust

- Monitoring identity behavior

- Enforcing adaptive access controls
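Adaptive access control can be sketched as a risk score that is recomputed on each request and mapped to allow, step-up authentication, or deny. The signal names, weights, and cutoffs below are illustrative assumptions, not values from any zero-trust standard.

```python
# Assumption: hand-picked weights for a few identity-behavior signals.
WEIGHTS = {
    "new_device": 0.3,
    "impossible_travel": 0.5,
    "off_hours": 0.2,
}


def risk_score(signals):
    """Sum the weights of the signals that are present, capped at 1.0."""
    total = sum(w for name, w in WEIGHTS.items() if signals.get(name))
    return min(1.0, total)


def access_decision(signals, step_up_at=0.25, deny_at=0.7):
    """Map the current risk score to an access outcome."""
    score = risk_score(signals)
    if score >= deny_at:
        return "deny"
    if score >= step_up_at:
        return "step_up_auth"
    return "allow"


print(access_decision({}))
print(access_decision({"new_device": True}))
print(access_decision({"new_device": True, "impossible_travel": True}))
```

Because the decision is re-evaluated per request rather than once at login, trust is validated continuously, which is the behavior the list above describes.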

Self-Healing Security Systems

Long-term, AI agents may enable:

- Automatic configuration repair

- Dynamic policy adjustment

- Resilience-driven security architectures

This represents a shift from reactive defense to adaptive resilience.

Regulation and Standardization

As adoption grows, expect:

- Industry standards for agentic AI

- Regulatory guidance on autonomous security systems

- Stronger governance requirements

Organizations that prepare early will adapt more smoothly.

What Organizations Should Do Next

For leaders evaluating AI agents in cybersecurity, the next steps should be pragmatic and deliberate. Start small by deploying agents for narrow, high-impact use cases such as alert triage or investigation assistance. Maintain human oversight throughout, and avoid moving to full autonomy before governance and trust frameworks are in place.

Rather than chasing hype, the focus should be on sound agent architecture, high-quality data, and seamless integration with existing security systems. Working with an experienced AI agent development company or AI development services provider can further reduce implementation risk and accelerate real, measurable value.

Conclusion

AI agents in cybersecurity represent a meaningful evolution in how organizations defend digital assets. When implemented thoughtfully, they enhance detection, accelerate response, and help security teams operate at scale. However, they are not a silver bullet.

The real value of agentic AI lies not in replacing humans, but in augmenting them—handling complexity, reducing noise, and enabling faster, more informed decisions. Organizations that approach AI agents with clarity, governance, and realistic expectations will be best positioned to benefit as this technology matures.

As agentic AI continues to evolve, it will become a defining pillar of modern cybersecurity strategy—one that blends intelligence, autonomy, and human judgment into a more resilient defense posture.

Frequently Asked Questions (FAQs)

Q. What are AI agents in cybersecurity?

A. AI agents in cybersecurity are autonomous or semi-autonomous systems that can observe security environments, analyze threats, make decisions, and take or recommend actions. Unlike traditional tools, they adapt continuously to evolving attack patterns.

Q. What is agentic AI in cybersecurity?

A. Agentic AI in cybersecurity refers to AI systems designed to act as agents rather than passive analytics tools. These systems combine perception, reasoning, decision-making, and action to assist or automate security operations.

Q. How do AI agents improve threat detection?

A. AI agents improve threat detection by analyzing behavioral patterns, correlating signals across systems, and identifying anomalies that signature-based tools often miss. This makes them effective against advanced and zero-day threats.

Q. Are AI agents in cybersecurity fully autonomous?

A. Most AI agents in cybersecurity today are not fully autonomous. They typically operate in human-in-the-loop or human-on-the-loop models to ensure oversight, reduce risk, and meet compliance requirements.

Q. What are the key benefits of AI agents in cybersecurity?

A. Key benefits include faster threat detection and response, reduced alert fatigue, improved scalability of security operations, and better adaptation to new and emerging threats.

Q. What are the limitations of AI agents in cybersecurity?

A. Limitations include dependence on data quality, challenges with explainability, vulnerability to adversarial attacks, and the need for strong governance. These factors mean AI agents should augment, not replace, human security teams.

Q. How should organizations get started with AI agents in cybersecurity?

A. Organizations should begin with focused use cases such as alert triage or incident investigation, maintain human oversight, and work with experienced AI development services providers to design secure and scalable agent architectures.
