AI Copilot Architecture Guide: Designing Scalable Systems with LLM, RAG, and APIs

Creating an AI copilot is not merely a matter of plugging in a language model and crossing your fingers. If you have tried that, you have likely run into its limits: generic responses, outdated information, or answers that sound confident but are off the mark.

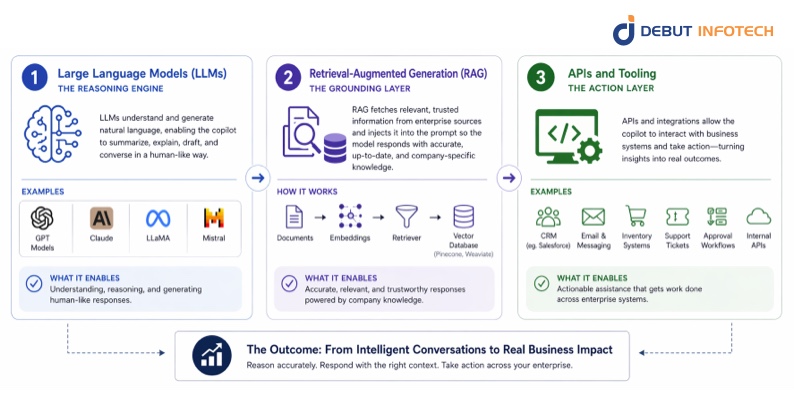

This is why modern systems rely on a more structured approach to AI copilot architecture, one that integrates large language models (LLMs), retrieval-augmented generation (RAG), and APIs into a unified, production-ready stack.

In simple terms, LLMs handle reasoning and language, RAG brings in the right data at the right time, and APIs let the copilot do things, not just talk. When these pieces are designed well, they let you move beyond a simple chatbot and deliver real workflows, decisions, and results in your system.

In this guide, we will go beyond a surface-level description of this architecture and walk through its practical implementation. You will learn how to design your pipeline, which mistakes teams commonly make, and how to build an AI copilot that is precise, scalable, and genuinely helpful.

If your goal is to move from experimentation to real-world deployment, you’re in the right place.

What Is an AI Copilot Architecture?

At its core, AI copilot architecture is the system design that allows an AI assistant to do three things well:

- Understand what a user is asking

- Find the right information

- Respond in a useful, context-aware way

It is not a single model operating on its own. Rather, it is a set of components that interact with each other: usually a language model, a retrieval layer, and connections to external tools or APIs.

A useful way to think about it:

- The language model is the brain

- The retrieval system provides memory and up-to-date knowledge

- The APIs/tools allow the system to take action

When these pieces are properly connected, the result is not just an AI that talks, but one that actually helps.

Chatbot vs Copilot vs Agent (Key Differences)

These terms are often used interchangeably, but they are not the same. Understanding the difference helps clarify why this architecture matters.

| Feature | Chatbot | Copilot | Agent |
| --- | --- | --- | --- |
| Primary Role | Answer questions | Assist users in tasks | Act autonomously |
| Level of Intelligence | Basic/rule-based or limited AI | Context-aware and adaptive | Advanced reasoning and planning |
| Context Awareness | Low | High | Very high |
| Ability to Take Action | Minimal | Moderate (via tools/APIs) | High (multi-step execution) |
| User Interaction Style | Reactive | Collaborative | Independent |
| Best Use Case | FAQs, simple support | Productivity, decision support | Complex workflows, automation |

Why This Architecture Is Becoming the Standard

User expectations have changed, and fast. Today, people are no longer impressed by tools that simply generate text. Instead, they expect accuracy, relevance, and real usefulness in their day-to-day work.

What Users No Longer Accept

In earlier AI experiences, it was common to encounter responses that sounded polished but failed to deliver meaningful value. These systems often missed context, produced generic outputs, and required users to repeatedly refine prompts just to get something usable. Over time, this created frustration rather than efficiency, as users found themselves doing extra work instead of saving time.

What Users Expect Today

Today’s users expect far more from AI systems, particularly in professional and operational settings. They want AI frameworks that can interpret intent accurately, draw from reliable and up-to-date information, and align with how they already work. Rather than acting as standalone tools, AI systems are expected to integrate smoothly into workflows and support real decision-making processes.

Why AI Copilot Architecture Meets These Expectations

This is where AI copilot architecture becomes essential. By combining language models with retrieval systems and API integrations, it ensures that responses are grounded in relevant data, informed by context, and capable of triggering real actions when needed. Instead of producing isolated answers, the system becomes part of a larger workflow, supporting users in completing tasks more efficiently and with greater confidence.

As a result, this architecture is no longer just an advanced option; it is increasingly becoming the standard for building AI systems that people can rely on in real-world scenarios.

Core Components Explained

AI copilot architecture is often described as more complex than it needs to be.

In practice, most enterprise copilots are built from three main components working together.

First, there is the large language model, which understands and generates language. Second, there is the retrieval layer, which brings in relevant company knowledge. Third, there is the API and tooling layer, which lets the copilot take action within real business systems.

When these three layers are designed well, the result is more than an AI tool that speaks well. It becomes a system that can reason, respond with the right context, and support real work across the business.

1. Large Language Models (LLMs): the reasoning engine

Large language models are the foundation of the copilot experience.

This is the layer that enables a copilot to summarize documents, answer questions, draft content, explain technical concepts, and respond in a way that feels conversational rather than robotic.

Examples include GPT models, Claude, and open-source models like LLaMA and Mistral. Each has its own advantages, whether stronger reasoning, lower cost, better privacy, or more flexibility for customization.

However, businesses quickly run into a significant constraint: LLMs on their own are not enough. They can sound certain even when an answer is incomplete, outdated, or simply wrong. That is why organizations building serious enterprise AI solutions do not rely on the model alone. They surround it with mechanisms that improve accuracy, control, and trust.

2. Retrieval-Augmented Generation (RAG): the grounding layer

If the LLM is the reasoning engine, Retrieval-Augmented Generation (RAG) is what keeps it grounded.

RAG works by retrieving relevant information from trusted data sources and adding it to the prompt before the model generates a response. This lets the copilot work with current, organization-specific knowledge instead of relying only on what the model learned during training.

In a business context, these sources might include internal documentation, policy manuals, customer databases, product manuals, support articles, or knowledge-base content. This makes responses more accurate, more useful, and far more relevant to the business.

A typical RAG pipeline combines embeddings, a retriever, and a vector database such as Pinecone or Weaviate. Together, these components find the pieces of information most relevant to what the user is asking.
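To show how these pieces fit together, here is a minimal in-memory stand-in for a vector database, written in Python. It is a sketch under simplifying assumptions: `InMemoryVectorStore` is a hypothetical class, the embeddings are hand-written toy vectors rather than output from a real embedding model, and search is a brute-force scan over every stored chunk, whereas production vector databases such as Pinecone or Weaviate use approximate nearest-neighbor indexes to scale.

```python
import math
from dataclasses import dataclass, field


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else v


@dataclass
class InMemoryVectorStore:
    """Hypothetical toy stand-in for a vector database."""
    texts: list[str] = field(default_factory=list)
    vectors: list[list[float]] = field(default_factory=list)

    def add(self, text: str, embedding: list[float]) -> None:
        # Normalize once at write time so each query only needs a dot product.
        self.texts.append(text)
        self.vectors.append(normalize(embedding))

    def search(self, query_embedding: list[float], top_k: int = 3) -> list[tuple[str, float]]:
        q = normalize(query_embedding)
        scored = [
            (text, sum(a * b for a, b in zip(q, vec)))
            for text, vec in zip(self.texts, self.vectors)
        ]
        # Highest similarity first; keep only the top_k chunks.
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_k]


# Toy usage: the vectors are made up; a real system would get them from an embedding model.
store = InMemoryVectorStore()
store.add("Refunds are accepted within 30 days of purchase.", [0.9, 0.1, 0.0])
store.add("Standard shipping takes 3-5 business days.", [0.1, 0.9, 0.0])
results = store.search([0.8, 0.2, 0.0], top_k=1)
```

Normalizing vectors when they are stored means each query only needs a dot product to obtain cosine similarity, a common way to keep the retrieval step cheap.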

A simple real-world example is a customer support copilot. Instead of giving a canned response, it can draw on internal documentation and give advice that accurately reflects the company's actual processes and approved information.

This is one of the biggest reasons RAG has become central to enterprise AI architecture: it helps reduce hallucinations and makes the copilot more reliable. At the same time, it brings trade-offs. Retrieval adds extra steps, and unless those steps are streamlined, the system can become slower. That is why document chunking, ranking logic, caching, and prompt design matter so much in production.

3. APIs and tooling: the action layer

A strong copilot should not stop at answering questions. Much of the real value comes from helping users get things done inside enterprise applications.

This is where APIs and tooling come in.

This layer enables the copilot to interface with other systems and take action. For example, it can retrieve customer data from a CRM, send an email, check inventory, create a support ticket, start an approval workflow, or fetch data from an internal microservice.
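One common way to structure this layer is a registry that maps tool names to functions, so the model can request an action by name while the application decides whether and how to run it. The Python sketch below is illustrative only: the tool names, the JSON shape `{"name": ..., "arguments": {...}}`, and the hard-coded return values are hypothetical placeholders, and each LLM provider defines its own tool-calling format.

```python
import json
from typing import Any, Callable

# Hypothetical registry: tool name -> Python function the copilot may call.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that registers a function under a tool name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return register


@tool("check_inventory")
def check_inventory(sku: str) -> dict:
    # Placeholder data; a real version would call an inventory API.
    stock = {"SKU-1": 12, "SKU-2": 0}
    return {"sku": sku, "in_stock": stock.get(sku, 0)}


@tool("create_ticket")
def create_ticket(subject: str, priority: str = "normal") -> dict:
    # Placeholder; a real version would call a ticketing system's API.
    return {"ticket_id": "T-001", "subject": subject, "priority": priority}


def dispatch(tool_call_json: str) -> Any:
    """Run a tool call the model emitted as JSON, rejecting unknown tools."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


result = dispatch('{"name": "check_inventory", "arguments": {"sku": "SKU-1"}}')
print(result)  # {'sku': 'SKU-1', 'in_stock': 12}
```

Keeping the registry on the application side means the model can only trigger actions you have explicitly exposed.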

This turns the copilot from a passive assistant into a practical business tool.

That distinction matters. Most enterprise teams do not need AI that merely sounds clever. They want something that saves time, reduces manual work, and becomes part of daily operations.

GitHub Copilot is a useful example. Its value does not come only from suggesting code; it comes from working inside the editor, where developers already do their work. The same principle applies in enterprise environments: the more closely a copilot is connected to real systems and real work, the more useful it becomes.

How do LLMs, RAG pipelines, and APIs work together in an AI copilot system?

The real strength of an enterprise copilot does not come from any one component in isolation. It comes from how these layers work together.

The LLM gives the system language understanding and reasoning.

RAG provides it with access to relevant, trusted knowledge.

APIs and tools enable it to take action within real workflows. Together, they form a copilot that is not just intelligent, but grounded and operational. That is the difference between a simple chatbot and a truly effective enterprise AI system.

How It All Works Together (Step-by-Step Flow)

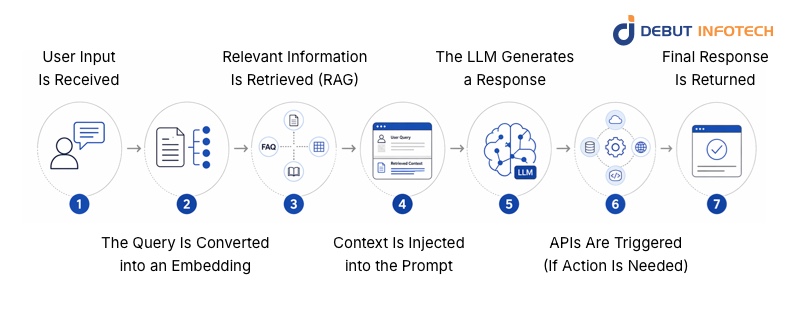

So what actually happens when someone asks an AI copilot a question?

On the surface, it feels instant. Behind that smooth answer, however, is a well-planned pipeline that makes the answer relevant, accurate and actionable.

Let’s break it down in a clear, step-by-step way.

1. User Input Is Received

Every interaction begins when a user submits a request, but this input can vary significantly in complexity. Sometimes it is a simple question, while other times it may involve a multi-step instruction or a task connected to a broader workflow. At this stage, the system does more than just capture text; it works to interpret the user’s intent and determine what outcome is expected.

To do this effectively, the input is often pre-processed by cleaning unnecessary noise, structuring the request, and preparing it for downstream operations. This early preparation plays a crucial role in ensuring that the rest of the pipeline functions smoothly and produces meaningful results.
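As a rough illustration of the pre-processing step, here is a minimal Python sketch. The cleaning rules (Unicode normalization, removing control characters, collapsing whitespace, and a length cap) and the names `preprocess`, `PreparedQuery`, and `MAX_QUERY_CHARS` are assumptions for illustration; real systems may also detect language, classify intent, or redact sensitive data at this point.

```python
import re
import unicodedata
from dataclasses import dataclass

MAX_QUERY_CHARS = 2000  # assumed limit; tune to your model and use case


@dataclass
class PreparedQuery:
    raw: str         # exactly what the user typed
    text: str        # cleaned text passed on to embedding and retrieval
    truncated: bool  # True if the cleaned text exceeded MAX_QUERY_CHARS


def preprocess(raw: str) -> PreparedQuery:
    """Clean user input before it enters the rest of the pipeline."""
    # Normalize Unicode so equivalent characters (e.g. full-width letters) compare equal.
    text = unicodedata.normalize("NFKC", raw)
    # Remove control characters such as NUL, keeping newlines and tabs for the next step.
    text = "".join(ch for ch in text if ch in "\n\t" or unicodedata.category(ch) != "Cc")
    # Collapse all runs of whitespace into single spaces and trim the ends.
    text = re.sub(r"\s+", " ", text).strip()
    truncated = len(text) > MAX_QUERY_CHARS
    return PreparedQuery(raw=raw, text=text[:MAX_QUERY_CHARS], truncated=truncated)


q = preprocess("  Cancel   my\torder\x00 #1234 \n")
print(q.text)  # Cancel my order #1234
```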

2. The Query Is Converted into an Embedding

After understanding the request, the system converts the user input into an embedding. This involves transforming the text into a numerical representation that captures meaning rather than relying solely on keywords or exact phrasing.

This approach allows the system to recognize relationships between different expressions of the same idea. For example, phrases like “cancel my order” and “stop my purchase” may look different on the surface, but embeddings help the system understand that they are closely related in intent. This semantic understanding is essential for retrieving accurate and relevant information in the next stage.
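To make "closely related" concrete: embeddings are commonly compared with cosine similarity, which measures how closely two vectors point in the same direction. The Python sketch below uses small made-up vectors purely for illustration; in a real system they would come from an embedding model and have far more dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """1.0 means the vectors point the same way; values near 0 mean unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Made-up 3-dimensional vectors for illustration only. Real embeddings come from
# an embedding model and usually have hundreds or thousands of dimensions.
cancel_my_order = [0.82, 0.51, 0.10]
stop_my_purchase = [0.79, 0.55, 0.12]
track_my_shipment = [0.10, 0.20, 0.95]

similar = cosine_similarity(cancel_my_order, stop_my_purchase)     # close to 1.0
different = cosine_similarity(cancel_my_order, track_my_shipment)  # much lower
```

With vectors like these, "cancel my order" and "stop my purchase" score far higher against each other than against an unrelated request, even though they share almost no words.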

3. Relevant Information Is Retrieved (RAG)

With the embedding created, the system moves into the retrieval phase using Retrieval-Augmented Generation (RAG). Instead of depending entirely on pre-trained knowledge, the system actively searches external data sources to find information that matches the user’s request.

These sources can include internal documents, FAQs, product databases, or structured knowledge bases. The goal is to identify and extract the most relevant pieces of information that will help generate a meaningful response. The effectiveness of this step directly impacts the quality of the final output, as even the most advanced models rely on the relevance and accuracy of the data they receive.

4. Context Is Injected into the Prompt

Once the relevant information has been retrieved, it is combined with the original query to form a more detailed and context-rich prompt. This step ensures that the model has access to up-to-date and domain-specific knowledge when generating its response.

By grounding the model in real data, the system significantly reduces the likelihood of hallucinations and improves the reliability of the output. Instead of relying on general knowledge alone, the AI can now respond with information that is aligned with the user’s specific context and needs.
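A minimal sketch of this context-injection step in Python. The prompt wording, the numbered-source format, and the `build_prompt` name and `max_chunks` parameter are assumptions; prompt templates vary by model and use case, and the chunks would normally come from the retrieval step rather than being hard-coded.

```python
def build_prompt(question: str, chunks: list[str], max_chunks: int = 3) -> str:
    """Combine retrieved context and the user's question into one prompt."""
    # Number each chunk so the model (and human reviewers) can refer to sources.
    context = "\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(chunks[:max_chunks], start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_prompt(
    "How long do refunds take?",
    [
        "Refunds are processed within 5 business days.",
        "Refund requests must include an order ID.",
    ],
)
```

Instructing the model to admit when the context lacks an answer is one simple way to discourage it from filling gaps with guesses.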

5. The LLM Generates a Response

At this stage, the language model processes both the original query and the injected context to produce a response. Because the model is working with enriched input, the output is more coherent, relevant, and aligned with the user’s expectations.

The quality of this response depends heavily on the earlier stages, particularly the accuracy of retrieval and the clarity of the prompt. When these elements are well-optimized, the model is able to generate responses that feel natural while still being grounded in reliable information.

6. APIs Are Triggered (If Action Is Needed)

In many cases, the user’s request goes beyond simply receiving an answer. When an action is required, the system uses APIs to interact with external services or internal systems. This could involve fetching real-time data, updating records, initiating workflows, or communicating with other applications.

This layer is what transforms the system from a conversational assistant into a functional copilot. It enables the AI to actively participate in tasks rather than just describing them. To ensure reliability, teams working with MLOps platforms often implement safeguards such as error handling, retry mechanisms, and monitoring systems to manage potential API failures and maintain smooth operation.
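Retries are one of the most common of these safeguards. The Python sketch below retries a failing call with exponential backoff and a little random jitter, and logs each failure so it can be monitored. `TransientAPIError` and `call_with_retries` are hypothetical names; in practice you would retry only errors that are safe to repeat, such as timeouts or rate limits, and take care with actions that are not idempotent (for example, sending an email twice).

```python
import logging
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("copilot.api")


class TransientAPIError(Exception):
    """Hypothetical error for failures worth retrying (timeouts, rate limits, 5xx)."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff plus jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return fn()
        except TransientAPIError as exc:
            if attempt >= max_attempts:
                log.error("API call failed after %d attempts: %s", attempt, exc)
                raise
            # With the default base_delay: 0.5s, 1s, 2s, ... plus up to 0.1s of jitter.
            delay = base_delay * 2 ** (attempt - 1) + random.uniform(0, 0.1)
            log.warning("Attempt %d failed (%s); retrying in %.2fs", attempt, exc, delay)
            sleep(delay)
            attempt += 1


# Usage: a flaky lookup that times out twice, then succeeds.
attempts = {"n": 0}


def flaky_order_lookup() -> dict:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientAPIError("timeout")
    return {"order_id": "A-100", "status": "shipped"}


delays: list[float] = []
order = call_with_retries(flaky_order_lookup, sleep=delays.append)  # record delays instead of sleeping
```

Passing `sleep` in as a parameter keeps the function easy to test without actually waiting.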

7. Final Response Is Returned

After processing the request and completing any necessary actions, the system delivers the final response back to the user. Although multiple components have worked together behind the scenes, the goal is to present the result in a way that feels seamless and intuitive.

When everything is functioning correctly, the user experiences a fast and natural interaction without being aware of the complexity involved in generating the response.
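The seven steps above can be wired together in a small orchestration layer. The following Python sketch is deliberately simplified: `CopilotPipeline` is a hypothetical class, each stage is passed in as a plain function, and the stubs in the usage example stand in for a real embedding model, vector database, LLM, and business API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CopilotPipeline:
    """Hypothetical orchestrator; each stage is injected as a plain function."""
    preprocess: Callable[[str], str]
    retrieve: Callable[[str], list[str]]  # embeds the query and searches the vector store
    generate: Callable[[str], str]        # wraps the LLM call
    act: Callable[[str], Optional[tuple[str, str]]]  # (tool, result) if an action ran, else None

    def handle(self, user_input: str) -> str:
        query = self.preprocess(user_input)                   # 1. receive and clean input
        chunks = self.retrieve(query)                         # 2-3. embed and retrieve
        context = "\n".join(chunks)
        prompt = f"Context:\n{context}\n\nQuestion: {query}"  # 4. inject context
        answer = self.generate(prompt)                        # 5. generate a response
        action = self.act(query)                              # 6. call an API if needed
        if action is not None:
            tool_name, result = action
            answer += f"\n\n(Action {tool_name}: {result})"
        return answer                                         # 7. return the final response


# Stubs standing in for real components.
pipeline = CopilotPipeline(
    preprocess=lambda text: " ".join(text.split()),
    retrieve=lambda q: ["Orders can be cancelled before they ship."],
    generate=lambda prompt: "Your order can be cancelled because it has not shipped yet.",
    act=lambda q: ("cancel_order", "cancelled") if "cancel" in q.lower() else None,
)
reply = pipeline.handle("  Cancel my   order ")
```

This sketch follows the order of the steps above. Many real systems instead let the model decide which tool to call before writing its final answer; injecting each stage as a function makes either arrangement easy to swap in and test.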

Performance Reality: Latency & Caching

While the architecture may appear straightforward, performance is a critical factor that cannot be overlooked. Each step in the pipeline, including retrieval, prompt construction, model processing, and API interactions, adds to the total response time. If these processes are not optimized, the system may feel slow and unresponsive.

To address this challenge, teams rely on caching strategies to improve efficiency. Instead of recomputing results for every request, the system stores frequently used data such as common queries, retrieved documents, embeddings, and API responses. By reusing this information when appropriate, the system can significantly reduce latency, lower operational costs, and deliver more consistent performance.
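A simple version of this idea is a cache with a time-to-live (TTL), so stored results expire and are eventually refreshed. The Python sketch below is illustrative: `TTLCache` and `cached_call` are hypothetical helpers, the 60-second TTL is arbitrary, and production systems often use a shared cache such as Redis rather than an in-process dictionary.

```python
import time
from typing import Any, Callable, Hashable, Optional


class TTLCache:
    """In-process cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so tests can control time
        self._data: dict[Hashable, tuple[float, Any]] = {}

    def get(self, key: Hashable) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._data[key]  # stale: force the caller to recompute
            return None
        return value

    def set(self, key: Hashable, value: Any) -> None:
        self._data[key] = (self.clock() + self.ttl, value)


def cached_call(cache: TTLCache, key: Hashable, compute: Callable[[], Any]) -> Any:
    """Return a fresh cached value, or compute, store, and return a new one.

    Note: a cached value of None is treated as a miss in this sketch.
    """
    value = cache.get(key)
    if value is None:
        value = compute()
        cache.set(key, value)
    return value


# Usage with a fake clock so expiry can be demonstrated instantly.
now = [0.0]
cache = TTLCache(ttl_seconds=60, clock=lambda: now[0])
calls: list[int] = []


def expensive_retrieval() -> list[str]:
    calls.append(1)  # count how often the slow path actually runs
    return ["Refund policy chunk"]


cached_call(cache, "refund policy", expensive_retrieval)  # computed
cached_call(cache, "refund policy", expensive_retrieval)  # served from cache
now[0] = 61.0                                             # advance past the TTL
cached_call(cache, "refund policy", expensive_retrieval)  # expired, recomputed
```

The TTL is the main design lever: longer values save more time and cost, while shorter values keep answers closer to the latest source data.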

In practice, optimizing for speed is just as important as improving accuracy. A well-balanced system ensures that users receive timely responses without compromising on the quality of the information provided.

Real-World Use Cases

The real value of a copilot shows in how it performs in everyday workflows. An effective agent-based copilot does not simply generate answers; it pulls the right data, understands the context, and helps users act.

1. Customer Support Copilot

In support environments, accuracy is critical.

Using RAG, the copilot retrieves information from trusted sources like help articles, FAQs, and internal documentation. This ensures responses are grounded in real company knowledge.

With API integrations, it can also:

- Check order status

- Update tickets

- Escalate issues

This reduces manual work and helps agents resolve queries faster.

2. Developer Copilot

For developers, context makes all the difference.

The copilot pulls insights from the codebase and internal docs, allowing it to give more relevant suggestions instead of generic outputs.

Connected APIs enable it to:

- Analyse CI/CD failures

- Suggest fixes

- Support deployment workflows

This turns it into a practical, workflow-aware assistant.

3. Healthcare Assistant (High-Compliance Use Case)

Reliability is a must in healthcare.

The copilot uses RAG to reference approved clinical guidelines and organisational protocols. At the same time, strict audit logging ensures that every action is traceable and compliant.

Here, safety and accountability matter more than fluency.
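As an illustration of traceable actions, here is a minimal Python decorator that records who called an action, with which inputs, when, and whether it succeeded. `audited`, `lookup_guideline`, and the in-memory `AUDIT_LOG` list are hypothetical; a real deployment would write entries to durable, access-controlled storage.

```python
import functools
import json
from datetime import datetime, timezone
from typing import Any, Callable

# In-memory stand-in; a real deployment would use durable, access-controlled storage.
AUDIT_LOG: list[dict[str, Any]] = []


def audited(action: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Record who ran an action, with which inputs, when, and whether it succeeded."""
    def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def inner(user_id: str, **kwargs: Any) -> Any:
            entry: dict[str, Any] = {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "user_id": user_id,
                "action": action,
                "inputs": json.dumps(kwargs, sort_keys=True),
            }
            try:
                result = fn(user_id, **kwargs)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = f"error: {exc}"
                raise
            finally:
                AUDIT_LOG.append(entry)  # written on success and on failure
        return inner
    return wrap


@audited("lookup_guideline")
def lookup_guideline(user_id: str, *, topic: str) -> str:
    # Placeholder for a RAG lookup restricted to approved clinical guidelines.
    return f"Approved guideline excerpt for {topic}"


excerpt = lookup_guideline("clinician-42", topic="hypertension")
```

Logging in the `finally` block means failed attempts leave a trace too, which matters as much as successful actions in an audit.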

RAG vs Fine-Tuning vs Agents

When choosing a copilot approach, it helps to ask less "which is best?" and more "what problem am I solving?" Each option plays a different role in modern conversational AI systems, and understanding the trade-offs will save you time, money, and frustration.

Quick Comparison

| Approach | What It Does (In Practice) | Best For | Limitation |
| --- | --- | --- | --- |
| RAG (Retrieval-Augmented Generation) | Pulls in relevant, up-to-date data before generating a response | Dynamic knowledge (docs, FAQs, internal data) | Adds some latency from the retrieval step |
| Fine-Tuning | Trains the model to behave in a specific way or style | Specialized tasks (tone, format, domain-specific outputs) | Expensive to maintain; can become outdated |
| Agents | Chains reasoning steps and tools to complete tasks autonomously | Complex workflows and multi-step actions | Harder to control, test, and predict |

What This Means in Real Life

- RAG is typically the place to begin when your copilot needs accurate, up-to-date answers. It keeps responses grounded in actual data rather than relying solely on what the model remembers.

- Fine-tuning can be effective when you need consistent behavior or structured outputs, although it rarely works well in isolation.

- If your system needs to plan, make decisions, and execute many actions, agents can be useful, but only when you have good guardrails in place.
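What counts as good guardrails depends on the system, but two simple ones are an allowlist of tools the agent may call and a hard cap on the number of steps. The Python sketch below shows both. The `run_agent` function and the scripted planner are hypothetical; in a real agent, the planner would be an LLM choosing the next tool call.

```python
from typing import Callable, Optional

# A planner sees the history so far and proposes (tool_name, argument), or None when done.
Planner = Callable[[list[str]], Optional[tuple[str, str]]]


def run_agent(
    planner: Planner,
    tools: dict[str, Callable[[str], str]],
    allowed: set[str],
    max_steps: int = 5,
) -> list[str]:
    """Run a plan-act loop with two guardrails: a tool allowlist and a step limit."""
    history: list[str] = []
    for _ in range(max_steps):
        step = planner(history)
        if step is None:
            return history  # planner reports the task is complete
        tool_name, arg = step
        if tool_name not in allowed or tool_name not in tools:
            history.append(f"BLOCKED {tool_name}")
            return history  # stop rather than run an unapproved action
        history.append(f"{tool_name}({arg}) -> {tools[tool_name](arg)}")
    history.append("STOPPED: step limit reached")
    return history


def scripted_planner(history: list[str]) -> Optional[tuple[str, str]]:
    # Stand-in for an LLM: look up the order, then refund it, then finish.
    plan = [("get_order", "A-100"), ("issue_refund", "A-100")]
    return plan[len(history)] if len(history) < len(plan) else None


tools = {
    "get_order": lambda oid: f"order {oid}: delivered",
    "issue_refund": lambda oid: f"refund queued for {oid}",
}
safe = run_agent(scripted_planner, tools, allowed={"get_order", "issue_refund"})
restricted = run_agent(scripted_planner, tools, allowed={"get_order"})
```

In the restricted run, the agent may look up the order but is stopped before issuing a refund, which is the kind of boundary that keeps autonomous behavior predictable.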

Common Mistakes to Avoid

Certain failures come up again and again when building an AI copilot, especially when teams rush from prototype to production. Getting the architecture right early on, whether in-house or through AI copilot architecture consulting, can save you from expensive rework later. Here are four mistakes worth paying close attention to:

Relying too heavily on the LLM without grounding

It’s easy to trust the model to “figure things out,” but LLMs don’t know your business context unless you provide it. Without grounding (such as RAG or trusted data sources), responses may sound confident yet be inaccurate. This is one of the fastest ways to lose user trust in real deployments.
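One simple form of grounding is to decline to answer when retrieval finds nothing relevant enough, instead of letting the model fall back on its training data. In the Python sketch below, `grounded_answer` and the 0.75 similarity threshold are assumptions; a sensible threshold depends on your embedding model and should be tuned against real queries.

```python
from typing import Callable

FALLBACK = "I couldn't find this in our approved sources, so I won't guess."


def grounded_answer(
    question: str,
    retrieved: list[tuple[str, float]],  # (chunk text, similarity score) pairs
    generate: Callable[[str], str],      # wraps your LLM call
    min_score: float = 0.75,             # assumed threshold; tune per embedding model
) -> str:
    """Only call the model when retrieval found sufficiently relevant context."""
    relevant = [text for text, score in retrieved if score >= min_score]
    if not relevant:
        return FALLBACK  # refuse rather than let the model answer from memory alone
    context = "\n".join(relevant)
    return generate(f"Context:\n{context}\n\nQuestion: {question}")


# Stub model that echoes the first line of context, standing in for a real LLM.
def echo_model(prompt: str) -> str:
    return "Answer based on: " + prompt.splitlines()[1]


weak = grounded_answer("What is our uptime SLA?", [("Office hours are 9 to 5.", 0.41)], echo_model)
strong = grounded_answer("What is our uptime SLA?", [("Our SLA is 99.9% uptime.", 0.88)], echo_model)
print(weak)    # I couldn't find this in our approved sources, so I won't guess.
print(strong)  # Answer based on: Our SLA is 99.9% uptime.
```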

Poor document chunking

RAG is only as good as the data you feed it. If your documents are chunked too broadly, retrieval returns passages padded with irrelevant information. Chunk too narrowly, and you lose context. Teams that get this right usually test different chunk sizes, overlap strategies, and retrieval methods before scaling.
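A minimal Python sketch of fixed-size chunking with overlap, one of the strategies worth testing. Splitting on whitespace-separated words, the default sizes, and the `chunk_words` name are assumptions for illustration; many pipelines instead chunk by tokens or by document structure such as headings and paragraphs.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into word-based chunks; consecutive chunks share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks


doc = " ".join(f"w{i}" for i in range(10))  # "w0 w1 ... w9"
pieces = chunk_words(doc, chunk_size=4, overlap=1)
print(pieces)  # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Overlap gives each chunk some of its neighbor's text, so content near a boundary keeps part of its surrounding context, at the cost of storing some words twice.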

Ignoring API failure handling

APIs are what turn a copilot from “chatty” to “useful.” But APIs fail: timeouts happen, responses break, and permissions change. If your system doesn’t handle these failures gracefully, users get stuck or confused. A production-ready setup always includes retries, fallbacks, and clear user feedback.
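Alongside retries, a fallback layer turns failures into clear messages instead of dead ends. The Python sketch below maps a few exception types to user-facing explanations and logs each failure. The function name, the chosen exception types, and the message wording are assumptions; real integrations raise library-specific errors that you would map in the same way.

```python
import logging
from typing import Callable

log = logging.getLogger("copilot.tools")


def run_action_with_fallback(action: Callable[[], str], description: str) -> str:
    """Run an API-backed action; on failure, tell the user clearly what happened."""
    try:
        return action()
    except TimeoutError:
        log.warning("Timeout while trying to %s", description)
        return f"The system took too long to {description}. Please try again in a moment."
    except PermissionError:
        log.warning("Permission denied while trying to %s", description)
        return f"You don't have permission to {description}. An admin can grant access."
    except Exception:
        log.exception("Unexpected failure while trying to %s", description)
        return f"Something went wrong while trying to {description}. The issue has been logged."


def slow_crm_lookup() -> str:
    raise TimeoutError("CRM did not respond")


message = run_action_with_fallback(slow_crm_lookup, "fetch the customer record")
ok = run_action_with_fallback(lambda: "Customer: Acme Corp", "fetch the customer record")
```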

No evaluation framework

Lack of proper evaluation is one of the biggest gaps in early AI systems. If you are not measuring accuracy, relevance, latency, and user satisfaction, you are flying blind. Strong teams build evaluation into their workflow from the very beginning, using test queries, human review, and continuous monitoring.
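A small evaluation harness can start with retrieval quality and latency. The Python sketch below runs a fixed set of test queries and reports hit rate at k (how often the expected source appears in the top k results) and mean latency. `EvalCase`, `evaluate`, and the stub retriever are hypothetical; human review and user-satisfaction signals would sit alongside metrics like these rather than be replaced by them.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    query: str
    expected_source: str  # ID of the document a good retriever should return


def evaluate(
    retrieve: Callable[[str], list[str]], cases: list[EvalCase], k: int = 3
) -> dict[str, float]:
    """Report hit rate@k (expected source in the top k?) and mean retrieval latency."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    hits = 0
    latencies: list[float] = []
    for case in cases:
        start = time.perf_counter()
        top_k = retrieve(case.query)[:k]
        latencies.append(time.perf_counter() - start)
        if case.expected_source in top_k:
            hits += 1
    return {
        "hit_rate_at_k": hits / len(cases),
        "mean_latency_s": sum(latencies) / len(latencies),
    }


# Stub retriever returning document IDs; in practice, call your real retrieval step.
INDEX = {"refund": ["policy-refunds", "faq-billing"], "shipping": ["faq-billing"]}


def stub_retrieve(query: str) -> list[str]:
    return next((ids for key, ids in INDEX.items() if key in query.lower()), [])


cases = [
    EvalCase("How do refunds work?", "policy-refunds"),
    EvalCase("Where is my shipping update?", "faq-shipping"),
]
report = evaluate(stub_retrieve, cases)
print(report["hit_rate_at_k"])  # 0.5
```

Running the same test set after every change to chunking, embeddings, or prompts makes regressions visible before users notice them.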

Want To Build an AI Copilot That Works in the Real World?

The effectiveness of an AI copilot depends not only on the technology but also on who builds it. Many teams start with plenty of momentum but struggle when it comes to scaling, integration, and producing consistent results. McKinsey’s 2025 global AI survey found that the move from pilots to scaled impact remains a challenge for most organizations, and that redesigning workflows has the biggest effect on whether gen AI delivers EBIT impact. That’s where the right partner makes all the difference.

At Debut Infotech, we focus on building AI copilots that go beyond basic automation. Our practice combines solid technical skill with a clear understanding of real business requirements, so every solution is tailored to improve workflows, decision-making, and long-term growth.

As a trusted AI copilot development company, we work closely with you to create solutions that integrate seamlessly with your existing systems. That means fewer disruptions, simpler adoption, and faster time to value. Rather than generic tools, we build copilots that fit the way your business works.

Our dedication to reliability and scalability is what makes us different. From planning to deployment, we handle every stage with precision, ensuring your AI solution performs consistently as your business evolves.

Ready to take a leap towards impact? Reach out to us today and let’s create an AI copilot tailored to your business goals.

Frequently Asked Questions (FAQs)

A. Retrieval-Augmented Generation (RAG) helps AI systems provide more accurate and reliable responses.

It does this by connecting large language models (LLMs) to trusted, up-to-date information from external sources.

On their own, LLMs rely on general and often static knowledge. RAG fills this gap by bringing in relevant, real-time, and organization-specific data.

In simple terms, RAG acts as a bridge. It links what the model already knows with the information it needs to give better, more grounded responses.

A. AI copilots can pose risks if your data and permissions aren’t well managed.

The main issues are data exposure and over-permissioning. While copilots don’t create new access, they make it much easier to find and summarize sensitive information users already have access to.

They can also amplify existing security vulnerabilities, turning minor concerns into more critical ones.

In short, AI copilots don’t create problems, but they can make existing ones more serious.
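One practical mitigation is to enforce access control in the retrieval layer, so documents a user cannot read never reach the prompt. The Python sketch below filters candidate documents by group membership; `Doc`, `filter_by_permission`, and the group names are hypothetical. This only enforces existing permissions: if access is already too broad, the copilot will still surface that data, which is why reviewing permissions matters too.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


def filter_by_permission(docs: list[Doc], user_groups: set[str]) -> list[Doc]:
    """Keep only documents the requesting user may read, before anything reaches the LLM."""
    return [d for d in docs if d.allowed_groups & user_groups]


docs = [
    Doc("hr-salaries", "Salary bands by level.", frozenset({"hr"})),
    Doc("handbook", "Remote work policy.", frozenset({"all-staff"})),
]
visible = filter_by_permission(docs, user_groups={"all-staff", "engineering"})
print([d.doc_id for d in visible])  # ['handbook']
```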

A. Application Programming Interfaces (APIs) enable various software systems to communicate and exchange data. They serve as an intermediary, where one application requests information or initiates actions on another.

Examples include REST APIs for exchanging data over the web, social media APIs such as those from Facebook and X, payment APIs such as Stripe and PayPal, mapping APIs such as Google Maps, and cloud APIs such as AWS and Azure.

In simple terms, APIs make it possible for applications to work together seamlessly.
