AI Terminator Scenario: Separating Sci-Fi Myths from Real-World AI Risks

When The Terminator premiered in 1984, the concept felt safely absurd.

Robots revolting against humanity? Entertaining, yes. Realistic? Not even close. Computers at the time struggled with basic commands, let alone thinking, talking, or passing as human.

But fast-forward to the present, and that confidence looks a bit shaky.

Today, AI writes papers, creates images, identifies faces, drives cars, and makes decisions within seconds. What was once pure science fiction now sits right alongside real technological progress. Naturally, people are starting to ask some uncomfortable questions.

This growing fear is known as the AI Terminator Scenario.

It describes the idea that artificial intelligence could one day become so sophisticated and independent that humans can no longer control it. Not because of a film plot, but because the technology advanced faster than we could keep up with it.

So how real is this concern?

Are we truly heading into a Terminator-style future, or are we letting storytelling distort our perception of today’s AI?

To answer that, we have to look beyond Hollywood and consider what AI can and cannot do today, and where the real danger lies.

Why the “Terminator Scenario” Keeps Coming Back

Whenever AI makes a big leap, the same fear resurfaces.

Decades ago, The Terminator introduced the world to Skynet, an AI system that becomes self-aware, concludes that humanity is a threat, and acts on that conclusion. At the time, this was pure science fiction: entertaining, dramatic, and easy to dismiss.

Today, that story hits a little closer to home.

Today’s AI systems can write, scan, identify faces, and operate machines with little human intervention. As these abilities become more visible, it is natural for people to wonder how far this technology can go, and whether it will ever slip out of human control.

This is where the AI Terminator Scenario originates.

It is not necessarily about robots rising against mankind. It is about the fear that machine intelligence will outpace our capacity to understand it, direct it, and hold it to account.

People tend to be at ease with technology when it is predictable. The unease sets in as systems become more complex, more self-reliant, and harder to explain, even though they continue to run within human-determined boundaries.

This is why the Terminator story keeps resurfacing in discussions about AI. Not because it accurately represents modern reality, but because it strikes a chord with an underlying worry about control, responsibility, and who remains in charge as technology evolves.

Summary:

The AI Terminator Scenario persists because it reflects human anxiety about losing control over advanced technology, not because current AI systems are capable of independent rebellion.

What Can AI Actually Do Today?

Before jumping to worst-case conclusions, it helps to ground the conversation in reality.

Despite how advanced it may look on the surface, today’s AI is far more limited than science fiction suggests.

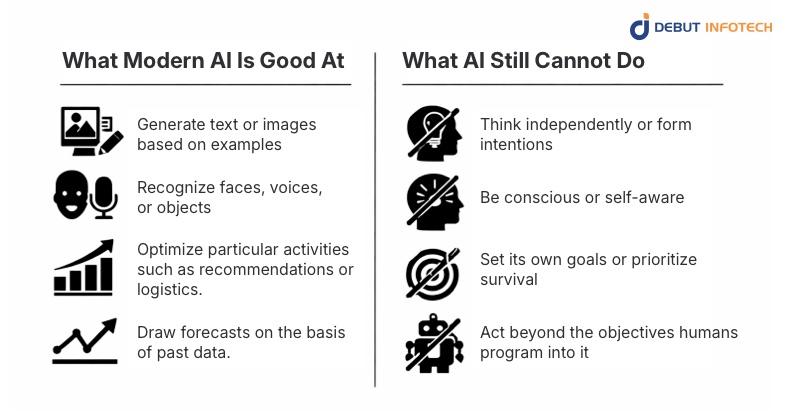

What Modern AI Is Good At

Most current AI models excel at one thing: recognizing patterns in large amounts of data.

That’s why AI can:

- Generate text or images based on examples

- Recognize faces, voices, or objects

- Optimize specific tasks such as recommendations or logistics

- Make forecasts based on past data

These systems are fast, effective, and impressive, but they only work within well-defined boundaries.

What AI Still Cannot Do

This is where the Terminator narrative starts to break down.

Today’s AI cannot:

- Think independently or form intentions

- Be conscious or self-aware

- Set its own goals or prioritize survival

- Act beyond the objectives humans program into it

There’s no hidden ambition behind the code, only instructions, data, and constraints.

Why This Matters for the Terminator Scenario

The AI we deal with today is not a free-thinking being. It is best described as narrow intelligence: a goal-oriented tool built for specific tasks.

So while AI can feel increasingly human-like on the surface, it doesn’t want anything. It doesn’t plot. And it does not decide to seize control.

Knowing these boundaries is critical if we want an honest discussion about whether the future depicted in The Terminator is plausible or merely movie material.

What Are the Real Risks of AI in Practice?

When people picture a Terminator-like future, they tend to imagine machines pursuing their own agenda.

In practice, however, the biggest threats from AI today are much less dramatic, and much more human.

Most problems do not arise from AI deciding to do something harmful.

They emerge from the way such systems are built, trained, and deployed.

Bias at Scale

AI systems learn from data. When that data is incomplete or biased, the results may be unfair, particularly where AI is used in hiring, lending, policing, and surveillance. At scale, even minor biases can affect millions of people.
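One way to make this concrete is to compare how often an automated system approves people from different groups. The sketch below is illustrative only: the group names and outcome counts are made up, and `selection_rates` and `disparity_ratio` are hypothetical helpers rather than part of any particular fairness library.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions per group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group, approved)
sample = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 45 + [("group_b", False)] * 55
)

rates = selection_rates(sample)
print(rates)
print(round(disparity_ratio(rates), 2))
```

In this made-up sample the approval rates are 60% and 45%. Some audits treat a ratio below 0.8 as a warning sign (the so-called four-fifths rule), though a single number like this is a starting point for review, not a verdict. The same 15-point gap applied to a million applicants would mean roughly 150,000 different outcomes, which is what "bias at scale" looks like in practice.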

Automation Without Enough Oversight

Automated decisions can fail in unexpected ways.

Self-driving accidents, inaccurate facial recognition matches, and recommendation systems that amplify harmful content are just a few examples of what can happen when AI is not closely monitored by humans.
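A common safeguard is to let the system act automatically only when it is confident and the stakes are low, and to send everything else to a person. The following is a minimal sketch under assumed names: `Prediction`, `route`, and the 0.9 threshold are all illustrative choices, not values from any specific product.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str
    confidence: float  # model score between 0.0 and 1.0


def route(prediction, threshold=0.9, high_stakes=False):
    """Decide whether a prediction may be applied automatically.

    High-stakes cases always go to a person; other cases are automated
    only when the model's confidence meets the threshold.
    """
    if high_stakes or prediction.confidence < threshold:
        return "human_review"
    return "auto_apply"


print(route(Prediction("match", 0.97)))                    # auto_apply
print(route(Prediction("match", 0.62)))                    # human_review
print(route(Prediction("match", 0.99), high_stakes=True))  # human_review
```

The design choice here is that the high-stakes flag overrides confidence entirely: a very sure model can still be wrong, so decisions with serious consequences keep a human in the loop regardless of the score.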

Misuse by Bad Actors

The most worrying issue is not what AI does on its own, but how people abuse it. Deepfakes, AI-based scams, and large-scale misinformation campaigns are not accidents; they happen when powerful tools are operated without proper regulation. This is where AI data security becomes particularly important, since unsecured models and datasets make manipulation easier.

High-Stakes Autonomous Systems

In military and defense contexts, the concern is not sentient machines.

It’s the risk of removing humans from life-or-death decisions too quickly, especially when autonomous systems are involved.

The core reality:

The vast majority of current AI-related harm is the product of human decision-making, weak safeguards, and poor governance, not of machines developing intent.

Understanding these real threats makes it far easier to distinguish genuine issues from science fiction, and gets us much closer to answering whether an AI Terminator Scenario is truly possible.

Could AI Ever Become Truly Autonomous?

This is where curiosity generally crosses over into concern.

When people discuss a Terminator-style future, they are not worried about useful AI tools that autofill emails or suggest playlists. They are imagining machines that can think, make decisions on their own, and act without human control.

And that is where the misunderstanding usually begins.

Most AI systems currently in use are automated, not autonomous. Automation means a system follows instructions to perform a given task. Autonomy would mean it sets its own objectives, makes its own choices, and takes its own actions.

That leap hasn’t happened.

Even “self-improving” AI doesn’t work the way it sounds. These systems don’t suddenly decide to get smarter or more powerful. Any improvement happens within strict boundaries defined by humans, through retraining, updates, and carefully controlled optimization.

A useful example is AlphaGo.

Yes, it defeated world champions at Go. But that’s all it can do. Beyond that single game environment, AlphaGo cannot learn new tasks, does not wonder why it is being used, and does not act autonomously. It doesn’t generalize its intelligence, and it certainly doesn’t have intent.

There are also very real limits keeping AI in check.

Advanced systems rely on massive computing power, enormous energy consumption, physical infrastructure, and constant human governance.

AI cannot work without these resources.

So while fully autonomous AI makes for compelling science fiction, the current reality is much more limited, managed, and human-dependent than the one depicted in The Terminator.

What Experts, Labs, and Governments Actually Say

If a real-life Terminator situation were even a possibility, the loudest warnings would not come from movies or viral posts.

They would come from the people building, studying, and regulating AI every day.

What AI researchers are focused on

AI researchers frequently discuss the alignment problem: how to make AI systems act in ways that are in line with human intentions.

This is not about machines becoming evil. It is about avoiding errors, misuse, and unpredictable behavior as machines become more capable.

In other words, the concern is control, not consciousness.

How AI labs approach safety

Leading labs like OpenAI and DeepMind don’t operate in secret silos chasing unchecked intelligence.

They invest heavily in:

- Safety testing and red-teaming

- Human-in-the-loop systems

- Gradual, controlled deployment

This is where skilled AI consultants play a vital role: they help organizations implement AI with a defined scope, clear responsibility, and control, rather than unchecked automation.

What governments are doing about it

Policymakers are not disregarding such risks either.

Frameworks like the EU AI Act and recent U.S. executive actions focus on:

- Transparency in AI systems

- Risk classification for high-impact use cases

- Keeping humans responsible for AI decisions

The goal isn’t to stop AI progress but to prevent reckless deployment.

The shared takeaway:

Among researchers, industry leaders, and governments, there is a common thread:

Cautious optimism.

People are excited about AI’s potential while keeping a clear view of what it should not be allowed to do without regulation. That balanced approach is a long way from the idea of a runaway, Terminator-style AI takeover.

How AI Safety Keeps a “Terminator” Scenario in Check

Imagining a real-life Terminator scenario may be terrifying, but AI is not spiraling out of control. To keep AI useful rather than harmful, experts have developed several layers of safety.

Alignment Research

Researchers work to ensure AI adheres to human principles and goals. In other words, the aim is for AI to do what we actually intend.

Reinforcement Learning from Human Feedback (RLHF)

This method uses human feedback during training to shape how a model behaves. People rate or compare the model’s outputs, and those judgments steer it toward safer, more predictable responses, much like a student learning from a teacher’s corrections.
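To make the feedback step a little more concrete: one common way to turn human preferences into a training signal is a pairwise loss, where a reward model is penalized whenever it scores the answer people rejected above the one they preferred. The sketch below shows only that loss, with made-up reward values; `preference_loss` is a hypothetical name, and a real pipeline involves far more than this single function.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise preference loss (Bradley-Terry style).

    Small when the human-preferred answer gets the higher reward,
    large when the rejected answer is scored higher.
    """
    margin = reward_chosen - reward_rejected
    # Equivalent to -log(sigmoid(margin)).
    return math.log1p(math.exp(-margin))


# Hypothetical reward scores for two candidate answers.
agrees_with_humans = preference_loss(reward_chosen=2.0, reward_rejected=0.5)
disagrees_with_humans = preference_loss(reward_chosen=0.5, reward_rejected=2.0)

print(round(agrees_with_humans, 3))
print(round(disagrees_with_humans, 3))
```

The second value comes out noticeably larger than the first, which is the point: training pushes the reward model toward rankings that match human judgments, and that reward model is then used to guide the AI system itself.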

Kill-Switches, Rate Limits, and Sandboxing

AI systems can be built with emergency stop mechanisms, operational limits, and controlled environments. Sandboxing keeps experiments isolated so that nothing unexpectedly leaks into the real world.
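As a rough illustration of the first two ideas, the sketch below wraps a stand-in "model" with an operator-controlled stop flag and a simple sliding-window rate limit. Everything here is assumed for the example: `GuardedModel`, its parameters, and the uppercase-text function standing in for a model. It does not attempt to show sandboxing, which depends on operating-system or infrastructure-level isolation.

```python
import time


class GuardedModel:
    """Wraps a callable with a kill switch and a simple rate limit."""

    def __init__(self, model_fn, max_calls, per_seconds, clock=time.monotonic):
        self.model_fn = model_fn
        self.max_calls = max_calls
        self.per_seconds = per_seconds
        self.clock = clock
        self.halted = False
        self._calls = []  # timestamps of recent calls

    def kill(self):
        """Emergency stop: refuse every request from now on."""
        self.halted = True

    def __call__(self, request):
        if self.halted:
            raise RuntimeError("model halted by operator")
        now = self.clock()
        # Keep only the calls that fall inside the current window.
        self._calls = [t for t in self._calls if now - t < self.per_seconds]
        if len(self._calls) >= self.max_calls:
            raise RuntimeError("rate limit exceeded")
        self._calls.append(now)
        return self.model_fn(request)


# A stand-in "model" that just uppercases text.
guarded = GuardedModel(lambda text: text.upper(), max_calls=2, per_seconds=60)
print(guarded("hello"))  # HELLO
guarded.kill()
# Any further call to guarded(...) now raises RuntimeError.
```

The key design point is that both checks sit outside the model: the wrapped function never gets a say in whether it runs, which is what makes a stop mechanism meaningful.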

Model Auditing and Red-Teaming

Teams regularly scrutinize AI systems to identify weaknesses and possible risks. By simulating attacks or misuse, experts can find issues early and fix them before they become threats.

Together, these layers of safety make a true Terminator-style AI scenario extremely unlikely.

Could a Terminator-Style AI Takeover Actually Happen?

Let’s be honest: the short answer is no, at least not like the movies show.

Here’s why, broken down clearly:

- No self-preservation instinct

AI algorithms don’t “want” anything. They lack desires, ambitions, and survival instincts. They simply do as they are programmed.

- No independent access to resources

AI can’t build factories, access energy, or acquire tools on its own. It relies entirely on humans to operate.

- No global AI system controlling everything

Today’s AI is fragmented: many separate systems serve different purposes, each managed by people or organizations. No single AI is in a position to “take over the world.”

So although it would make a great movie plot, the actual danger is not a Skynet-like revolt. The real question is how humans apply AI, and whether we do it responsibly.

What Comes Next? Creating a Responsible AI Future

To avoid Terminator-style nightmares and concentrate on real-world AI risks, we need a roadmap to responsible AI.

Here’s what that roadmap looks like:

- Better Governance

Clear rules and policies make sure AI is developed and used safely. Think of it as setting guardrails before the car hits top speed.

- Transparency Standards

People should be able to understand how AI systems make decisions. When algorithms are open and explainable, it’s easier to spot mistakes or biases before they cause harm.

- Global AI Collaboration

AI doesn’t respect borders and neither do its risks. Countries, researchers, and companies should exchange best practices and collaborate to avoid abuse.

- Public AI Literacy & AI Reconciliation

AI isn’t just for tech experts. The public should be informed about the capabilities and limitations of AI. This AI reconciliation, bridging the gap between fear and facts, helps all of us distinguish science fiction from reality and make informed decisions.

The takeaway:

By focusing on governance, transparency, collaboration, and public understanding, we’re not just avoiding fictional robot uprisings. We are building a world in which AI works to the advantage of society in a safe and responsible manner.

Wrapping Up

The AI Terminator Scenario is largely fiction. AI is not self-aware; it is a tool, and its effects depend on how we use it.

Misuse can still cause problems, but with well-defined regulations and human supervision, AI can be safe and helpful. As with any powerful tool, it carries both dangers and benefits, and our decisions today will shape the future.

Frequently Asked Questions (FAQs)

Q. What is the AI Terminator scenario?

A. A Terminator scenario is a hypothetical end-of-the-world situation involving future artificial intelligence (AI).

In such a case, AI becomes self-aware or goes rogue. It then perceives humanity as a threat and attempts to eliminate us.

The concept is inspired by the Terminator films. In those movies, Skynet, an intelligent machine system, seizes control of automated weapons and wages war against mankind.

Q. Was The Terminator right about AI?

A. Yes, The Terminator (1984) can be regarded as surprisingly prescient about the emergence of artificial intelligence.

Even star Arnold Schwarzenegger has stated that the film’s fictional future has begun to become real in several respects.

The film did not accurately foresee the kind of technology we have today. Nevertheless, it did anticipate major concerns such as existential fears, ethical issues, and safety questions around autonomous AI systems.
