A Practical Guide to Building AI Agents for Enterprise Workflows Using APIs and LLMs

Building intelligent systems has become a core part of how enterprises operate. Data from McKinsey & Company shows that 65% of organizations already use generative AI in at least one business function, a sharp rise in practical adoption. Enterprise momentum is accelerating as well: one research estimate puts the share of Global 2000 companies running at least one AI workload in production at 78% as of 2026.

This shift explains the growing focus on how to build AI agents enterprise teams can rely on. These systems combine LLM reasoning with API-driven execution, enabling automation that goes beyond analysis into real action.

From orchestrating workflows to integrating with business systems, AI agents are becoming operational tools rather than experimental features.

This guide breaks down the architecture, interaction models, components, and processes required to design, deploy, and scale enterprise-grade AI agents effectively.

Understanding Building AI Agents Using APIs and LLMs

AI agents built with APIs and large language models combine reasoning with real-world execution. The model interprets instructions, plans a path, and triggers external systems through APIs. This setup turns a static model into an active system that can fetch data, update records, or complete workflows without manual steps.

Enterprises use this approach to automate processes that once required multiple tools and human coordination. The result is a system that can think through tasks, take action, and produce structured outputs that align with business operations.

Benefits of Building AI Agents Using APIs and LLMs for Enterprises

1. Autonomous Workflow Execution

AI agents execute complex, multi-step workflows without continuous human input, coordinating tasks across multiple systems through APIs. They interpret objectives, plan sequences, and adapt when conditions change. This reduces delays caused by manual handoffs, improves operational consistency, and ensures processes continue running even during peak demand or off-hours without supervision.

2. Dramatic Reduction in Transaction Costs

AI agents cut operational expenses by automating repetitive, rule-based tasks that typically require human effort. They reduce error rates, minimize rework, and speed up execution cycles. Over time, enterprises benefit from lower staffing overhead for routine operations, while reallocating human resources toward higher-value activities that require judgment and strategic thinking.

3. Breaking Down Data Silos

LLM-powered agents connect isolated systems by accessing and synchronizing data across APIs, enabling consistent information flow between departments. They eliminate bottlenecks caused by fragmented databases and manual data transfers. This unified access improves reporting accuracy, supports better decision-making, and ensures that all systems use the same up-to-date information across the organization.

4. 24/7 High-Fidelity Customer Support

AI agents provide continuous customer support by handling inquiries, retrieving account data, and executing service requests through backend systems. They go beyond scripted responses by performing real actions such as updating records or processing transactions. This improves response quality, reduces wait times, and maintains consistent service levels regardless of time or volume.

5. Infinite Scalability & Elasticity

AI agents scale automatically based on workload, handling fluctuations in demand without requiring additional staffing. They rely on cloud-based infrastructure and API throughput, allowing enterprises to process large volumes of requests simultaneously. This elasticity ensures stable performance during peak periods while maintaining cost efficiency during lower usage intervals.

6. Enhanced Accuracy and Self-Correction

AI agents improve reliability by validating outputs, cross-checking data sources, and retrying failed actions when necessary. They can detect inconsistencies, adjust parameters, and refine results before final delivery. This built-in feedback loop reduces operational errors, strengthens trust in automated systems, and ensures outputs align with business rules and expected outcomes.

The LLM + API Interaction Model

Understanding how LLMs interact with APIs clarifies how agents move from intent to execution. This model defines each stage of decision-making, ensuring that actions are structured, traceable, and aligned with the system’s capabilities.

1. Input & Context

The interaction starts with user input combined with contextual data such as previous conversations, system states, or retrieved knowledge. This context shapes how the LLM interprets intent, ensuring responses and actions align with both the immediate request and broader operational requirements or constraints.

2. Reasoning (The “Think” Step)

The LLM evaluates the input, identifies intent, and determines the sequence of actions required. It maps the problem to available tools, deciding whether to retrieve information, trigger APIs, or request clarification, ensuring decisions are structured and aligned with defined workflows.

3. Action (API Calls)

Based on its reasoning, the agent initiates API calls with structured parameters. These actions may include querying databases, updating records, or triggering external services. Proper formatting and validation ensure that each call is accurate and aligned with the intended operational outcome.

4. Observation

After executing actions, the agent analyzes API responses to determine whether the expected results were achieved. It checks for errors, missing data, or inconsistencies and uses this feedback to decide whether to proceed, retry, or adjust the approach to achieve better outcomes.

5. Final Output

The agent consolidates all processed information and results into a structured response. This output is tailored for clarity and usability, ensuring it meets the needs of users or downstream systems while reflecting the actions taken and the data retrieved during execution.
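
The five stages above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a production design: `fake_llm` is a hypothetical stand-in for a real model call, and `get_order_status` is an invented tool backed by an in-memory dictionary instead of a real API.

```python
from typing import Any, Callable

# Hypothetical tool: in a real agent this would wrap an HTTP API call.
def get_order_status(order_id: str) -> dict[str, Any]:
    orders = {"A100": "shipped", "A200": "processing"}
    if order_id not in orders:
        return {"error": f"unknown order {order_id}"}
    return {"order_id": order_id, "status": orders[order_id]}

TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "get_order_status": get_order_status,
}

def fake_llm(context: list[dict[str, Any]]) -> dict[str, Any]:
    """Stand-in for the model's reasoning step (assumption: a real LLM
    would return a comparable 'call a tool' or 'finish' decision)."""
    last = context[-1]
    if last["role"] == "user":
        # The request ends with an order id, so decide to call the tool.
        order_id = last["content"].split()[-1]
        return {"type": "tool_call", "name": "get_order_status",
                "arguments": {"order_id": order_id}}
    # A tool result is available, so produce the final output.
    result = last["content"]
    if "error" in result:
        return {"type": "final", "content": f"Sorry: {result['error']}"}
    return {"type": "final",
            "content": f"Order {result['order_id']} is {result['status']}."}

def run_agent(user_input: str, max_steps: int = 5) -> str:
    context = [{"role": "user", "content": user_input}]    # 1. input & context
    for _ in range(max_steps):
        decision = fake_llm(context)                        # 2. reasoning
        if decision["type"] == "final":
            return decision["content"]                      # 5. final output
        tool = TOOLS[decision["name"]]
        observation = tool(**decision["arguments"])         # 3. action
        context.append({"role": "tool", "content": observation})  # 4. observation
    return "Stopped: step limit reached."
```

With these stubs, `run_agent("Where is order A100")` returns `"Order A100 is shipped."`. The `max_steps` cap is a deliberate design choice so the loop always terminates.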

What Components Are Required to Build AI Agents with LLMs and API Integrations?

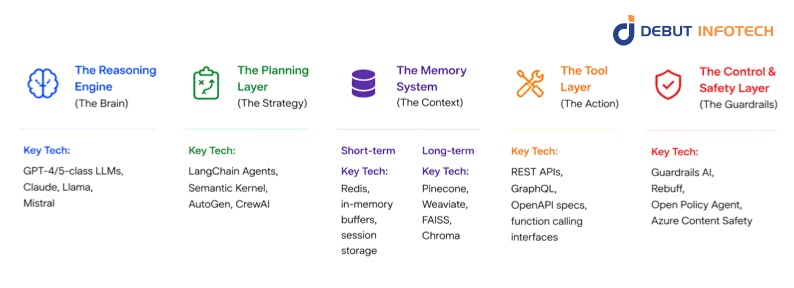

Building reliable AI agents with APIs requires a structured architecture where each component handles a specific responsibility, from reasoning and planning to execution, memory, and safety, ensuring consistent and controlled system behavior.

1. The Reasoning Engine (The Brain)

The reasoning engine interprets inputs, maps intent to actions, and generates structured decisions. It selects tools, constructs API calls, and adapts responses based on context and constraints. This layer of AI agent workflow design drives how the agent thinks, ensuring outputs remain aligned with defined objectives, policies, and expected operational behavior.

Key Tech: GPT-4/5-class LLMs, Claude, Llama, Mistral

2. The Planning Layer (The Strategy)

The planning layer breaks complex tasks into ordered steps, defines dependencies, and determines execution paths. It evaluates multiple approaches and selects the most efficient route, including fallback options when failures occur. This ensures workflows are structured, predictable, and capable of handling dynamic conditions during execution.

Key Tech: LangChain Agents, Semantic Kernel, AutoGen, CrewAI

3. The Memory System (The Context)

The memory system stores and retrieves contextual information across interactions, enabling continuity, personalization, and informed decision-making, while supporting both real-time session awareness and long-term knowledge retention for consistent agent performance.

I. Short-term

Short-term memory maintains session-level context, including recent inputs, outputs, and intermediate steps. It ensures continuity within a single interaction, allowing the agent to reference prior exchanges and maintain coherence while executing multi-step workflows without losing track of immediate objectives.

Key Tech: Redis, in-memory buffers, session storage

II. Long-term

Long-term memory stores persistent data such as user preferences, historical interactions, and domain knowledge. It supports personalization, improves decision-making, and enables the retrieval of relevant information across sessions, helping the agent deliver more accurate and context-aware outputs over time.

Key Tech: Pinecone, Weaviate, FAISS, Chroma

4. The Tool Layer (The Action)

The tool layer connects the agent to external systems through APIs, enabling real-world actions such as data retrieval, updates, or service execution. It defines the available capabilities and ensures each action is performed using structured inputs, allowing the agent to interact reliably with enterprise infrastructure.

Key Tech: REST APIs, GraphQL, OpenAPI specs, function calling interfaces

5. The Control & Safety Layer (The Guardrails)

The control and safety layer enforces rules, validates outputs, and restricts unsafe actions. It ensures compliance with policies, prevents misuse of APIs, and introduces checks for sensitive operations. This layer is essential for maintaining trust, security, and reliability in enterprise-grade AI agent deployments.

Key Tech: Guardrails AI, Rebuff, Open Policy Agent, Azure Content Safety

Summary Table: The 5-Pillar Model

| Pillar | Focus | Why it’s needed |
| --- | --- | --- |
| Reasoning | Intelligence | To understand what to do |
| Planning | Logic | To figure out how to do it |
| Memory | Context | To know what happened before |
| Tooling | Execution | To actually do the work |
| Control | Safety | To ensure it is done correctly |

Function Calling & API Orchestration

Function calling and orchestration define how API-based AI agents execute actions reliably across systems. They ensure API interactions are structured, coordinated, and adaptable, enabling efficient handling of both simple and complex workflows.

1. Function Calling

Function calling allows the LLM to trigger predefined operations with structured inputs. It ensures API interactions are precise and predictable.
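
One way to picture function calling is a registry plus a dispatcher. The sketch below assumes the model emits a JSON object with `name` and `arguments` keys; that envelope, the `tool` decorator, and `create_ticket` are illustrative names, not any specific provider's API.

```python
import json
from typing import Any, Callable

REGISTRY: dict[str, Callable[..., Any]] = {}

def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the model can call it by name."""
    REGISTRY[fn.__name__] = fn
    return fn

@tool
def create_ticket(title: str, priority: str = "normal") -> dict[str, str]:
    # Hypothetical operation; a real version would call a ticketing API.
    return {"id": "T-1", "title": title, "priority": priority}

def dispatch(model_output: str) -> Any:
    """Parse a model-produced call such as
    {"name": "...", "arguments": {...}} and run the matching function."""
    call = json.loads(model_output)
    name = call.get("name")
    if name not in REGISTRY:
        # Only predefined operations can run; anything else is rejected.
        raise ValueError(f"unknown tool: {name}")
    return REGISTRY[name](**call.get("arguments", {}))
```

Because the dispatcher only runs registered functions, the model cannot trigger arbitrary operations, which is what makes the interaction predictable.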

2. Orchestration Patterns

I. Sequential

Tasks are executed step by step, where each action depends on the previous result. This suits workflows with clear dependencies.

II. Parallel

Multiple API calls run simultaneously. This improves efficiency when tasks are independent.

III. Self-Correction

The agent evaluates outcomes and retries or adjusts actions when errors occur, improving reliability.

Integration with Enterprise Systems

1. CRM Integration

AI agents integrate with CRM systems to access customer profiles, track interactions, and update records in real time. This enables automated follow-ups, personalized responses, and accurate data management, ensuring sales and support teams operate with consistent and up-to-date customer information across all touchpoints.

2. ERP & Logistics

Integration with ERP and logistics systems allows AI agents to manage inventory, process orders, and coordinate supply chain activities. They can retrieve stock levels, trigger shipments, and update order statuses, ensuring operations remain synchronized and reducing delays caused by manual coordination between departments.

3. Internal API Gateways

Internal API gateways provide a structured interface for AI agents to access enterprise services securely. They standardize communication across systems, enforce policies, and simplify AI agent integration. This ensures agents interact with internal infrastructure in a controlled manner while maintaining consistency and reliability across operations.

4. Authentication

Authentication mechanisms ensure that AI agents perform only authorized actions when interacting with enterprise systems. Techniques such as token-based access, role-based permissions, and secure key management protect sensitive data while maintaining seamless access to required services within defined security boundaries.
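
Role-based permissions can be enforced with a check that runs before every tool call. The sketch below is illustrative only: the scope names, the `REQUIRED_SCOPE` mapping, and `AgentIdentity` are invented for this example, and a real deployment would derive scopes from the organization's identity provider.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class AgentIdentity:
    name: str
    scopes: frozenset[str] = field(default_factory=frozenset)

# Hypothetical mapping from tool name to the scope it requires.
REQUIRED_SCOPE = {
    "read_customer": "crm:read",
    "update_customer": "crm:write",
    "issue_refund": "payments:write",
}

def authorize(agent: AgentIdentity, tool_name: str) -> None:
    """Raise PermissionError unless the agent holds the tool's scope."""
    required = REQUIRED_SCOPE.get(tool_name)
    if required is None:
        raise PermissionError(f"{tool_name} is not a registered tool")
    if required not in agent.scopes:
        raise PermissionError(f"{agent.name} lacks scope {required}")

# A support agent that may read and update CRM records but not move money.
support_agent = AgentIdentity("support-bot", frozenset({"crm:read", "crm:write"}))
```

Calling `authorize(support_agent, "update_customer")` passes silently, while `authorize(support_agent, "issue_refund")` raises `PermissionError`. Denying unregistered tools by default keeps the boundary explicit.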

Scalability, Monitoring, and Production

Deploying AI agents at scale requires careful planning around performance, cost, and reliability. Here’s how systems are managed, monitored, and optimized to ensure consistent operation in production environments.

I. Rate Limiting & Cost Control

Rate limiting manages how frequently APIs and models are called, preventing overload and controlling operational costs. It ensures stable performance during high demand while avoiding unnecessary expenses from excessive requests. Proper limits help maintain system reliability and align usage with budget constraints in production environments.
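
A token bucket is one common way to implement this kind of limit. The class below is a minimal single-process sketch; the parameter names are illustrative, and a distributed deployment would typically need shared state (for example in a cache) instead of in-memory counters.

```python
import time

class TokenBucket:
    """Allows bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock               # injectable so tests can control time
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` tokens if the call may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill based on elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

The `cost` argument lets expensive model calls consume more of the budget than cheap API lookups, which ties the limit to spend as well as request count.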

II. Observability

Observability provides visibility into agent behavior, including decision paths, API calls, and system performance. It helps teams detect failures, analyze inefficiencies, and improve reliability.

Detailed logs and traces allow developers and AI consultants to understand how outputs are generated and ensure the system behaves as expected under varying conditions.

III. Human-in-the-Loop (HITL)

Human-in-the-loop systems introduce manual oversight for critical or sensitive actions. Agents can pause for approval, escalate decisions, or request clarification when uncertainty arises. This ensures accountability, reduces risk, and maintains control over operations where automated decisions alone may not meet business or compliance requirements.

IV. Evaluation (Evals)

Evaluations (evals) measure the accuracy, reliability, and consistency of AI agent outputs across different scenarios. They test performance under real-world conditions, identify weaknesses, and guide improvements. Continuous evaluation ensures the agent maintains quality standards and adapts effectively as workflows, data, and requirements evolve.

What Is the Process for Building Enterprise AI Agents Using APIs and LLMs?

Building enterprise AI agents follows a structured process that ensures clarity, reliability, and scalability. Each step focuses on defining capabilities, designing behavior, and validating performance before deployment into production environments. Here’s how to build an AI Agent with APIs and LLMs:

Step 1: Define the Scope and Toolset

Establish clear boundaries, objectives, and capabilities for the agent, ensuring alignment with business goals and identifying the required APIs, data sources, and model capabilities for execution.

I. Identify the Objective

Define the exact problem the agent will solve, expected outcomes, and measurable success criteria. This ensures AI agent development remains focused and avoids unnecessary complexity during implementation and scaling stages.

II. Inventory APIs

Catalog all available APIs, including endpoints, authentication methods, rate limits, and capabilities. This helps determine feasible actions and ensures the agent can interact reliably with existing enterprise systems.

III. Select the LLM

Choose a model based on accuracy, latency, cost, and compatibility with required tasks. Consider context length, reasoning ability, and integration support to ensure consistent performance in production environments.

Step 2: Create the Tool Definitions (JSON Schema)

Tool definitions structure how the agent interacts with APIs by specifying functions, parameters, and expected outputs, ensuring consistent execution and reducing ambiguity during model-driven decision-making and action selection processes.

I. Write Descriptions

Provide clear, concise descriptions of each tool, explaining when and how to use it. This helps the model select appropriate actions and reduces the likelihood of incorrect or irrelevant API calls.

II. Define Parameters

Specify required and optional parameters, including data types and constraints. Structured parameter definitions ensure accurate API requests and prevent errors caused by missing or incorrectly formatted inputs during execution.
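
As a rough sketch, a tool definition in JSON Schema style and a matching argument check might look like this. The tool name and fields are hypothetical, the outer envelope varies by LLM provider, and the validator covers only the small subset of JSON Schema used here (a full validator library would be the usual choice in production).

```python
from typing import Any

# Hypothetical tool definition; field names are invented for illustration.
GET_STOCK_LEVEL = {
    "name": "get_stock_level",
    "description": "Look up current stock for one SKU in one warehouse. "
                   "Use only when the user asks about availability.",
    "parameters": {
        "type": "object",
        "properties": {
            "sku": {"type": "string", "description": "Product SKU, e.g. 'SKU-1042'"},
            "warehouse": {"type": "string", "enum": ["east", "west"]},
            "include_reserved": {"type": "boolean"},
        },
        "required": ["sku", "warehouse"],
    },
}

_PY_TYPES = {"string": str, "boolean": bool, "integer": int, "number": (int, float)}

def validate_arguments(tool: dict[str, Any], args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call is acceptable."""
    schema = tool["parameters"]
    props = schema["properties"]
    errors = [f"missing required parameter: {p}"
              for p in schema.get("required", []) if p not in args]
    for key, value in args.items():
        if key not in props:
            errors.append(f"unexpected parameter: {key}")
            continue
        spec = props[key]
        if not isinstance(value, _PY_TYPES[spec["type"]]):
            errors.append(f"{key} must be of type {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{key} must be one of {spec['enum']}")
    return errors
```

Returning a list of readable problems, instead of raising on the first one, makes it easy to feed the errors back to the model so it can correct the call.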

Step 3: Design the Reasoning Loop

The reasoning loop defines how the agent processes input, plans actions, executes tasks, and evaluates results. A well-designed loop ensures consistent behavior, adaptability, and efficient handling of multi-step workflows.

I. Pick a Framework

Select a framework that supports AI agent orchestration, memory, and tool integration. This simplifies development, provides reusable components, and ensures scalability as the system evolves and grows in complexity.

II. System Prompting

Design system prompts that guide behavior, enforce constraints, and define response formats. Strong prompting ensures predictable outputs, aligns actions with business rules, and improves the agent’s overall reliability.

Step 4: Implement Memory and State Management

This step ensures the agent can retain context across interactions, manage session data, and retrieve relevant information when needed, enabling continuity, personalization, and more accurate decision-making during complex workflows.

I. Short-term Threading

Maintain session-level context by tracking recent interactions and intermediate steps. This allows the agent to handle multi-turn conversations and execute tasks without losing important details during ongoing processes.

II. Persistent State

Store long-term data such as user preferences, historical actions, and system states. Persistent storage ensures continuity across sessions and supports more informed and personalized responses over time.

III. Vector Retrieval (RAG)

Use retrieval systems to access relevant documents or data from large datasets. This enhances accuracy by grounding responses in real information, reducing reliance on model assumptions or incomplete knowledge.
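
The session buffer and the retrieval step can be illustrated with standard-library code. This sketch makes a strong simplifying assumption: a bag-of-words count vector stands in for a real embedding model, and a plain list stands in for a vector database. The class and function names are invented for the example.

```python
import math
import re
from collections import Counter, deque

class ShortTermMemory:
    """Keeps only the most recent `max_turns` messages of a session."""

    def __init__(self, max_turns: int = 4):
        self.turns = deque(maxlen=max_turns)   # oldest turns fall off automatically

    def add(self, role: str, content: str) -> None:
        self.turns.append((role, content))

def _vector(text: str) -> Counter:
    # Stand-in for an embedding: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = _vector(query)
    ranked = sorted(documents, key=lambda d: _cosine(q, _vector(d)), reverse=True)
    return ranked[:k]

DOCS = [
    "Refunds are processed within 5 business days.",
    "Enterprise plans include single sign-on.",
    "Shipping to Canada takes 7 to 10 days.",
]
```

Here `retrieve("How long do refunds take?", DOCS)` returns the refund policy line, which would then be placed in the prompt instead of the whole document set.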

Step 5: Build Guardrails and Approval Flows

Guardrails define rules, restrictions, and validation checks that ensure safe and compliant operation. Approval flows introduce oversight for sensitive actions, balancing automation with control in enterprise environments.

I. Human-in-the-Loop (HITL)

Incorporate human review for high-risk or uncertain actions. This ensures critical decisions are validated, reduces the risk of errors, and maintains accountability in workflows that require oversight or regulatory compliance.
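
An approval gate can be a thin wrapper around tool execution. In the sketch below, the threshold, the tool names, and the in-memory `approval_queue` are all assumptions for illustration; a real system would persist pending actions and notify a reviewer.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional

@dataclass
class PendingAction:
    tool: str
    arguments: dict[str, Any]
    reason: str

# Hypothetical policy: refunds above this amount need a human decision.
REFUND_APPROVAL_THRESHOLD = 500.0

def approval_reason(tool: str, arguments: dict[str, Any]) -> Optional[str]:
    """Return why a human must approve this action, or None if it may run."""
    if tool == "issue_refund" and arguments.get("amount", 0) > REFUND_APPROVAL_THRESHOLD:
        return "refund exceeds threshold"
    if tool == "delete_account":
        return "destructive action"
    return None

def execute(tool: str, arguments: dict[str, Any], run: Callable[..., Any],
            approval_queue: list[PendingAction]) -> dict[str, Any]:
    """Run low-risk actions immediately; park high-risk ones for review."""
    reason = approval_reason(tool, arguments)
    if reason:
        approval_queue.append(PendingAction(tool, arguments, reason))
        return {"status": "pending_approval", "reason": reason}
    return {"status": "done", "result": run(**arguments)}
```

Keeping the policy in one function (`approval_reason`) makes it straightforward to audit which actions require oversight.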

II. Input/Output Filtering

Filter inputs and outputs to remove harmful, irrelevant, or invalid data. This improves response quality, enforces compliance policies, and prevents the agent from generating or acting on unsafe information.
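
A very small example of both directions of filtering follows. The patterns are deliberately naive and are assumptions made for illustration: real deployments usually rely on dedicated PII detection and content-safety services, and these regular expressions will miss many cases.

```python
import re

# Naive patterns for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD = re.compile(r"\b(?:\d[ -]?){13,16}\b")

def check_input(text: str, max_chars: int = 2000) -> list[str]:
    """Input filter: return reasons to reject the request (empty list = accept)."""
    problems = []
    if not text.strip():
        problems.append("empty input")
    if len(text) > max_chars:
        problems.append("input too long")
    return problems

def redact(text: str) -> str:
    """Output filter: mask email addresses and card-like digit runs."""
    text = EMAIL.sub("[email]", text)
    return CARD.sub("[card]", text)
```

For instance, `redact("Contact jane.doe@example.com, card 4111 1111 1111 1111.")` yields `"Contact [email], card [card]."`.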

Step 6: Testing, Tracing, and Deployment

This stage validates system performance, ensures reliability, and prepares the agent for production use. It includes testing workflows, monitoring behavior, and deploying in scalable environments with proper infrastructure support.

I. Observability Tracing

Track decision paths, API calls, and system performance in detail. Observability helps identify issues, optimize AI agent pipelines, and ensure transparency in how the agent processes inputs and generates outputs.
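
Tracing can start as a decorator that records one span per tool call. This is a simplified sketch: the in-memory `TRACE` list and `call_inventory_api` are hypothetical, and production systems would normally export spans to a tracing backend instead.

```python
import functools
import time
import uuid

TRACE: list[dict] = []   # in-memory span store, for illustration only

def traced(fn):
    """Record name, arguments, status, and duration of each call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        span = {"span_id": uuid.uuid4().hex[:8], "name": fn.__name__,
                "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
            span["status"] = "ok"
            return result
        except Exception as exc:
            span["status"] = "error"
            span["error"] = repr(exc)
            raise
        finally:
            # Runs on success and failure, so every call leaves a span.
            span["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
            TRACE.append(span)
    return wrapper

@traced
def call_inventory_api(sku: str) -> dict:
    # Hypothetical tool used to demonstrate the decorator.
    if not sku.startswith("SKU-"):
        raise ValueError(f"malformed sku: {sku}")
    return {"sku": sku, "in_stock": 12}
```

Because failed calls are recorded too, the trace shows where a workflow broke as well as what succeeded.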

II. Evaluation Suites (Evals)

Run structured tests across various scenarios to measure accuracy, consistency, and robustness. Evaluation suites help identify weaknesses, guide improvements, and ensure the agent meets defined performance standards before deployment.
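
An evaluation suite can begin as a list of cases and a function that scores the agent against them. The sketch below assumes a simple "output must contain this text" check; `toy_agent` is a hypothetical placeholder for the real agent entry point, and real suites often add graded or model-assisted scoring.

```python
from typing import Callable

EVAL_CASES = [
    {"input": "status of order A100", "must_contain": "shipped"},
    {"input": "status of order A200", "must_contain": "processing"},
    {"input": "status of order Z999", "must_contain": "not found"},
]

def toy_agent(prompt: str) -> str:
    """Hypothetical agent under test; replace with the real entry point."""
    orders = {"A100": "shipped", "A200": "processing"}
    order_id = prompt.split()[-1]
    return f"Order {order_id}: {orders.get(order_id, 'not found')}"

def run_evals(agent: Callable[[str], str], cases: list[dict]) -> dict:
    """Run every case and report how many passed, plus the failing outputs."""
    failures = []
    for case in cases:
        output = agent(case["input"])
        if case["must_contain"] not in output:
            failures.append({"input": case["input"], "output": output})
    return {"total": len(cases),
            "passed": len(cases) - len(failures),
            "failures": failures}
```

Running the same cases on every change turns the suite into a regression check: a drop in `passed` flags a behavior change before deployment.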

III. Containerization

Package the agent and its dependencies into containers for consistent deployment across environments. Containerization ensures scalability, simplifies updates, and supports reliable performance in distributed production systems.

Challenges of Building AI Agents Using APIs and LLMs for Enterprises (And How to Overcome Them)

1. Parameter Hallucination

LLMs can generate incorrect or fabricated parameters when calling APIs, especially when tool definitions are vague or the context is incomplete. This leads to failed requests, unintended actions, or inconsistent outputs. In enterprise environments, even minor parameter errors can disrupt workflows, corrupt data, or trigger incorrect transactions across connected systems.

Solution

Mitigate this by enforcing strict JSON schemas, validating inputs before execution, and using function calling with well-defined constraints. Add runtime checks to confirm parameter accuracy and reject invalid calls. Reinforce tool descriptions with clear usage rules, and implement retry logic with corrected parameters to ensure reliable API interactions and consistent outcomes.

2. Context Window Management

LLMs have limited context windows, which restrict how much information they can process at once. As workflows grow more complex, critical details may be truncated or omitted, leading to incomplete reasoning or incorrect decisions. This becomes problematic in enterprise settings where accuracy depends on large datasets, historical context, and multi-step interactions.

Solution

Use retrieval-augmented generation to fetch only relevant data instead of passing entire datasets. Apply summarization techniques to compress context while preserving key details. Maintain structured memory systems for session and long-term storage, ensuring the agent accesses necessary information efficiently without exceeding context limits or degrading performance.
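
One simple way to stay within a context limit is to keep the system prompt and only the most recent messages that fit a token budget. The sketch below assumes roughly four characters per token, which is a crude heuristic; the model's real tokenizer should be used in practice, and older messages could be summarized instead of dropped.

```python
def estimate_tokens(text: str) -> int:
    # Rough assumption: about 4 characters per token.
    return max(1, len(text) // 4)

def fit_to_budget(system_prompt: str, history: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus the newest messages that fit the budget."""
    remaining = budget - estimate_tokens(system_prompt)
    kept: list[str] = []
    for message in reversed(history):        # walk from newest to oldest
        cost = estimate_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return [system_prompt] + list(reversed(kept))   # restore chronological order
```

Walking the history from newest to oldest means the most recent turns, which usually matter most for the current step, are the last to be cut.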

3. The “Recursive Loop” Trap

AI agents can get stuck in recursive loops, repeatedly calling the same tools or re-evaluating the same logic without reaching a conclusion. This wastes resources, increases latency, and can lead to runaway costs. In production environments, such loops can degrade system performance and disrupt dependent workflows.

Solution

Introduce loop detection mechanisms, set maximum iteration limits, and define clear stopping conditions. Track previous actions to prevent repetition, and implement fallback strategies when progress stalls. Logging and monitoring help identify looping patterns early, allowing developers to refine logic and maintain efficient, predictable execution.
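
Iteration limits and repetition tracking can live in a small guard object that the agent consults before each tool call. The class name and default limits below are illustrative assumptions.

```python
import json

class LoopGuard:
    """Stops a run that exceeds a step limit or repeats the same call too often."""

    def __init__(self, max_steps: int = 10, max_repeats: int = 2):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.seen: dict[str, int] = {}

    def check(self, tool: str, arguments: dict) -> None:
        """Call before each tool invocation; raises when the run should stop."""
        self.steps += 1
        if self.steps > self.max_steps:
            raise RuntimeError("step limit reached")
        # Identical tool + arguments pairs map to the same key.
        key = tool + ":" + json.dumps(arguments, sort_keys=True)
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            raise RuntimeError(f"repeated call detected: {tool}")
```

When the guard raises, the surrounding loop can fall back to a summary of progress so far or escalate to a human instead of continuing to spend tokens.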

4. Non-Deterministic Behavior

LLMs can produce different outputs for the same input due to probabilistic generation. This variability creates inconsistencies in API calls, responses, and decision-making. In enterprise systems, where predictability is critical, non-deterministic behavior can lead to unreliable automation and make debugging or auditing processes difficult.

Solution

Control variability by adjusting temperature settings, enforcing structured outputs, and using deterministic prompting techniques. Add validation layers to verify responses before execution. Where consistency is critical, use predefined templates or rules to constrain outputs, ensuring stable and repeatable behavior across similar inputs and workflows.

5. Authentication & Identity

Managing secure access to APIs is complex, especially when tool-using AI agents interact with multiple systems requiring different authentication methods. Poorly managed credentials can lead to unauthorized actions, data breaches, or compliance violations. Enterprises must ensure that every action performed by the agent is properly authenticated and traceable.

Solution

Implement secure authentication methods, such as OAuth, API keys, and token-based access, with appropriate rotation policies for multi-agent systems. Use role-based access control to limit permissions based on task requirements. Maintain audit logs for all actions and integrate identity management systems to ensure secure, compliant, and traceable interactions across all connected services.

6. The “Dead End” Problem

Agents may encounter situations where no suitable tool or action is available to complete a task. This results in stalled workflows, incomplete outputs, or vague responses. In enterprise settings, such dead ends reduce reliability and can disrupt user trust, especially when tasks require precise execution across systems.

Solution

Design fallback strategies such as escalation to human operators, alternative workflows, or informative error handling. Expand tool coverage to handle edge cases, and have the agent state clearly when no action is possible. This keeps the agent useful, transparent, and capable of handling unexpected scenarios effectively.

Conclusion

Building intelligent systems that can reason and act is quickly becoming a core enterprise capability. To build AI agents enterprise teams can depend on, organizations need a structured architecture, reliable integrations, and strong control mechanisms. From planning and memory to orchestration and evaluation, each layer plays a role in ensuring consistent performance. When implemented correctly, AI agents move beyond automation into decision-driven execution, delivering measurable efficiency and scalability across business operations.

For enterprises looking to accelerate adoption, partnering with experts like Debut Infotech can simplify the journey. We offer custom AI agent development services. We also design scalable, API-driven solutions tailored to real business workflows. Our approach ensures secure integration, optimized performance, and faster deployment, helping organizations confidently build AI agents enterprise environments can trust and scale.

FAQs

A. AI agents are built by combining large language models like GPT-4 with APIs that let them interact with external tools. The model handles reasoning and language, while APIs fetch data or trigger actions. Developers define workflows, prompts, and logic so the agent can respond, decide, and act based on real-world inputs.

A. APIs act as the bridge between the LLM and external systems. The LLM, such as Claude, interprets user input and decides what action to take. APIs then execute those actions, like retrieving data or updating records. The agent loops this process, using results to refine responses and complete tasks accurately.

A. You connect AI agents to business systems by integrating APIs from tools like Salesforce or Stripe into an LLM framework. The agent uses these APIs to pull data or trigger workflows. Proper authentication, structured prompts, and error handling keep everything secure, reliable, and aligned with business operations.

A. To build AI agents enterprise teams can rely on, start with LLMs like GPT-4, connect them to APIs, and layer in business logic. You define workflows, add tools the agent can call, and control outputs with prompts and guardrails. Testing and iteration matter a lot, especially when handling real customer or operational data.

A. Common tools include frameworks such as LangChain and AutoGen, as well as APIs from providers such as OpenAI. You’ll also use vector databases, orchestration layers, and monitoring tools. Each piece handles memory, reasoning, or execution so the agent can actually do useful work beyond chatting.

A. Enterprises use AI agents for customer support, internal knowledge search, workflow automation, and sales assistance. For example, integrating with Salesforce lets agents manage leads or update records automatically. Others handle reporting, scheduling, or even basic decision-making tasks across departments.

A. Costs depend on how deep you go. A lightweight setup using APIs from OpenAI or models like Claude might cost in the low thousands per month. Once you add integrations, security layers, and scaling, enterprise builds can climb into tens of thousands, especially with ongoing API usage, infrastructure, and maintenance.

A. A simple agent can come together in 2–4 weeks if you’re using frameworks like LangChain. For enterprise setups, expect 2–4 months. That extra time goes into integrating systems, testing edge cases, tightening security, and making sure the agent behaves consistently in real business scenarios.
