How to Build Enterprise AI Copilots: A Complete Guide for Business Leaders

The experimental stage of artificial intelligence is long past. Across boardrooms and operations centers in every major business, the question has shifted from “should we explore AI?” to “how exactly do we build this the right way?” One of the most powerful implementations of this trend is the corporate AI copilot: an intelligent assistant that operates right inside your business workflows. It understands your confidential data and enables your teams to get significantly more done in less time.

Debut Infotech partners with enterprises at every step of building AI copilot systems for their businesses. This guide covers what decision makers and technical leads need to know: architecture, tools, AI system integration, timelines, and budgets.

Business leaders evaluating consulting engagements for building enterprise AI copilots should know that strategic inputs shape technical outputs. It’s possible to get from a good demo to a copilot that really changes how your teams work, but you need the right process and the right partner to make it happen.

Ready to build AI Copilot capabilities that deliver measurable ROI?

Debut Infotech offers end-to-end enterprise AI solutions encompassing use-case research, AI architecture design, full implementation, AI system integration, and ongoing MLOps platform support.

Enterprise AI Copilot: What It Is and Why the Difference Matters

A business AI copilot is a conversational AI system that uses one or more large language models (LLMs) and integrates with internal data sources and business processes. It’s designed to provide accurate responses based on specific business data when employees ask questions about customer records, company policies, sales forecasts, or technical topics.

This is significantly different from a general-purpose AI helper. A generic assistant “knows” only what is in its training data. An enterprise AI copilot knows your organization, its documents, systems, customers, and workflows. That precision is what makes it really helpful.

The distinction also matters when comparing copilots with old-fashioned chatbots. A chatbot runs on pre-defined scripts and fails when a user goes off-script. An AI copilot built on modern conversational AI and current LLMs handles open-ended natural language without breaking.

Why Are Enterprises Investing in AI Copilots Today?

According to a Gartner forecast, 40% of enterprise apps will include task-specific AI agents by the end of 2026, up from less than 5% in 2025. This is a structural shift in enterprise software, and companies that delay are losing ground to competitors that already have production systems.

The productivity case is solid. Sales copilots connected to CRM data cut time spent on admin tasks by 40%. Developer copilots boost coding productivity by 30 to 55%. Support copilots reduce resolution time by 30 to 50%. Legal review tools reduce contract review time by 40 to 60%. These are findings from enterprises that have completed full production deployments with sophisticated AI implementation services.

An AI copilot scales because it removes the most time-intensive friction in knowledge work: getting the right information at the right time. A good copilot handles tasks accurately, immediately, and without fatigue.

Where Enterprise AI Copilots Add the Most Value

| Use Case | Primary Benefit | Typical Productivity Gain |
| --- | --- | --- |
| IT Helpdesk | Automated issue resolution and escalation | 30–50% reduction in ticket resolution time |
| HR Support | Policy Q&A, onboarding, benefits queries | 40–60% reduction in repetitive queries |
| Sales Assistance | CRM data retrieval, proposal drafting, briefing prep | 40% reduction in admin time |
| Finance Reporting | Automated data pull and report generation | 50–70% faster report production |
| Legal Review | Contract summarization and regulatory research | 40–60% faster review cycles |
| Software Development | Code generation, review, and documentation | 30–55% productivity improvement |
| Customer Support | Resolution suggestions, response drafting | 15–25% improvement in first-contact resolution |

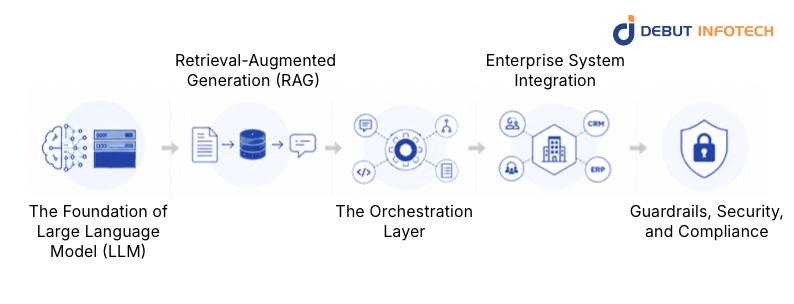

Key Aspects of a Production-Ready Enterprise AI Copilot

You need to understand the architecture before you write a line of code. When organizations work with competent AI development companies to build AI copilot systems, the first real effort is always architectural. That involves determining what the system must accomplish, how its layers connect, and where the security and compliance boundaries lie.

1. The Large Language Model (LLM) Foundation

Every part of the system relies on the base LLM for language understanding and generation. Choosing the right model is a crucial part of AI architecture design, and the choice must not rest on brand names alone.

The choice should be driven by specific criteria such as:

- Accuracy requirements: High-stakes domains like finance and healthcare demand near-perfect factual reliability. AI algorithms and reasoning capabilities vary meaningfully between models on domain-specific benchmarks.

- Latency: Real-time assistant use cases require sub-second responses. Batch processing tasks have more flexibility.

- Data residency: Regulated industries often cannot send sensitive data to external model APIs. Open-source LLMs deployed on private infrastructure address this constraint.

- Cost: API-based models are billed per token. Self-hosted solutions carry higher infrastructure costs but can be cheaper for high-volume deployments.

When working with AI, expert AI consultants always follow this rule: match the model to your use case, not the other way around.
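The cost trade-off between per-token APIs and self-hosting can be made concrete with a rough break-even calculation. The sketch below is illustrative only: the price and infrastructure figures are assumed placeholders, not vendor quotes, and it treats self-hosting as a flat monthly cost with no per-token component.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Cost of a per-token API for a given monthly token volume."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_infra_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which a flat self-hosting cost equals the API bill."""
    return fixed_monthly_infra_cost / price_per_million_tokens * 1_000_000


# Hypothetical numbers for illustration only.
API_PRICE = 5.0         # USD per million tokens (assumed)
INFRA_COST = 10_000.0   # USD per month for self-hosted GPUs and operations (assumed)

threshold = break_even_tokens(INFRA_COST, API_PRICE)
print(f"Break-even volume: {threshold:,.0f} tokens/month")
```

Below the break-even volume the API is cheaper under these assumptions; above it, self-hosting starts to pay off. A real comparison would also account for engineering time and utilization.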

2. Retrieval-Augmented Generation (RAG)

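RAG is arguably the most important single architectural decision in the entire build. With RAG, the system retrieves relevant passages from your internal knowledge base at query time and passes them to the model, so the response is grounded in your actual internal information rather than the model's general training data.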

The impact is measurable. RAG-enabled copilots provide 40 to 60% more accurate answers than standalone LLMs for enterprise-specific questions. For high-stakes domains, RAG is not optional — it is the foundation of the entire system’s reliability. A production-grade RAG pipeline involves document ingestion from all relevant sources, semantic chunking of that content, embedding generation using models like OpenAI’s text-embedding-3-large or Cohere’s embed-v3, storage in a vector database, and semantic retrieval at query time.

Chunking at paragraph or section boundaries rather than fixed character counts improves retrieval accuracy by 15 to 25% — a detail that separates systems built by experienced AI development services teams from those assembled without that depth of applied knowledge.
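To make the pipeline concrete, here is a minimal sketch of paragraph-boundary chunking followed by semantic retrieval. It is a toy under stated assumptions: the `embed` function uses word counts as a stand-in for a real embedding model, an in-memory list stands in for the vector database, and the sample document and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_by_paragraph(text: str, max_chars: int = 500) -> list[str]:
    """Split on blank lines, then merge adjacent paragraphs up to max_chars."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks: list[str] = []
    current = ""
    for para in paragraphs:
        if current and len(current) + len(para) + 2 > max_chars:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


DOC = """Employees accrue 20 days of paid vacation per year.

Expense reports must be submitted within 30 days of purchase.

Remote work requires manager approval."""

chunks = chunk_by_paragraph(DOC, max_chars=80)
context = retrieve("How many vacation days do employees get?", chunks)
# The retrieved chunk is placed in the prompt so the model answers from it.
prompt = f"Answer using only this context:\n{context[0]}"
```

In a production system the same shape holds, with a real embedding model and a vector database replacing the two toy pieces.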

3. The Orchestration Layer

This layer controls conversation, tool selection, and multi-step reasoning. To provide a unified response to a user’s request, the orchestration layer determines the best course of action, the proper sequence of operations, and how to combine the results.

Frameworks commonly used here include LangChain, LlamaIndex, and Microsoft’s Semantic Kernel. Each provides pre-built components for building complex agent workflows without reinventing core infrastructure.

In this step, an AI automation strategy moves from an idea to implementation. Companies that invest in effective orchestration design create copilots that autonomously complete multi-step business processes, not just single-turn requests.
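The core idea of orchestration can be sketched without any framework: register tools, decide which ones a request needs, run them in order, and combine the results. This is a simplified illustration, not how LangChain, LlamaIndex, or Semantic Kernel implement it. The tool names are hypothetical stubs, and the keyword-based `plan` function stands in for the LLM's planning step.

```python
from typing import Callable

# Registry of callable tools, keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {}


def tool(name: str):
    """Register a function as a callable tool under the given name."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOLS[name] = fn
        return fn
    return decorator


@tool("crm_lookup")
def crm_lookup(customer: str) -> str:
    # Hypothetical stub; a real tool would call the CRM's API.
    return f"{customer}: 3 open opportunities"


@tool("ticket_status")
def ticket_status(customer: str) -> str:
    # Hypothetical stub; a real tool would call the ITSM system.
    return f"{customer}: 1 unresolved ticket"


def plan(request: str) -> list[str]:
    """Stand-in for the LLM's planning step: pick tools by keyword."""
    text = request.lower()
    steps = []
    if "opportunit" in text:
        steps.append("crm_lookup")
    if "ticket" in text:
        steps.append("ticket_status")
    return steps


def orchestrate(request: str, customer: str) -> str:
    """Run the planned tools in order and combine their results."""
    results = [TOOLS[name](customer) for name in plan(request)]
    return " | ".join(results) if results else "No tool matched the request."


answer = orchestrate("Show opportunities and tickets for Acme", "Acme")
```

In a real copilot the planner would be a model call that returns the tool sequence, and the combined results would be passed back to the model to draft the final reply.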

4. Enterprise System Integration

An AI copilot that only reads static documents provides limited business value. The depth of its AI system integration with the tools your organization actually runs on determines whether the copilot becomes a daily-use productivity tool or a novelty that gets ignored after the first week.

Enterprise AI solutions for production usually integrate with:

- Custom internal applications

- CRM platforms

- ERP systems

- ITSM tools

- Collaboration tools

- HR platforms

5. Guardrails, Security, and Compliance

Enterprise AI security isn’t a checklist item you tick off at launch. Compliance rules vary by industry and region. GDPR applies to any organization that processes the personal data of individuals in the EU. HIPAA governs healthcare data in the United States, while SOC 2 and ISO 27001 are common baseline standards for enterprise software environments. The EU AI Act has entered into force and is being applied in phases, with governance obligations for high-risk AI systems.

The right approach is to embed all compliance requirements into the AI architecture from day one — retrofitting them after deployment is expensive, and in regulated sectors is often legally insufficient.

How to Build and Deploy Enterprise AI Copilots Step-by-Step

This section answers the core question directly: What is the actual process that experienced enterprise AI development teams follow when building AI copilot systems from concept to production?

The stages below outline the structured approach that Debut Infotech uses with clients across industries.

Stage 1: Strategic Discovery and Use Case Definition

The most important work in any enterprise AI project happens before any code is written. Strategic discovery involves working with business stakeholders to define with precision what the copilot needs to do, what data it needs access to, what success looks like in measurable terms, and what the compliance constraints are.

Experienced AI consultants will also conduct a data-readiness assessment during this phase. The quality of your RAG implementation depends entirely on the quality of the underlying knowledge base. Organizations with disorganized or outdated internal documentation need to address that before development begins. A copilot built on poor data will give unreliable answers regardless of which LLM powers it.

Stage 2: Selection of AI Architecture and Technology

With the use case defined and the data assessed, the next step is designing the full technical architecture. This covers LLM selection, RAG pipeline design, integration architecture, security model, and AI deployment models. Good AI architecture design at this phase saves months of costly rework later and prevents the technical debt that stalls enterprise AI projects at scale.

AI deployment models are decisions that affect cost, security, and operational complexity throughout the system’s lifetime. Three primary patterns exist in enterprise deployments:

| Deployment Model | Description | Best Suited For |
| --- | --- | --- |
| Cloud API (Hosted LLM) | Use a hosted LLM API from OpenAI, Anthropic, or Google | Fast deployment, flexible scaling, standard data requirements |
| Self-Hosted Open Source | Deploy open-source LLMs on private cloud infrastructure | Strict data residency, high volume, regulated industries |
| Hybrid | Route general queries to cloud API, sensitive queries to the private model | Balancing cost, performance, and compliance requirements |

According to McKinsey, about 78% of enterprise AI deployments in 2026 use hybrid AI deployment models — a figure that reflects how well organizations now understand the trade-offs and design for them deliberately.
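The hybrid pattern comes down to a routing decision made before any model is called. The sketch below shows one simple way to express it, assuming a pattern-based sensitivity check. The patterns and the `"private"` and `"cloud"` labels are illustrative assumptions, and a real deployment would more likely rely on a data classification service than on regular expressions.

```python
import re

# Illustrative patterns only; real classifiers are usually more thorough.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),                        # US SSN-like number
    re.compile(r"\b(salary|diagnosis|patient)\b", re.IGNORECASE),  # sensitive topics
]


def route(query: str) -> str:
    """Return 'private' for sensitive queries, otherwise 'cloud'."""
    if any(pattern.search(query) for pattern in SENSITIVE_PATTERNS):
        return "private"
    return "cloud"


for q in ["Summarize our travel policy", "What is the patient diagnosis?"]:
    print(q, "->", route(q))
```

Defaulting to the private model whenever the check is uncertain is the safer design choice in regulated environments, at the price of higher cost per query.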

The full technology stack for a production enterprise AI copilot looks like this:

| Layer | Common Technologies |
| --- | --- |
| LLM | GPT-4o, Claude, Gemini 2.0, Llama 3, Mistral |
| Orchestration | LangChain, LlamaIndex, Semantic Kernel |
| Vector Database | Pinecone, Weaviate, Qdrant, pgvector |
| Embedding Models | OpenAI text-embedding-3-large, Cohere embed-v3, BGE-large |
| Backend API | Python (FastAPI), Node.js |
| Enterprise Integration | REST APIs, GraphQL, middleware, RPA for legacy systems |
| Cloud Infrastructure | AWS, Azure, GCP, Kubernetes |
| MLOps Platforms | MLflow, Weights & Biases, Langfuse, Arize |
| Security Layer | OAuth 2.0, RBAC, encryption, audit logging, prompt guards |

Stage 3: Data Preparation and Knowledge Base Building

This phase is always the most underestimated in enterprise AI development initiatives. The process of building the knowledge base for the RAG pipeline entails gathering and cleaning documents, setting up ingestion pipelines, creating embeddings, and putting them into a vector store with metadata tagging.

This involves exporting documentation, processing data, synchronizing pipelines, and tagging for retrieval. Good preparation is essential for good answers: if this stage is rushed, the copilot is likely to give inconsistent replies. A credible AI services vendor will treat structured data preparation as a priority and bring direct experience with it.

Stage 4: Core Development and AI System Integration

Development begins with building the orchestration logic, conversation management, and AI system integration with enterprise platforms through APIs. A copilot is typically integrated with a CRM or ERP by authenticating against the system, defining the operations it may perform, and registering those operations as callable tools.

Based on what users ask for, the LLM chooses which tools to call. Security restrictions such as role-based access and metadata filtering are built in during development to protect sensitive data.
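Role-based access combined with metadata filtering can be pictured as a filter applied to retrieved content before it ever reaches the model. The following is a minimal sketch under assumptions: the `Chunk` type, its `allowed_roles` metadata field, and the role names are hypothetical, and real vector databases usually apply such filters inside the query itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset[str]  # metadata tag attached at ingestion time


def filter_for_user(chunks: list[Chunk], user_roles: set[str]) -> list[Chunk]:
    """Keep only chunks whose allowed roles overlap the user's roles."""
    return [c for c in chunks if c.allowed_roles & user_roles]


knowledge_base = [
    Chunk("Holiday calendar for 2026", frozenset({"employee", "hr"})),
    Chunk("Executive compensation bands", frozenset({"hr"})),
]

visible = filter_for_user(knowledge_base, {"employee"})
```

Filtering before generation means the model never sees content the user is not entitled to, which is more robust than asking the model to withhold it.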

Prompt engineering shapes the LLM's responses and strongly influences answer quality and user satisfaction, so it has a large effect on the overall AI automation strategy.

Stage 5: Evaluation and Pilot Testing

Before a full deployment, run a pilot with 20 to 50 people for 4 to 8 weeks. This will help you discover integration issues, user experience concerns, and accuracy gaps. The key metrics to track are accuracy against verified data, resolution rates, response times, and user satisfaction.

Gather qualitative feedback on the copilot’s performance to adjust prompts and improve the system. LLMs can produce wrong answers, so combine a strong RAG process, human review of sensitive outputs, and automated fact-checking to verify accuracy before rolling out to all users.
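The pilot metrics named above are straightforward to compute from an interaction log. Here is a small sketch, assuming each logged interaction has already been graded. The `Interaction` fields and the sample data are hypothetical, not a standard schema.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Interaction:
    correct: bool      # answer matched verified reference data
    resolved: bool     # user did not need to escalate
    latency_s: float   # response time in seconds
    rating: int        # user satisfaction, 1 to 5


def summarize(log: list[Interaction]) -> dict[str, float]:
    """Aggregate the four pilot metrics over a non-empty log."""
    n = len(log)
    return {
        "accuracy": sum(i.correct for i in log) / n,
        "resolution_rate": sum(i.resolved for i in log) / n,
        "median_latency_s": median(i.latency_s for i in log),
        "avg_rating": sum(i.rating for i in log) / n,
    }


# Made-up sample data for illustration.
pilot_log = [
    Interaction(True, True, 1.0, 5),
    Interaction(True, False, 2.0, 4),
    Interaction(True, True, 3.0, 4),
    Interaction(False, True, 4.0, 3),
]
report = summarize(pilot_log)
```

Tracking these weekly during the pilot shows whether prompt and retrieval changes are actually moving the numbers.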

Stage 6: Full Deployment, MLOps Integration, and Continuous Improvement

Full deployment includes releasing the copilot through channels such as Slack, Teams, and mobile apps, and setting up MLOps infrastructure to sustain performance. MLOps platforms such as MLflow and Arize track metrics and can alert teams to performance issues, which supports continual improvement.

Because ongoing maintenance is required, dedicated engineers from AI development companies are vital for long-term ROI, and organizations should reflect this in their total cost of ownership.

Effective change management is also critical, as only 28% of employees feel confident adopting AI technologies. Clear communication, planned onboarding, and leadership support drive acceptance.
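The monitoring idea can be illustrated with a rolling-window accuracy check that signals when quality drops below a threshold. This is a generic sketch, not the API of MLflow, Arize, or any other platform. The class name, window size, and threshold are assumptions chosen for the example.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks graded answers over a rolling window and flags degradation."""

    def __init__(self, window: int = 100, threshold: float = 0.9):
        self.results: deque[bool] = deque(maxlen=window)
        self.threshold = threshold

    def accuracy(self) -> float:
        """Share of correct answers in the current window."""
        return sum(self.results) / len(self.results)

    def record(self, correct: bool) -> bool:
        """Record one graded answer; return True if an alert should fire."""
        self.results.append(correct)
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.threshold


monitor = AccuracyMonitor(window=4, threshold=0.75)
alerts = [monitor.record(c) for c in [True, True, True, True, False, False]]
```

Waiting until the window is full avoids noisy alerts from the first few interactions after a release.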

How Enterprises Ensure Scalability, Security, and Accuracy in AI Copilot Systems

These three requirements come up in every serious enterprise AI engagement, and addressing them requires deliberate architectural choices — not general best-practice platitudes.

Scalability involves infrastructure and design. Cloud deployments on Kubernetes scale horizontally, while caching reduces LLM calls and costs. Asynchronous processing eases the load on inference systems. Mature AI algorithms for capacity planning and auto-scaling should be integrated from the start of production deployments.

Security is layered throughout the stack, with zero-trust architecture requiring authentication for all requests. End-to-end encryption secures data, while RBAC and metadata filtering restrict unauthorized access. Regular audits and penetration testing identify vulnerabilities.

Accuracy primarily relies on RAG quality and, secondarily, on prompt engineering. Reliable retrieval stems from clean, structured, and current documentation. High-quality embedding models, semantic chunking, and optimized retrieval settings enhance response accuracy. Human reviews for critical outputs help catch errors, while ongoing A/B testing of configurations and prompts, managed via MLOps platforms, enables continuous empirical improvement.
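The caching point under scalability can be shown with a small wrapper that returns a stored answer when the same question is asked again. This is a minimal sketch under assumptions: `generate` is any callable that stands in for the LLM call, and the normalization (lowercasing and collapsing whitespace) is a deliberately simple choice.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Caches answers by normalized prompt to avoid repeated LLM calls."""

    def __init__(self, generate: Callable[[str], str]):
        self._generate = generate          # stand-in for the real LLM call
        self._store: dict[str, str] = {}
        self.hits = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivial variants share one entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        answer = self._generate(prompt)
        self._store[key] = answer
        return answer
```

In an enterprise setting the cache key would also need to include the user's role or permissions, so that a cached answer is never served to someone who could not have retrieved the underlying data.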

AI Copilot Development Cost and Timeline

One of the first questions every business leader asks is what it will cost and how long it will take to build AI copilot capabilities. The answer depends on scope, integration complexity, data readiness, and compliance requirements — but the following ranges reflect real-world project data from enterprise AI solutions deployments across industries.

Development Cost Ranges

| Project Scope | Estimated Cost Range | Typical Timeline |
| --- | --- | --- |
| Single use case, cloud LLM, limited integrations | $45,000 – $150,000 | 6–12 weeks |
| Mid-complexity, 2–3 integrations, custom RAG pipeline | $150,000 – $400,000 | 3–6 months |
| Full enterprise deployment, multiple use cases, deep integrations | $400,000 – $1,500,000+ | 6–12 months |
| SaaS copilot platform subscription (commercial) | $200–$400 per user/year | 2–8 weeks setup |

Full production costs include data engineering, AI integration, compliance, and MLOps staffing, not just model API costs. A phased approach is recommended: deploy a well-defined use case, show measurable ROI, then expand. This approach de-risks the investment, builds organizational confidence, and generates the operational knowledge needed to scale AI deployment models effectively.

What to Look for in an AI Copilot Development Company

Not every organization has the internal engineering depth to build AI copilot systems from scratch. For those engaging an external AI copilot development company, the selection criteria matter as much as the vendor shortlist.

Look for a partner that demonstrates:

- Production track record: References from completed production deployments, not just proofs of concept

- Full-stack enterprise AI expertise: Capability across LLM integration, RAG architecture, AI system integration, cloud infrastructure, and AI architecture design — not just one layer

- Structured data readiness methodology: An honest assessment of your internal data before development begins, not a promise that the model will figure it out

- Compliance depth: Demonstrated experience in your specific regulatory environment — HIPAA, GDPR, SOC 2, ISO 27001, EU AI Act

- MLOps practices: A defined approach to post-deployment monitoring, drift detection, and continuous evaluation using MLOps platforms

- AI automation strategy: The ability to connect AI investments to measurable business outcomes, not just deliver technical specifications

Debut Infotech operates as a full-spectrum AI consulting company, covering everything from initial enterprise AI solutions strategy through to post-deployment MLOps support.

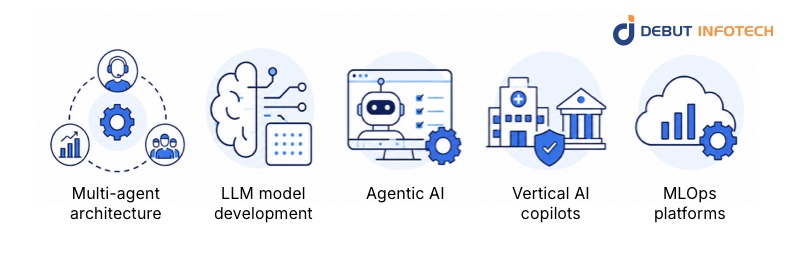

Key AI Trends Shaping Enterprise Copilot Development in 2026

For organizations planning deployments now, the following AI trends are worth building into your technical architecture and your broader AI automation strategy from the start.

- Multi-agent architectures are now standard for complex enterprise workflows, with specialized agents for functions such as IT support, finance, and HR, coordinated by an orchestration layer.

- LLM model development is progressing quickly, with 2026 models showing enhanced reasoning, reduced hallucination rates, and better handling of long contexts than those from 2024.

- Agentic AI signifies a significant advancement in enterprise copilot functionality, moving beyond simple question-answering to autonomously executing complex business processes. Organizations developing copilots should plan for agentic features in their AI systems integration, even if initial applications are limited in scope.

- Vertical AI copilots, tailored to specific industries, yield better results than general-purpose AI. In healthcare, organizations using clinical workflow copilots excel in documentation and patient coordination, while financial institutions benefit from regulatory-focused copilots for compliance tasks. This trend underscores the importance of domain specificity in AI development and the advantages of collaborating with experts in vertical markets.

- MLOps platforms are becoming crucial for production infrastructure. Early AI deployments often overlooked MLOps, but in 2026 successful organizations treat it as a key engineering investment. Proactive MLOps investment is far more cost-effective than reactive fixes for deteriorating AI systems.

Looking for an Experienced AI Copilot Development Company to Partner With?

Work with Debut Infotech’s team of AI engineers, enterprise architects, and AI implementation services specialists who have shipped production copilot systems across regulated and complex industries.

Conclusion

Building effective AI copilot systems for enterprise use is a critical competitive advantage for organizations. Understanding architecture, utilizing mature tools, and implementing proven AI deployment models are essential. Key aspects include disciplined execution—rigorous design, thorough data preparation, careful integration, structured piloting, and sustained MLOps.

Debut Infotech offers comprehensive capabilities, focusing on custom development, AI implementation services, compliance, and user-friendly systems. Their approach emphasizes measurable business outcomes to ensure ROI rather than mere technical innovation, aiding organizations from initial scoping to long-term production.

Frequently Asked Questions

A. To develop AI copilot systems for enterprises, select a suitable LLM, create a RAG pipeline linked to internal knowledge, and establish API integrations with the relevant business systems. An orchestration layer manages the data queries and actions needed to fulfill user requests.

A. Development costs for AI deployment can range from about $45,000 for a simple use case to over $1.5 million for a comprehensive enterprise solution. Key cost factors include data engineering, integration complexity, compliance architecture, and MLOps staffing, rather than LLM API costs.

A. A focused deployment of a cloud-based LLM for a single use case can be achieved in 6 to 12 weeks, while a full enterprise deployment typically takes 6 to 12 months. Experts recommend a phased approach: deploy one use case first, validate results, and then expand.

A. A production enterprise AI copilot consists of five core layers: an LLM foundation (such as GPT-4o or Claude), a RAG pipeline with a vector database, an orchestration framework, enterprise system integrations, and a security and compliance layer. MLOps platforms for ongoing monitoring are also crucial and are often overlooked until after launch.

A. AI system integration with CRMs, ERPs, and internal tools is achieved through API connectors on the copilot’s orchestration layer. These integrations authenticate with targets, define callable operations, and enable a Large Language Model (LLM) to determine the sequence of tool invocations based on user requests.

A. Scalability is achieved through cloud infrastructure, including horizontal auto-scaling, content caching, and asynchronous processing, along with AI for capacity monitoring. Security employs a zero-trust network, end-to-end encryption, role-based access control, and prompt injection detection. Accuracy hinges on the quality of the RAG pipeline, well-structured documentation, semantic chunking, and high-quality embeddings.

A. A traditional chatbot relies on predefined scripts, struggles when users deviate from set paths, and lacks access to live data. In contrast, an enterprise AI copilot utilizes advanced conversational AI to understand natural language, maintain context, and integrate with live data systems.
