AI Voice Generator Platform Development: All You Need To Know

AI Voice Generator platforms are reshaping how businesses communicate, automate, and produce audio content. The global AI voice generator market is projected to grow from roughly $3.0 billion in 2024 to $20.4 billion by 2030, reflecting rapid adoption across media, support, and automation applications.

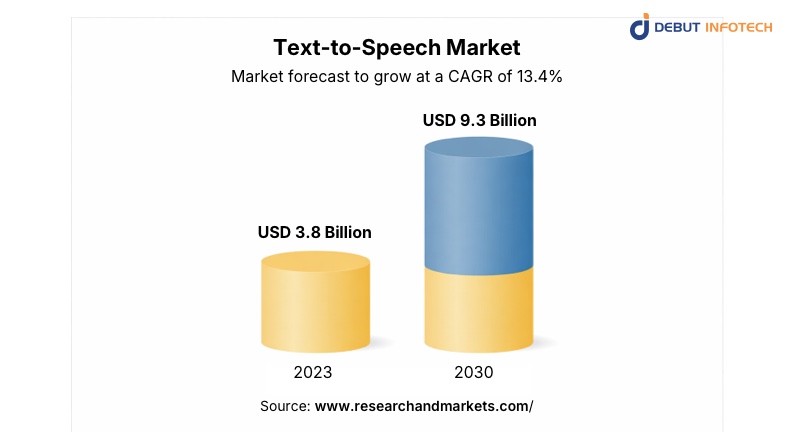

In parallel, the broader AI voice market is expected to hit $10.12 billion in 2025 and expand further in 2026, driven by voice assistants, NLP, and smart device integration. Text-to-speech technology alone could reach $9.3 billion by 2030 as demand for accessible and scalable audio content rises.

As organizations pursue natural, customizable speech solutions, AI voice generators are becoming central to customer experience, content efficiency, and global reach.

In this guide, we explain what AI voice generator platform development is, how such a platform works, its benefits and key components, a step-by-step development process, and where the technology is heading.

Overview of an AI Voice Generator Platform

An AI voice generator platform converts written text into natural-sounding speech using machine learning models trained on human voice data. These platforms are used across customer support, media production, e-learning, accessibility tools, gaming, and marketing.

Modern solutions go beyond robotic speech. They produce voices with tone, pacing, emotion, and language accuracy that feel close to real human delivery. For businesses, this means faster content production, consistent voice output, and lower dependence on human voice talent.

What is AI Voice Generator Platform Development?

AI voice generator platform development is the process of designing, building, and deploying software that generates synthetic human speech from text or audio input. It involves training speech models, building APIs, enabling voice customization, and delivering audio output in real time or batch format.

The goal is to provide scalable, controllable, and realistic voice generation that fits business use cases such as IVR systems, audiobooks, chatbots, ads, and product walkthroughs.

How the AI Voice Generator Platform Works

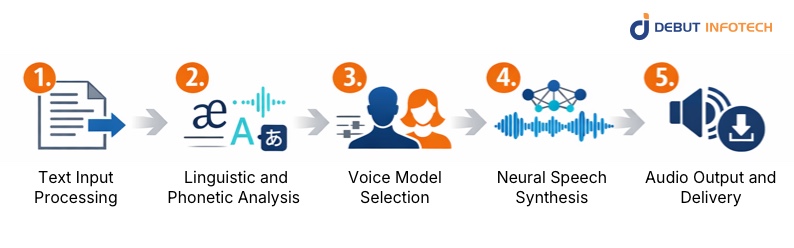

1. Text Input Processing

The platform receives raw text from users, APIs, or integrated applications. It analyzes punctuation, sentence structure, abbreviations, and formatting to determine how the text should be spoken. This stage ensures the input is properly structured before speech generation begins.
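As a rough illustration of this stage, the sketch below expands a few abbreviations, reads numbers digit by digit, and collapses whitespace. The abbreviation table and the function names are assumptions made for this example; a production system would rely on a much larger, language-specific rule set.

```python
import re

# Hypothetical abbreviation table; real platforms use far larger lexicons.
ABBREVIATIONS = {
    "Dr.": "Doctor",
    "St.": "Street",
    "etc.": "et cetera",
}

DIGIT_WORDS = ["zero", "one", "two", "three", "four",
               "five", "six", "seven", "eight", "nine"]


def spell_digits(match: re.Match) -> str:
    """Read a number digit by digit, e.g. '42' becomes 'four two'."""
    return " ".join(DIGIT_WORDS[int(d)] for d in match.group())


def normalize_text(raw: str) -> str:
    """Expand abbreviations, spell out digits, and collapse whitespace."""
    text = raw
    for abbreviation, expansion in ABBREVIATIONS.items():
        text = text.replace(abbreviation, expansion)
    text = re.sub(r"\d+", spell_digits, text)
    return re.sub(r"\s+", " ", text).strip()


print(normalize_text("Dr.  Lee lives at 42 Main St."))
```

Reading digits one at a time is a deliberate simplification; deciding between "forty-two", "four two", or a date or currency reading is one of the harder parts of real text normalization.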

2. Linguistic and Phonetic Analysis

The system breaks text into phonemes while applying pronunciation rules, stress patterns, and intonation logic. It considers language-specific nuances, accents, and contextual meaning to determine how words should sound when spoken naturally, rather than following rigid dictionary pronunciations.
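The idea of context-dependent pronunciation can be sketched with a tiny lookup table. The lexicon below uses ARPAbet-style symbols and contains only a handful of illustrative entries; the `tense` parameter is a stand-in, assumed for this example, for the contextual analysis a real system would perform.

```python
# Toy pronunciation lexicon with ARPAbet-style symbols.
# The structure and the few entries are assumptions for illustration.
LEXICON = {
    "read": {"present": ["R", "IY1", "D"], "past": ["R", "EH1", "D"]},
    "the": {"default": ["DH", "AH0"]},
    "book": {"default": ["B", "UH1", "K"]},
}


def to_phonemes(word: str, tense: str = "present") -> list[str]:
    """Look up a word; fall back to spelling it out letter by letter."""
    entry = LEXICON.get(word.lower())
    if entry is None:
        # Crude fallback for out-of-vocabulary words.
        return list(word.upper())
    return entry.get(tense) or entry.get("default") or next(iter(entry.values()))


print(to_phonemes("read", tense="present"))
print(to_phonemes("read", tense="past"))
```

Production systems typically replace the fallback with a trained grapheme-to-phoneme model so that unseen words still receive a plausible pronunciation.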

3. Voice Model Selection

A predefined or custom-trained voice model is selected based on user preferences. This model defines characteristics such as gender, accent, pitch, and speaking style. Advanced platforms allow dynamic switching between voices to suit different content types or audience expectations.
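One simple way to model this step is a catalogue of voice profiles filtered by a required language and ranked by optional preferences. The `VoiceProfile` fields and the catalogue entries below are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VoiceProfile:
    voice_id: str
    language: str
    accent: str
    style: str


# Hypothetical catalogue of available voices.
VOICES = [
    VoiceProfile("en-us-narrator", "en", "US", "formal"),
    VoiceProfile("en-gb-casual", "en", "GB", "conversational"),
    VoiceProfile("es-es-narrator", "es", "ES", "formal"),
]


def select_voice(language: str,
                 accent: Optional[str] = None,
                 style: Optional[str] = None) -> VoiceProfile:
    """Language is mandatory; accent and style are soft preferences."""
    candidates = [v for v in VOICES if v.language == language]
    if not candidates:
        raise LookupError(f"no voice available for language {language!r}")

    def score(voice: VoiceProfile) -> int:
        # Count how many optional preferences this voice satisfies.
        return int(voice.accent == accent) + int(voice.style == style)

    # max() keeps the first candidate when scores tie.
    return max(candidates, key=score)


print(select_voice("en", style="conversational").voice_id)
```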

4. Neural Speech Synthesis

Deep learning models convert phonetic data into audio waveforms using neural text-to-speech techniques. These models simulate human speech patterns, including pauses, emphasis, and rhythm, producing speech that sounds fluid rather than mechanical or overly uniform.

5. Audio Output and Delivery

The generated voice is delivered either in real time or as downloadable audio files. Platforms support multiple formats and streaming options, enabling seamless use across web apps, mobile devices, IVR systems, and media production workflows without additional processing steps.

Key Components of an AI Voice Generator Platform

1. High-Quality Voice Synthesis

This component focuses on producing speech that sounds clear, natural, and human-like. It minimizes robotic artifacts and pronunciation errors while maintaining consistent tone across long-form audio. High-quality synthesis is critical for professional use cases like media, education, and customer-facing applications.

2. Real-Time Voice Generation

Real-time voice generation enables instant speech output with minimal latency. This is essential for conversational interfaces such as chatbots, virtual assistants, and call-automation systems, where delays can disrupt the user experience and reduce the effectiveness of voice-driven interactions.
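A common way to reduce perceived latency is to synthesize and send audio sentence by sentence instead of waiting for the whole text. The sketch below assumes a `synthesize` callable that turns a string into audio bytes; it is a placeholder for whatever model the platform uses.

```python
import re
from typing import Callable, Iterator


def split_sentences(text: str) -> list[str]:
    """Split on sentence-ending punctuation followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def stream_speech(text: str,
                  synthesize: Callable[[str], bytes]) -> Iterator[bytes]:
    """Yield one audio chunk per sentence so playback can start early."""
    for sentence in split_sentences(text):
        yield synthesize(sentence)


# Stand-in synthesizer for demonstration: returns the text as bytes.
def fake_synthesize(sentence: str) -> bytes:
    return sentence.encode("utf-8")


for chunk in stream_speech("Hi there. How are you? Fine!", fake_synthesize):
    print(chunk)
```

Because `stream_speech` is a generator, the first chunk is available as soon as the first sentence is synthesized, which is what matters for time to first audio in a conversation.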

3. Voice Customization

Voice customization allows users to adjust pitch, speed, tone, pauses, and emphasis. Some platforms also support style presets like formal, conversational, or expressive. These controls help businesses align generated voices with brand identity and specific audience expectations.
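Many speech engines accept these controls as SSML, the W3C Speech Synthesis Markup Language. The helper below builds a minimal SSML fragment with `<prosody>` and `<break>` elements. The function name and defaults are assumptions, and individual engines differ in which attribute values they accept and in whether they require additional attributes on `<speak>`.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(text: str, rate: str = "medium", pitch: str = "medium",
               pause_ms: int = 0) -> str:
    """Wrap text in an SSML <prosody> element, optionally followed by a pause."""
    body = (
        f"<prosody rate={quoteattr(rate)} pitch={quoteattr(pitch)}>"
        f"{escape(text)}</prosody>"
    )
    if pause_ms > 0:
        body += f'<break time="{pause_ms}ms"/>'
    return f"<speak>{body}</speak>"


print(build_ssml("Q&A time", rate="slow", pitch="+10%", pause_ms=300))
```

Escaping the text matters here: characters such as `&` or `<` in user input would otherwise produce invalid markup.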

4. Text-to-Speech Integration

Text-to-speech integration enables seamless conversion of written content into spoken audio across applications. It supports APIs, dashboards, and third-party tools, allowing developers to embed voice generation into websites, apps, learning platforms, and enterprise systems without complex setup.

5. Speech Emotion Recognition

Speech emotion recognition analyzes context and emotional cues within text to apply appropriate vocal expression. This feature helps generate voices that sound empathetic, upbeat, calm, or serious, improving engagement in customer support, storytelling, and interactive voice applications.

6. Multilingual Support

Multilingual support allows the platform to generate speech across multiple languages and accents using a unified system. It ensures correct pronunciation, grammar, and cultural tone without rebuilding AI models for each market, enabling faster, more cost-effective global deployment.

7. Audio File Export

Audio file export enables users to download generated speech in standard formats like MP3 or WAV. This makes it easy to reuse voice outputs across ads, videos, podcasts, training materials, and offline applications without having to regenerate audio each time.
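For WAV output, Python's standard `wave` module is enough to package raw samples into a file. The sketch below assumes the model produces 16-bit mono PCM samples and uses a generated test tone in place of real model output. MP3 export would need an external encoder and is not shown.

```python
import io
import math
import struct
import wave


def pcm_to_wav_bytes(samples: list[int], sample_rate: int = 22050) -> bytes:
    """Package 16-bit mono PCM samples into an in-memory WAV file."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)            # mono
        wav.setsampwidth(2)            # 2 bytes per sample = 16-bit audio
        wav.setframerate(sample_rate)
        wav.writeframes(struct.pack(f"<{len(samples)}h", *samples))
    return buffer.getvalue()


# Stand-in for model output: half a second of a 440 Hz tone.
tone = [int(12000 * math.sin(2 * math.pi * 440 * n / 22050))
        for n in range(11025)]
wav_bytes = pcm_to_wav_bytes(tone)

# Write the result to disk for download or reuse:
# with open("speech.wav", "wb") as f:
#     f.write(wav_bytes)
```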

Reasons to Invest in AI Voice Generator Platform Development

1. Cost-Effectiveness

AI voice generator platforms can significantly reduce long-term audio production costs by cutting down on repeated studio sessions, voice actor fees, and post-production expenses. Once deployed, the system generates voice content on demand at a low marginal cost per clip. This makes it easier for businesses to scale audio output while keeping operational spending predictable.

2. Scalability and Flexibility

A well-architected AI voice generator platform scales as content demands increase. With infrastructure that adds capacity under load, performance can stay consistent whether the platform generates hundreds or millions of voice outputs. Businesses can add new languages, voices, or use cases without rebuilding the platform, making it suitable for startups and enterprises planning steady or rapid growth.

3. Improved Customer Experience

Consistent, natural-sounding voice interactions improve how users perceive digital products. AI-generated voices provide clear, friendly, and responsive communication across touchpoints like support lines, onboarding flows, and virtual assistants. This consistency reduces friction, improves engagement, and helps users complete tasks faster without confusion or fatigue.

4. Multilingual Capabilities

AI voice platforms support multiple languages and regional accents from a single system. This removes the need for separate voice teams or localized production workflows. Businesses can enter new markets faster while maintaining consistent quality and tone, making global expansion more practical and less resource-intensive.

5. Faster Content Production

Voice content that once took days to script, record, and edit can now be produced in minutes. Marketing teams, educators, and product teams can iterate quickly, test variations, and update audio content instantly, without scheduling delays or reliance on external production timelines.

6. Brand Voice Consistency

AI voice generators allow companies to define a consistent brand voice and apply it across all audio outputs. This ensures uniform tone, pacing, and delivery in ads, support systems, and digital products. A consistent audio identity strengthens brand recognition and builds trust with users over time.

Step-by-Step Guide for Developing an AI Voice Generator Platform

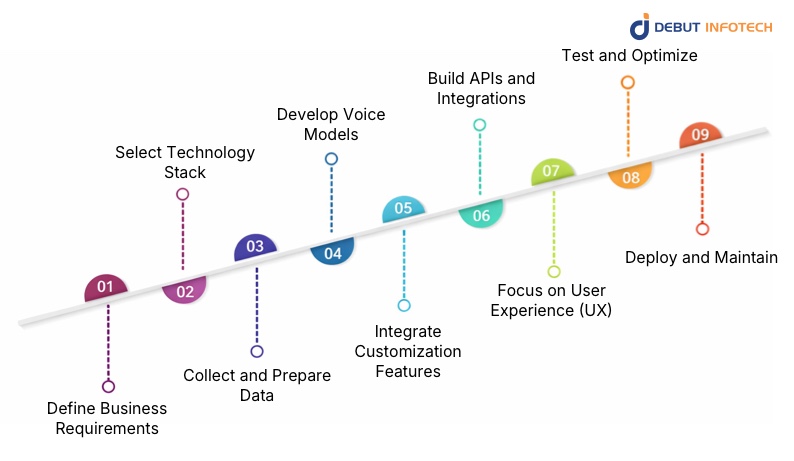

Development follows a structured sequence that moves from planning and model training to integration, testing, deployment, and long-term optimization. Here is a step-by-step guide:

1. Define Business Requirements

Start by identifying clear use cases, target users, supported languages, and performance expectations. Decide whether the platform will serve real-time conversations, content production, or both. This step aligns technical decisions with business goals and prevents costly redesigns later in development.

2. Select Technology Stack

Choose AI frameworks and tools for speech synthesis, model training, APIs, and cloud deployment. Decisions should account for scalability, latency requirements, and long-term maintenance. Popular stacks often combine deep learning libraries, cloud GPUs, and microservices to support efficient voice generation workflows.

3. Collect and Prepare Data

Gather high-quality voice datasets with proper consent and usage rights. Clean the data by removing noise, inconsistencies, and pronunciation errors. Labeling and normalization are critical at this stage, as model accuracy and naturalness depend heavily on the quality of the training data.
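The filtering part of this step can be sketched as a pass over a dataset manifest. The `Clip` fields and the duration thresholds below are assumptions for illustration; actual limits depend on the model and the recording conditions.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    path: str
    transcript: str
    duration_s: float
    consent: bool


def prepare_manifest(clips: list[Clip], min_s: float = 1.0,
                     max_s: float = 15.0) -> list[Clip]:
    """Keep consented clips of usable length and tidy their transcripts."""
    kept = []
    for clip in clips:
        if not clip.consent:
            continue  # never train on audio without recorded consent
        if not (min_s <= clip.duration_s <= max_s):
            continue  # too short or too long to be useful
        text = " ".join(clip.transcript.split())
        if not text:
            continue  # empty transcript cannot be aligned with audio
        kept.append(Clip(clip.path, text, clip.duration_s, clip.consent))
    return kept


raw = [
    Clip("a.wav", "  Hello   world ", 2.5, True),
    Clip("b.wav", "No consent given", 3.0, False),
    Clip("c.wav", "Too short", 0.4, True),
]
print([clip.path for clip in prepare_manifest(raw)])
```

Noise removal and pronunciation checks operate on the audio itself and are outside the scope of this sketch.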

4. Develop Voice Models

Train or fine-tune neural text-to-speech models using the prepared datasets. This process focuses on achieving natural pronunciation, rhythm, and pacing. Multiple iterations are usually required to improve clarity and reduce artifacts, especially when supporting numerous voices, accents, or emotional variations.

5. Integrate Customization Features

Add controls that allow users to adjust pitch, speed, tone, pauses, and speaking style. Customization makes the platform usable across different industries and content types. Well-designed controls also reduce the need for retraining models for minor voice adjustments.

6. Build APIs and Integrations

Develop secure APIs that allow applications to send text and receive audio outputs seamlessly. Integrations should support web apps, mobile apps, and enterprise systems. Clear documentation and stable endpoints make it easier for developers to adopt and scale the platform efficiently.
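The request-handling logic behind such an endpoint can be kept independent of any web framework. The sketch below validates a JSON-like payload and returns a status code with a response body. The field names, the character limit, and the `synthesize` callable are assumptions for this example.

```python
import base64
from typing import Callable

MAX_CHARS = 5000  # assumed per-request limit


def handle_synthesize(payload: dict,
                      synthesize: Callable[[str, str], bytes]) -> tuple[int, dict]:
    """Validate a request body and return (HTTP status, response body)."""
    text = payload.get("text")
    voice = payload.get("voice", "default")
    if not isinstance(text, str) or not text.strip():
        return 400, {"error": "'text' must be a non-empty string"}
    if len(text) > MAX_CHARS:
        return 413, {"error": f"'text' exceeds {MAX_CHARS} characters"}
    audio = synthesize(text, voice)
    # Base64 keeps binary audio safe inside a JSON response.
    encoded = base64.b64encode(audio).decode("ascii")
    return 200, {"voice": voice, "audio_base64": encoded}


# Stand-in synthesizer that returns two bytes of fake audio.
status, body = handle_synthesize({"text": "hi"}, lambda text, voice: b"\x00\x01")
print(status, body)
```

Keeping validation in a plain function like this makes it easy to unit test and to reuse behind REST, WebSocket, or batch interfaces.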

7. Focus on User Experience (UX)

Design an interface that simplifies voice generation for both technical and non-technical users. Dashboards should make voice selection, customization, and exports intuitive. A well-thought-out UX reduces onboarding friction and improves adoption across teams and use cases.

8. Test and Optimize

Test the AI voice generation platform for audio quality, pronunciation accuracy, latency, and system stability. Real-world testing across languages and devices helps identify weaknesses. Continuous optimization improves model performance, reduces processing delays, and ensures consistent voice output under varying workloads.
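One widely used latency metric is the real-time factor: synthesis time divided by the duration of the audio produced. A value below 1.0 means the system generates speech faster than it plays back. The sketch below assumes the synthesizer returns raw 16-bit mono PCM bytes.

```python
import time
from typing import Callable


def real_time_factor(synthesize: Callable[[str], bytes], text: str,
                     sample_rate: int = 22050,
                     bytes_per_sample: int = 2) -> float:
    """Return synthesis time divided by the duration of the audio produced."""
    start = time.perf_counter()
    audio = synthesize(text)
    elapsed = time.perf_counter() - start
    audio_seconds = len(audio) / (sample_rate * bytes_per_sample)
    if audio_seconds == 0:
        raise ValueError("synthesizer returned no audio")
    return elapsed / audio_seconds


# Stand-in synthesizer: instantly returns one second of silence.
def fake_synthesize(text: str) -> bytes:
    return bytes(22050 * 2)


print(real_time_factor(fake_synthesize, "Hello"))
```

Running this over a fixed set of test sentences before and after each model change gives a simple regression signal for latency.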

9. Deploy and Maintain

Deploy the platform using scalable cloud infrastructure with monitoring in place. Ongoing maintenance includes updating AI algorithms, fixing bugs, and improving voice quality. Regular updates ensure compatibility with new devices, languages, and evolving business requirements over time.

Legal & Ethical Considerations in AI Voice Generator Platform Development

1. Consent

Voice data used for training or cloning must be collected with clear, informed consent from the original speaker. This includes defining how the voice will be used, stored, and distributed. Transparent consent practices help prevent misuse, reduce legal risk, and protect individuals from unauthorized voice replication.

2. Copyright

Generated voices must not imitate or reproduce copyrighted or recognizable voices without permission. Using protected speech patterns, performances, or recordings can lead to legal disputes. Platforms should establish clear licensing policies and safeguards to prevent voice models from infringing on intellectual property rights.

3. Security

AI voice platforms handle sensitive data, including voice samples, generated audio, and user inputs. Strong encryption, access controls, and secure APIs are necessary to prevent data leaks or malicious misuse. Security measures also protect against voice spoofing and unauthorized manipulation of generated audio.
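As one example of a secure API measure, requests can be signed with an HMAC so the server can reject bodies that were altered or sent without the shared secret. This is a minimal sketch using Python's standard library; key distribution, replay protection, and transport encryption are assumed to be handled elsewhere.

```python
import hashlib
import hmac


def sign_request(secret: bytes, body: bytes) -> str:
    """Compute a hex HMAC-SHA256 signature over the request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_request(secret: bytes, body: bytes, signature: str) -> bool:
    """Check a signature using a constant-time comparison."""
    expected = sign_request(secret, body)
    return hmac.compare_digest(expected, signature)


secret = b"demo-secret"  # placeholder; load real keys from a secrets manager
body = b'{"text": "Hello"}'
signature = sign_request(secret, body)

print(verify_request(secret, body, signature))
print(verify_request(secret, b'{"text": "Tampered"}', signature))
```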

How to Choose the Right AI Voice Generator Platform Development Company

1. Proven Speech AI Experience

Choose a company with hands-on experience building text-to-speech models and voice-based systems. Their portfolio should demonstrate real-world deployments, not just prototypes. Practical experience reduces development risk and ensures the team understands challenges like latency, pronunciation accuracy, and voice consistency.

2. Customization and Scalability Capability

The right partner should offer flexible solutions that adapt to your business needs. This includes support for custom voices, multilingual output, and future feature expansion. A scalable architecture ensures the platform can grow without performance issues or major rewrites.

3. Strong Legal and Compliance Awareness

A reliable development company understands the consent, copyright, and data protection requirements associated with voice technologies. They should implement safeguards that prevent misuse and ensure compliance from the start. This reduces legal exposure and builds long-term trust in the platform.

4. Post-Launch Support and Optimization

Development does not end at deployment. Look for a company that provides ongoing monitoring, model improvements, and technical support. Continuous optimization helps maintain voice quality, adapt to new use cases, and keep the platform competitive as user expectations evolve.

Future of AI Voice Generator Platform Development

Ongoing advancements continue to refine realism, adaptability, and integration depth across voice-driven systems and intelligent applications. Here are some trends to watch out for:

1. More Human-Like Emotional Range

Future voice models will handle subtle emotional shifts such as hesitation, warmth, urgency, and empathy. These improvements will allow generated voices to adapt to conversational context rather than sounding emotionally flat. This will be especially important for customer support, storytelling, and interactive voice-driven applications.

2. Personalized Voice Models

Businesses will increasingly create custom voice identities tailored to their brand or product. These models will reflect specific tone, pacing, and personality traits. Personalized voices help brands stand out while maintaining consistency across customer interactions, marketing content, and digital products without relying on external voice talent.

3. Real-Time Multilingual Switching

Advanced platforms will support instant language and accent switching during live conversations. This capability allows seamless communication across diverse audiences without restarting sessions or changing systems. It will improve global customer support, virtual assistants, and voice-based interfaces operating across multiple regions.

4. Voice Cloning with Strong Safeguards

Voice cloning technology will continue to advance while incorporating stricter consent controls and usage limitations. Platforms will include authentication layers, audit trails, and watermarking to prevent misuse. These safeguards will allow responsible voice replication while protecting individuals and brands from impersonation risks.
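An audit trail of this kind can be made tamper-evident by chaining entries with hashes, so that editing any past record invalidates everything after it. The sketch below shows the idea with hypothetical event fields. It does not cover audio watermarking, which works on the signal itself.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(event: dict, prev_hash: str) -> str:
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"action": "clone_voice", "voice": "brand-a", "user": "u1"})
append_entry(audit_log, {"action": "synthesize", "voice": "brand-a", "user": "u2"})
print(verify_log(audit_log))
```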

5. Deeper Integration with AI Agents

AI voice generators will increasingly serve as the spoken interface for autonomous AI agents. These agents will reason, decide, and respond verbally in real time. Tight integration will enable more natural conversations, hands-free workflows, and voice-first applications across business operations and consumer services.

Building Voices That Actually Sound Human

Debut Infotech is a leading enterprise AI development company that builds AI voice generator platforms designed for real-world use. Our team focuses on natural speech quality, low latency, and flexible customization that aligns with business workflows.

From multilingual text-to-speech engines to secure, scalable APIs, our solutions are built to grow with product demand. Strong attention to data ethics, consent handling, and long-term maintainability makes us a reliable partner for businesses serious about voice-driven products.

Conclusion

AI Voice Generator platform development is transforming digital communication and automation by offering scalable, natural-sounding voice solutions. Businesses that invest in this technology gain faster content production, flexible integration, and improved audience engagement. With the market expanding rapidly and future innovations promising even greater realism and customization, AI voice systems will continue to shape how users interact with digital products.

FAQs

A. AI voice platforms are safe when built with licensing clarity, data protection, and misuse controls. Commercial-ready systems include consent-based voice training, watermarking options, access controls, and compliance with data regulations, helping prevent legal issues and unauthorized voice cloning.

A. Companies building audiobooks, virtual assistants, call center tools, e-learning products, accessibility solutions, or media automation platforms often need AI voice generators. Startups also use them to replace manual voiceovers, speed up content production, and maintain consistent voice output across apps and channels.

A. Most AI Voice Generator Platform Development projects take 10-18 weeks. Timelines depend on voice complexity, number of languages, real-time requirements, and integrations. Custom voice training and enterprise-grade security can extend development if not planned early.

A. AI Voice Generator software typically costs between $40,000 and $120,000 to build. Pricing depends on model licensing, custom voice training, infrastructure scale, and feature depth. Advanced emotion control, real-time streaming, and enterprise deployment push budgets higher.

A. Yes. Well-built platforms support multiple languages, regional accents, and voice styles. This requires diverse training datasets and proper model architecture. Language expansion is easier when planned early, since retrofitting multilingual support later often increases costs and development time.