
Private LLM Deployment: A Complete Guide for Secure Enterprise AI

Private LLM deployment has emerged as a practical response to growing enterprise concerns around data control, security, and compliance. As large language models move into core business operations, organizations are shifting from public APIs to controlled environments where sensitive data remains internal. Adoption trends support this shift: over 78% of organizations now use AI in at least one business function, with generative AI reaching widespread enterprise usage, per a McKinsey survey.

In addition, industry reports indicate that 53% of enterprises cite data privacy as the top barrier to scaling AI, which helps explain why private deployments are gaining traction.

This article explains how private LLM deployment works, its benefits and limitations, architectural considerations, deployment models, security frameworks, and real-world use cases across industries.


What is Private LLM Deployment?

Private LLM deployment refers to running large language models on infrastructure owned or tightly controlled by an organization, rather than relying on public APIs from external providers. This setup can be on-premise, in a private cloud, or within a secured hybrid environment.

The core idea is simple: keep model execution, data processing, and system integration inside controlled boundaries. That control changes how data flows, how models are tuned, and how risks are managed.

It is commonly used in sectors where confidentiality, compliance, and operational independence carry weight.

Benefits of Private LLM Deployment

Private LLM deployment offers clear advantages for organizations that need tighter control, stronger security, and better alignment with internal systems. These benefits become more evident as AI adoption scales across enterprise operations.

1. Full control over sensitive data

Private LLM deployment gives organizations direct control over how sensitive data is stored, processed, and accessed throughout its lifecycle. Data never leaves controlled infrastructure, which limits exposure to third parties. This approach strengthens internal governance, supports strict data handling policies, and ensures that confidential business, customer, or operational information remains protected at all times.

2. Flexibility through open-source models

Using open-source models allows teams to modify architectures, fine-tune outputs, and adapt models to domain-specific needs without vendor constraints. This flexibility supports experimentation and continuous improvement. Organizations can align models with internal terminology, workflows, and datasets, resulting in more relevant outputs that match business requirements rather than relying on generalized, external systems.

3. Safe integration with internal systems

Private LLMs can be embedded directly into enterprise environments, enabling secure interaction with internal databases, tools, and applications. This reduces reliance on external APIs and minimizes data exposure risks.

Teams can design tightly controlled workflows where the model operates within predefined boundaries, ensuring sensitive operations remain isolated and aligned with internal security protocols.

4. Easier regulatory compliance

Organizations operating in regulated industries benefit from greater control over how data is processed and stored.

Private AI deployment models make it easier to meet legal and industry requirements related to data residency, auditability, and privacy. Teams can configure systems to align with specific compliance standards while maintaining clear visibility into how data flows across the environment.

5. Reduced long-term cost

Although initial setup requires investment in infrastructure and expertise, private LLM deployment can lower long-term expenses for organizations with consistent, high-volume usage. Eliminating recurring API fees leads to more predictable cost structures.

Over time, optimized infrastructure and efficient resource allocation can further improve cost efficiency, especially for large-scale enterprise applications.
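The cost trade-off described above can be sanity-checked with a simple break-even estimate. The sketch below is illustrative only: the function name and all figures are hypothetical, and it ignores factors such as hardware depreciation and staffing changes.

```python
from typing import Optional


def breakeven_months(
    upfront_cost: float,
    monthly_private_cost: float,
    monthly_api_cost: float,
) -> Optional[float]:
    """Months until cumulative private-deployment cost matches API spend.

    Returns None when running privately costs at least as much per month
    as the API, because the upfront investment is then never recovered.
    """
    monthly_saving = monthly_api_cost - monthly_private_cost
    if monthly_saving <= 0:
        return None
    return upfront_cost / monthly_saving


# Hypothetical figures: 120,000 upfront, 5,000/month to operate,
# versus 15,000/month in API fees.
print(breakeven_months(120_000, 5_000, 15_000))
```

With these made-up numbers the investment is recovered after a year; at low or irregular usage the function returns `None`, which matches the point that private deployment pays off mainly for consistent, high-volume workloads.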

6. Stronger security and smaller attack surface

By limiting external connectivity, private LLM deployments reduce exposure to potential threats and unauthorized access points.

Systems can be configured within secure network boundaries with strict access controls. This reduces reliance on third-party services and enables organizations to implement tailored security measures that align with internal risk management strategies and operational requirements.


Limitations of Private LLM Deployment

Despite its advantages, private LLM deployment introduces operational and financial challenges that organizations must evaluate carefully. These limitations often determine whether a fully private approach is practical or whether a hybrid strategy is more suitable.

1. Ongoing maintenance and administration

Private LLM systems require continuous oversight, including updates, performance tuning, and infrastructure management. Teams must handle software patches, model retraining, and hardware maintenance internally. This creates a steady operational workload that demands skilled personnel, or support from a large language model development company, along with structured processes to ensure the system remains reliable, secure, and aligned with evolving business requirements.

2. Greater operational complexity

Deploying private LLMs involves managing multiple components, including compute resources, orchestration layers, data pipelines, and security systems. Coordinating these elements increases system complexity and requires experienced engineering teams.

Organizations must also ensure smooth integration with existing infrastructure, which adds to the planning, execution, and long-term management effort.

3. High infrastructure and operating costs

Running LLMs at scale requires significant investment in hardware, particularly GPUs, as well as storage and networking resources. Operational costs include energy consumption, cooling, and system upgrades. For smaller organizations, these costs can be difficult to justify, especially when compared to the lower entry cost of using externally hosted AI services.

4. Limited access to top proprietary models

Some of the most advanced proprietary LLMs remain restricted to managed platforms and are not available for private deployment. This limits access to cutting-edge capabilities and ongoing improvements offered by leading providers. Organizations may need to rely on open-source alternatives, which can require additional effort to match performance and maintain competitive output quality.


Choosing the Right Architecture for Your Private LLM Deployment

Selecting the right architecture determines how effectively a private LLM deployment performs under real-world conditions. Key decisions around infrastructure, model design, and use cases directly influence scalability, cost, and output quality.

1. Compute availability

Compute capacity shapes what is feasible from day one. Evaluate GPU types, memory bandwidth, storage speed, and network throughput, then map them to expected workloads. Consider peak versus average demand, redundancy for failures, and future expansion.

Capacity planning should include inference scaling, batch processing needs, and cost controls to avoid underutilization or performance bottlenecks.
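As a rough illustration of the capacity planning described above, the following sketch estimates how many model replicas are needed to serve peak traffic with some headroom and a spare for failures. The function name, the 80% utilization target, and the throughput figures are assumptions for illustration; real per-replica throughput has to be measured under load.

```python
import math


def replicas_needed(
    peak_rps: float,
    per_replica_rps: float,
    target_utilization: float = 0.8,
    spare_replicas: int = 1,
) -> int:
    """Estimate replica count for peak load.

    Each replica is planned to run at `target_utilization` of its measured
    throughput, and `spare_replicas` are added for redundancy.
    """
    if per_replica_rps <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("throughput must be positive and utilization in (0, 1]")
    usable_rps = per_replica_rps * target_utilization
    return math.ceil(peak_rps / usable_rps) + spare_replicas


# Hypothetical workload: 45 requests/s at peak, 10 requests/s per replica.
print(replicas_needed(peak_rps=45, per_replica_rps=10))
```

Running the same calculation for average rather than peak demand shows how much capacity would sit idle off-peak, which is the underutilization risk mentioned above.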

2. Target use cases

Architecture decisions should reflect how the model will be used in practice. Customer support, document analysis, and code assistance each require different response patterns, latency thresholds, and data access levels.

Define input types, expected outputs, and integration points early. This ensures the system design supports real operational needs rather than forcing adjustments after deployment.

3. Model size vs latency tolerance

Larger models tend to deliver stronger reasoning and language quality but require more compute and respond more slowly. Smaller models offer faster inference and lower costs but may reduce output accuracy. Match the model size to the acceptable latency for each use case, and consider techniques such as quantization or distillation to balance performance and efficiency.
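To make the effect of quantization concrete, the sketch below estimates the memory needed just to hold model weights at different numeric precisions. The 7-billion-parameter model is a hypothetical example, and the estimate deliberately excludes the KV cache, activations, and runtime overhead, which add to the real footprint.

```python
# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory (in GB, 1 GB = 1e9 bytes) for model weights only."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical 7-billion-parameter model.
for precision in BYTES_PER_PARAM:
    print(precision, weight_memory_gb(7e9, precision), "GB")
```

Halving the bytes per parameter halves the weight footprint, which is why quantization can let a model fit on smaller or fewer GPUs, usually at some cost in output quality that should be measured per use case.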

4. Training from scratch vs fine-tuning a pre-trained model

Training from scratch demands extensive datasets, long training cycles, and substantial compute investment. Fine-tuning a pre-trained model is more practical for most organizations, allowing faster deployment and lower cost. It also enables domain adaptation using internal data, improving relevance without rebuilding foundational capabilities that are already well established in existing models.

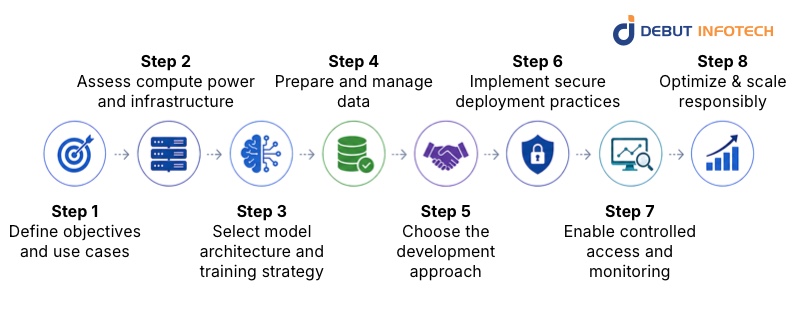

How to Build and Deploy a Private LLM: Step-by-Step Guide

Building a private LLM deployment requires a structured approach that aligns technical execution with business goals. Each step plays a role in ensuring the system is secure, scalable, and capable of delivering consistent results.

Step 1. Define objectives and use cases

Start with a clear definition of business goals and measurable outcomes. Identify the specific tasks the model will handle, the expected accuracy levels, and the user interactions. Outline data sources and constraints. This step ensures alignment between technical implementation and business value, preventing unnecessary complexity and helping teams focus on use cases that deliver meaningful impact.

Step 2. Assess compute power and infrastructure

Review existing infrastructure to determine whether it can support training and inference workloads. Evaluate GPU availability, storage capacity, and network performance. Consider scalability options and redundancy for reliability. This assessment helps avoid performance gaps and ensures the environment can handle both current demands and future growth without major redesign.

Step 3. Select model architecture and training strategy

Choose a model architecture that aligns with task complexity, dataset size, and performance expectations. Decide whether to fine-tune an existing model or adapt it using parameter-efficient methods. Factor in training time, cost, and deployment constraints. A well-matched architecture improves output quality while keeping resource usage within manageable limits.
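To show why parameter-efficient methods reduce training cost, the sketch below uses LoRA (low-rank adaptation) as one example. The article does not name a specific method, so LoRA is an assumption here. It replaces the update to a weight matrix of shape d_out x d_in with two small matrices of rank r, so only r * (d_in + d_out) parameters are trained for that matrix. The layer dimensions are hypothetical.

```python
def full_finetune_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when updating a dense weight matrix directly."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a rank-`rank` LoRA adapter on the same matrix.

    The adapter consists of two matrices of shapes (rank x d_in) and
    (d_out x rank).
    """
    return rank * (d_in + d_out)


# Hypothetical 4096 x 4096 projection layer with a rank-8 adapter.
full = full_finetune_params(4096, 4096)
lora = lora_trainable_params(4096, 4096, rank=8)
print(full, lora, lora / full)
```

For this single layer the adapter trains well under 1% of the parameters that full fine-tuning would, which is where the savings in memory and training time come from.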

Step 4. Prepare and manage data

Data preparation directly influences model performance. Clean, structure, and label datasets to ensure consistency and accuracy. Remove sensitive or irrelevant information where necessary. Establish data governance practices, including versioning and access control. High-quality data reduces errors, improves reliability, and ensures the model reflects the organization’s knowledge and operational context.
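As a minimal sketch of removing sensitive information during data preparation, the example below masks email addresses and phone-like numbers with regular expressions. The patterns and placeholder tokens are illustrative assumptions and will miss many real-world formats; production pipelines typically rely on dedicated PII detection tooling and human review.

```python
import re

# Deliberately simple patterns; they are not exhaustive.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


sample = "Contact jane.doe@example.com or +1 415-555-0100 today"
print(redact(sample))
```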

Step 5. Choose the development approach

Decide whether to build internally or collaborate with specialized partners or AI implementation services.

In-house development offers control but requires skilled teams and longer timelines. External support can accelerate delivery and reduce technical risk.

The choice should reflect available expertise, budget constraints, and the urgency of deployment, ensuring a balanced approach to execution.

Step 6. Implement secure deployment practices

Security must be integrated across infrastructure, application layers, and data pipelines. Use encryption for data in transit and at rest, enforce network isolation, and apply strict access controls. Regular security audits and vulnerability assessments strengthen resilience.

A secure deployment framework protects sensitive data and supports compliance with internal and external requirements.

Step 7. Enable controlled access and monitoring

Access to the model should be governed through role-based permissions and authentication mechanisms. Implement logging systems to track usage, detect anomalies, and maintain accountability.

Monitoring tools should provide visibility into the performance, errors, and overall health of the system. This ensures the model operates within defined limits while allowing teams to respond quickly to issues.

Step 8. Optimize and scale responsibly

Post-deployment, focus on improving efficiency and maintaining performance under increasing demand. Apply optimization techniques such as model compression and caching. Scale infrastructure based on usage patterns rather than assumptions.

Continuous evaluation helps balance cost, speed, and accuracy, ensuring the system remains effective as requirements evolve over time.
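Caching, one of the optimization techniques mentioned above, can be sketched as a small least-recently-used cache in front of the model. The `PromptCache` class and the `generate` callable are hypothetical names; the example assumes exact-match reuse is acceptable, which generally holds only for repeatable queries where the same prompt should return the same answer.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


class PromptCache:
    """LRU cache keyed on a hash of the whitespace-normalized prompt."""

    def __init__(self, generate: Callable[[str], str], max_entries: int = 1024):
        self._generate = generate  # stand-in for the real model call
        self._max_entries = max_entries
        self._store = OrderedDict()

    @staticmethod
    def _key(prompt: str) -> str:
        normalized = " ".join(prompt.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        result = self._generate(prompt)
        self._store[key] = result
        if len(self._store) > self._max_entries:
            self._store.popitem(last=False)  # evict least recently used
        return result


# Usage with a placeholder in place of a real model.
cache = PromptCache(lambda p: f"answer to: {p}", max_entries=2)
print(cache.get("What is our refund policy?"))
print(cache.get("What is   our refund policy?"))  # served from cache
```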

What are the Different Private LLM Deployment Models for Enterprise Systems?

Enterprise deployment models vary based on control, scalability, and cost considerations. Choosing the right model depends on how organizations balance security requirements with operational flexibility and infrastructure capabilities.

1. On-Premise Deployment

On-premise deployment places the entire LLM stack within an organization’s physical infrastructure, offering maximum control over data, systems, and access. It is commonly adopted by sectors that handle highly sensitive information, where external exposure is not acceptable. This model supports strict governance and predictable environments but demands significant investment in hardware, expertise, and long-term infrastructure planning to maintain performance and reliability.

Pros

- Complete data ownership and control

- Strong security with isolated environments

- Full customization of infrastructure and workflows

- No reliance on external vendors

- Easier alignment with strict compliance requirements

Cons

- High upfront capital expenditure

- Requires skilled internal teams for maintenance

- Limited scalability without additional hardware investment

- Longer setup and deployment timelines

2. Private Cloud Deployment

Private cloud deployment runs LLMs on dedicated cloud infrastructure with restricted access, combining control with scalability. It allows organizations to leverage cloud flexibility while maintaining a higher level of privacy than public cloud options. This model supports faster deployment and easier resource scaling, making it suitable for businesses that need performance without managing physical infrastructure directly.

Pros

- Scalable compute and storage resources

- Reduced hardware management burden

- Faster deployment compared to on-premise setups

- Built-in redundancy and disaster recovery options

- Flexible resource allocation based on demand

Cons

- Ongoing operational and subscription costs

- Partial dependency on cloud providers

- Less granular control compared to on-premise

- Data residency concerns in certain regions

3. Hybrid Deployment

Hybrid deployment combines on-premise infrastructure with private cloud resources, allowing organizations to balance control and scalability. Sensitive workloads can remain on-premise, while less critical processes run in the cloud. This model supports flexible workload distribution and cost optimization but requires careful planning to manage integration, data flow, and consistent governance across environments.

Pros

- Balanced control and scalability

- Optimized cost by distributing workloads strategically

- Flexibility to handle varying performance demands

- Improved resilience through multi-environment setup

- Ability to keep sensitive data on-premise

Cons

- Increased architectural complexity

- Integration and data synchronization challenges

- Requires strong governance across environments

- Higher operational coordination effort
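The workload split in a hybrid deployment can be expressed as a simple routing rule. The sketch below is a hypothetical illustration: the sensitivity levels, target names, and `route` function are assumptions, and a real system would derive sensitivity from data classification policies rather than a field set by the caller.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Request:
    prompt: str
    sensitivity: Sensitivity


ON_PREM = "on-prem"
PRIVATE_CLOUD = "private-cloud"


def route(request: Request, cloud_max: Sensitivity = Sensitivity.INTERNAL) -> str:
    """Keep anything above `cloud_max` sensitivity on-premise."""
    if request.sensitivity.value > cloud_max.value:
        return ON_PREM
    return PRIVATE_CLOUD


print(route(Request("Summarize this patient record", Sensitivity.RESTRICTED)))
print(route(Request("Draft a product FAQ", Sensitivity.PUBLIC)))
```

Keeping the rule in one place makes it easier to apply the same governance policy across both environments.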

Deployment Models Comparison Table

| Feature | On-Premise Deployment | Private Cloud Deployment | Hybrid Deployment |
| --- | --- | --- | --- |
| Control Level | Full control over infrastructure and data | Moderate control with provider-managed layers | High control for sensitive workloads, shared elsewhere |
| Scalability | Limited, requires hardware expansion | Highly scalable with on-demand resources | Scalable through the cloud while retaining local capacity |
| Cost Structure | High upfront, lower recurring costs | Lower upfront, continuous operational expenses | Mixed cost model with balanced spending |
| Security | Maximum security with isolated systems | Strong, but shared responsibility model applies | Strong, requires coordinated security policies |
| Compliance Alignment | Easier for strict regulatory environments | Depends on provider compliance certifications | Flexible compliance handling across environments |
| Deployment Speed | Slower due to hardware setup | Faster with pre-configured cloud environments | Moderate, depends on integration complexity |
| Integration Complexity | Lower within internal systems | Moderate with external integrations | Higher due to cross-environment coordination |
| Performance Consistency | Highly predictable and stable | Varies based on cloud resource allocation | Balanced, with critical workloads stabilized locally |
| Maintenance Effort | High, requires dedicated internal teams | Moderate, shared with provider | High, due to dual environment management |
| Customization | Extensive customization capabilities | Limited to provider-supported configurations | High customization for on-prem components |
| Vendor Dependency | None | High dependency on the cloud provider | Partial dependency depending on workload distribution |
| Use Case Fit | Best for highly sensitive and regulated data | Ideal for scalable, flexible enterprise needs | Suitable for mixed sensitivity and dynamic workloads |

Industry Use Cases of Private LLM Deployment

Private LLM deployment is already delivering value across multiple industries by enabling secure, domain-specific AI applications. Each sector applies these systems differently based on its operational needs, data sensitivity, and regulatory environment.

1. Customer Service

Private LLMs improve customer service by powering secure, context-aware support systems trained on internal knowledge bases. These models handle queries, automate ticket resolution, and assist agents with accurate responses. Since data remains within controlled systems, organizations can process customer interactions without exposing sensitive information, ensuring both efficiency and confidentiality in high-volume support environments.

2. Legal

In legal environments, private LLMs support contract analysis, document review, and legal research while maintaining strict confidentiality. They can process large volumes of case files and identify relevant clauses or risks quickly. Deployment within a controlled infrastructure ensures privileged information remains protected, making it suitable for law firms and corporate legal teams handling sensitive documentation.

3. Healthcare

Healthcare organizations use private LLMs for clinical documentation, patient record summarization, and decision support. These systems operate within secure environments to protect patient data and meet regulatory requirements. By integrating with hospital systems, private LLMs enable medical professionals to obtain faster insights while maintaining the strict privacy standards essential for handling sensitive health information.

4. Pharmaceuticals

Private LLMs assist pharmaceutical companies in research analysis, drug discovery support, and regulatory documentation processing. They can summarize scientific literature, extract insights from clinical trial data, and streamline reporting workflows. Keeping these operations within private infrastructure ensures proprietary research and sensitive data remain secure while improving the speed and accuracy of research processes.

5. Enterprise Knowledge Management

Organizations use private LLMs to organize, index, and retrieve internal knowledge across departments. These systems enable employees to access relevant documents, policies, and insights quickly through natural language queries. Operating within controlled environments ensures that confidential business information is not exposed externally, while improving productivity and decision-making across teams.

6. Manufacturing Industry

In manufacturing, private LLMs support predictive maintenance, operational analysis, and process documentation. They analyze machine logs, production data, and technical manuals to provide actionable insights. Deployment within secure systems ensures sensitive operational data remains protected, while enabling teams to improve efficiency, reduce downtime, and maintain consistent production quality across facilities.

| Industry | Key Applications | Unique Requirements | Example Tasks | Compliance Considerations | Expected Outcome | Operational Challenges |
| --- | --- | --- | --- | --- | --- | --- |
| Customer Service | Chatbots, ticket automation, and agent assistance | Real-time processing, high availability, accuracy | Ticket resolution, response suggestions, and FAQ automation | Data privacy, customer data protection | Faster response times, reduced workload, better CX | Handling peak traffic, maintaining response quality |
| Legal | Document review, contract analysis, legal research | Confidentiality, precision, and auditability | Clause extraction, risk identification, case summarization | Client confidentiality, legal data handling | Reduced review time, improved accuracy, and risk detection | Managing unstructured data, ensuring interpretability |
| Healthcare | Clinical support, record summarization | Strict privacy, regulatory compliance, and accuracy | Patient summaries, medical transcription, and decision support | HIPAA-like standards, patient data privacy | Improved care efficiency, reduced admin workload | Data standardization, minimizing diagnostic errors |
| Pharmaceuticals | Research analysis, drug discovery support | Data integrity, traceability, regulatory alignment | Literature review, trial data summarization, and reporting | Regulatory approvals, data traceability | Faster research cycles, improved documentation accuracy | Managing large datasets, ensuring model reliability |
| Enterprise Knowledge Mgmt | Internal search, knowledge retrieval, documentation | Data organization, access control, relevance | Document indexing, policy retrieval, and knowledge queries | Internal governance policies | Improved productivity, faster decision-making | Keeping data updated, avoiding outdated responses |
| Manufacturing | Predictive maintenance, process optimization | Operational accuracy, real-time data processing | Equipment diagnostics, log analysis, workflow optimization | Operational compliance standards | Reduced downtime, improved efficiency, and cost savings | Integrating legacy systems, handling real-time inputs |


Security and Governance Frameworks for Deploying Private LLMs

1. Access controls

Access controls define who can interact with the model, data, and supporting systems. Role-based access ensures users only perform actions relevant to their responsibilities. Strong authentication mechanisms, such as multi-factor authentication, add an additional layer of protection. Properly configured access policies reduce the risk of unauthorized usage and help maintain accountability across the organization.
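A role-based access check can be sketched in a few lines. The roles, actions, and function names below are hypothetical examples; in practice the role mapping would come from an identity provider or policy service, and authentication (including multi-factor) would happen before this check.

```python
# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "viewer": frozenset({"query"}),
    "analyst": frozenset({"query", "view_logs"}),
    "admin": frozenset({"query", "view_logs", "update_model"}),
}


def is_allowed(role: str, action: str) -> bool:
    """Return True only if the role explicitly grants the action."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


def authorize(role: str, action: str) -> None:
    """Raise PermissionError when the role may not perform the action."""
    if not is_allowed(role, action):
        raise PermissionError(f"role {role!r} may not perform {action!r}")


authorize("analyst", "view_logs")          # passes silently
print(is_allowed("viewer", "update_model"))  # denied
```

Unknown roles map to an empty permission set, so the check denies by default.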

2. Audit logs

Audit logs provide a detailed record of system activity, including user interactions, data access, and configuration changes. These logs support transparency and enable organizations to trace actions when investigating issues or verifying compliance. Well-maintained logging systems help identify misuse, detect anomalies, and provide evidence for internal reviews or regulatory requirements.
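One possible way to make audit logs easier to trust during investigations is to chain entries by hash, so that any later modification of an earlier entry becomes detectable. This technique is not prescribed by the article; the sketch below, with hypothetical field and function names, only illustrates the idea using an in-memory list.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, timestamp: str) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "timestamp": timestamp, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash and check that the chain links are intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "alice", "query_model", "2024-01-01T10:00:00Z")
append_entry(log, "bob", "update_config", "2024-01-01T10:05:00Z")
print(verify(log))
```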

3. Encryption standards

Encryption protects data both at rest and in transit, ensuring that sensitive information remains secure even if intercepted. Organizations should implement industry-standard encryption protocols and regularly update them to address emerging threats. Proper key management practices are also critical, ensuring that only authorized systems and users can decrypt and access protected data.
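For the in-transit half of this requirement, the sketch below configures a server-side TLS context with Python's standard `ssl` module, enforcing TLS 1.2 or newer and optionally requiring client certificates. The function name and file-path parameters are hypothetical, and encryption at rest and key management are separate concerns not covered here.

```python
import ssl
from typing import Optional


def make_server_tls_context(
    certfile: Optional[str] = None,
    keyfile: Optional[str] = None,
    client_ca: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a server-side TLS context that rejects versions older than 1.2."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if certfile:
        # Server certificate and private key (paths supplied by the caller).
        ctx.load_cert_chain(certfile, keyfile)
    if client_ca:
        # Require clients to present a certificate signed by this CA.
        ctx.load_verify_locations(cafile=client_ca)
        ctx.verify_mode = ssl.CERT_REQUIRED
    return ctx


ctx = make_server_tls_context()
print(ctx.minimum_version)
```

Passing `client_ca` turns this into mutual TLS, one way to ensure that only authorized internal services can reach the model endpoint.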

4. Monitoring tools

Monitoring tools provide continuous visibility into system performance, usage patterns, and potential security threats. Real-time alerts help teams respond quickly to unusual activity or failures. These tools also support performance optimization by identifying bottlenecks. Consistent monitoring ensures the LLM operates reliably while remaining compliant with internal policies and security standards.
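As a minimal sketch of the alerting idea, the example below tracks recent request latencies in a rolling window and flags when the 95th percentile exceeds a threshold. The class name, window size, and threshold are assumptions for illustration; production systems would normally use a dedicated metrics and alerting stack.

```python
from collections import deque
from typing import Optional


class LatencyMonitor:
    """Rolling-window p95 latency check (nearest-rank percentile)."""

    def __init__(self, window: int = 100, p95_threshold_ms: float = 2000.0):
        self._samples = deque(maxlen=window)  # oldest samples drop off automatically
        self._threshold_ms = p95_threshold_ms

    def record(self, latency_ms: float) -> None:
        self._samples.append(latency_ms)

    def p95(self) -> Optional[float]:
        if not self._samples:
            return None
        ordered = sorted(self._samples)
        # Nearest-rank: ceil(0.95 * n), computed with integers to avoid float error.
        rank = (95 * len(ordered) + 99) // 100
        return ordered[rank - 1]

    def should_alert(self) -> bool:
        value = self.p95()
        return value is not None and value > self._threshold_ms


monitor = LatencyMonitor(window=100, p95_threshold_ms=1500.0)
for latency in (300.0, 450.0, 2200.0, 380.0):
    monitor.record(latency)
print(monitor.p95(), monitor.should_alert())
```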

Conclusion

Private LLM deployment gives organizations a controlled way to use advanced AI without exposing sensitive data or losing oversight of critical systems. It supports stronger security, tailored integrations, and long-term efficiency when aligned with the right architecture and governance. The trade-offs exist, but with proper planning, businesses can build reliable, scalable solutions that fit their operational and compliance needs.

Debut Infotech helps organizations navigate private LLM deployment with practical, end-to-end generative AI development services. Our team designs secure architectures, handles model customization, and ensures smooth integration with enterprise systems. This enables businesses to adopt AI with confidence while maintaining full control over data and performance.

FAQs

A. Enterprises should go private when data sensitivity is high, compliance rules are strict, or latency needs to stay predictable. It also makes sense when API usage costs start piling up, or customization gets deep. If control, privacy, and long-term cost stability matter, private deployment is usually the smarter move.

A. On-premise means everything runs inside your own hardware. Full control, higher setup cost. VPC keeps things in the cloud while isolating them within your private network. Hybrid mixes both. You might train or store sensitive data on-prem, then use cloud power for scaling. Each option balances control, cost, and flexibility differently.

A. Private deployments keep your data within controlled environments, which cuts exposure to third parties. That helps with regulations such as GDPR and HIPAA. You decide access rules, logging, and storage policies. It also reduces risks associated with shared infrastructure, enabling tighter control over audits, encryption, and internal governance.

A. They rely on containerization, orchestration tools such as Kubernetes, and robust MLOps pipelines. Models are versioned, monitored, and continuously updated. Teams track performance, latency, and drift. Scaling happens through distributed systems and GPU clusters, while automation handles deployment, rollback, and resource allocation without constant manual work.

A. Private LLM deployment comes with upfront costs. Think GPUs, infrastructure, setup, and engineering time. It can run into the tens or hundreds of thousands, depending on the scale. Over time, though, it often evens out or even drops below API costs, especially with heavy usage and predictable workloads.

A. Private LLM deployment keeps sensitive data locked inside your own environment. No third-party exposure, no shared infrastructure worries. You control access, encryption, and logging end-to-end. That makes audits easier and reduces risks associated with data leaks, model misuse, or external dependencies.

A. Go for private LLM deployment when data privacy is non-negotiable, compliance rules are tight, or API costs are starting to sting. It also fits cases where you need deep customization or low-latency responses. If control and predictability matter more than convenience, this route makes sense.

A. It depends on complexity, but most setups take anywhere from a few weeks to a few months. A basic deployment with pre-trained models can move fast. Custom models, integrations, and security layers stretch the timeline. Bigger teams with solid infrastructure usually get there quicker.
