
Small Language Models: Why Compact AI Delivers Fast, Efficient Intelligence

If you have been using AI tools recently, you may have noticed something interesting: bigger is not always better.

That’s where small language models come in.

Small language models (sometimes referred to as SLMs) are compact AI models designed to understand and generate human language without the size or expense of large language models. They have significantly fewer parameters, cost less to maintain, and are much easier to deploy, particularly on edge devices, in mobile apps, or on in-house systems where performance and information security matter.

At a glance, Small Language Models offer:

- Lower latency and faster responses

- Smaller model sizes (millions to low billions of parameters)

- Lower compute and memory requirements

- Reliable performance for focused, task-specific use cases

In simple terms, small language models trade scale for efficiency, giving you more control, better performance in constrained environments, and fewer infrastructure headaches.

How Small Language Models Actually Work

At a high level, small language models are built on the same foundation as most contemporary AI models. They use transformer architectures to identify patterns in language and generate appropriate responses.

The notable difference lies not in how they operate but in how much they are designed to carry.

Rather than aiming for broad coverage, small language models are deliberately streamlined to perform particular tasks.

What Makes Them “Small” but Efficient

Small language models are optimized in a few important ways:

- Parameter pruning removes weights that contribute little to the output, keeping the model lightweight

- Knowledge distillation trains a smaller model to reproduce the behavior of a larger, more complex one

- Quantization stores weights at lower numerical precision, cutting memory use with typically only a small loss in accuracy

- Task-specific fine-tuning sharpens performance for a particular use case

Combined, these methods keep the model fast, efficient, and simpler to deploy.
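To make two of these techniques concrete, here is a minimal sketch of magnitude pruning and symmetric int8 quantization on a toy list of weights. The function names and the sample weights are illustrative assumptions, not taken from any particular library; production toolchains operate on tensors and typically calibrate per layer or per channel.

```python
# Illustrative only: magnitude pruning and symmetric int8 quantization
# applied to a toy weight list. All names and values here are assumptions.

def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * fraction)
    if k == 0:
        return list(weights)
    # Indices of the k smallest-magnitude weights.
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


weights = [0.82, -0.03, 0.41, 0.007, -0.66, 0.12, -0.95, 0.05]
pruned = prune_by_magnitude(weights, fraction=0.25)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(pruned, restored))

print("pruned:   ", pruned)
print("quantized:", q)
print("max round-trip error:", max_error)
```

Each weight now fits in one byte instead of four (for 32-bit floats), and the round-trip error stays within half of the quantization step. That is the basic trade the bullet on quantization describes: a large memory saving for a small, bounded loss of precision.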

Why Smaller Can Be Smarter (For the Right Tasks)

Small language models do not attempt to learn everything, and that is why they are so effective.

They are built for specific, repetitive tasks that call for fast responses, such as:

- Identifying user intent

- Understanding commands inside apps or interfaces

- Answering questions within a defined domain

- Summarizing forms, messages, or internal documents

Because they’re focused, these models often deliver:

- Faster response times

- More predictable outputs

- Better control in production environments

Practical tip: A simple visual comparing how small vs large models process the same request can help readers instantly grasp why efficiency, not size, is the real advantage here.


Small Language Models vs. Large Language Models: What’s the Difference?

When comparing Small Language Models to Large Language Models, people usually pose one question:

“Which one is better?”

In reality, the better question is:

“Which one suits the job I am trying to do?”

How Small Language Models Think

Small Language Models are built to stay lean and focused.

They use far fewer parameters, usually in the millions to low billions, which makes them:

- Faster to run

- Cheaper to maintain

- Easier to deploy

Their size makes them well suited to:

- Mobile apps

- Edge devices

- Private or on-premise systems

This also gives teams greater control over data security, since data can be processed locally rather than sent to a centralized cloud.

How Large Language Models Differ

Large Language Models are designed for scale and flexibility.

They rely on huge numbers of parameters to:

- Handle long, open-ended conversations

- Perform complex reasoning

- Generate creative or exploratory responses

That power comes with trade-offs:

- Higher infrastructure costs

- Slower response times

- Greater reliance on cloud environments

Side-by-Side Comparison

| Feature | Small Language Models | Large Language Models |
| --- | --- | --- |
| Model size | Millions to low billions of parameters | Tens to hundreds of billions of parameters |
| Cost | Lower and predictable | High and variable |
| Speed | Very fast | Slower |
| Deployment | On-device, edge, private cloud | Centralized cloud |
| Privacy | Stronger local control | Lower by default |
| Knowledge scope | Narrow and task-focused | Broad and general |
| Best use | Repetitive, well-defined tasks | Open-ended reasoning |
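The model-size row translates fairly directly into hardware requirements. As a rough sketch, the memory needed just to hold a model's weights is the parameter count multiplied by the bytes used per parameter. The helper name and the example sizes below are assumptions chosen for illustration, and the estimate ignores activations, caches, and runtime overhead, so real usage is higher.

```python
# Back-of-the-envelope estimate of the memory needed to store model weights.
# Ignores activations, caches, and runtime overhead.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical sizes: one small model and one large model.
for name, params in [("3B model", 3e9), ("70B model", 70e9)]:
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(params, precision)
        print(f"{name} @ {precision}: {gb:.1f} GB")
```

Under these assumptions, a 3-billion-parameter model quantized to 4 bits needs about 1.5 GB for its weights, which is within reach of a phone or laptop, while a 70-billion-parameter model at 16-bit precision needs about 140 GB, which generally means multiple server-class accelerators.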

The Key Takeaway

Small language models are built for focus, speed, and control.

They are not trying to do everything; they are trying to do the right thing in the most efficient way.

When you need a specific type of intelligence rather than general reasoning, smaller models can be very effective in the real world.

Real-World Examples of Small Language Models

When people discuss small language models, they usually want practical examples, not just definitions. The point is not to compare brands but to show how these models are applied in real products and systems.

The following well-known examples illustrate why smaller models are gaining ground.

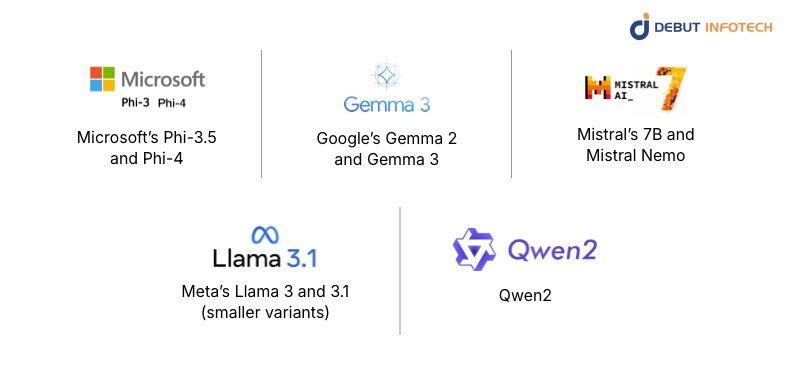

Microsoft’s Phi-3.5 and Phi-4

These models show that solid reasoning does not necessarily require huge scale. They are much smaller than the largest AI models, yet they are optimized for structured tasks such as problem-solving, educational tools, and controlled AI workflows.

What this shows:

Small language models can reason reliably when they are trained on quality data and given clear tasks.

Google’s Gemma 2 and Gemma 3

Gemma models are efficient and accessible, giving developers more freedom to experiment, fine-tune, and deploy without the heavy infrastructure that larger models need.

What this shows:

Small language models tend to be simpler to adapt and deploy in the real world.

Mistral 7B and Mistral NeMo

These models are designed for quick inference and low latency, which makes them a good fit for chat interfaces, automation tools, and systems that need quick, predictable answers.

What this shows:

Performance is not merely about size, but about responsiveness and consistency.

Meta’s Llama 3 and 3.1 (smaller variants)

The smaller Llama models focus on flexibility, letting teams customize them for domain-specific solutions such as internal applications, customer support, or content classification.

What this shows:

Small language models work best when given a specific, well-bounded purpose.

Qwen2

Qwen2 shows that smaller models can be adapted to multilingual use cases, which is useful in global products that need language flexibility without enormous compute expenses.

What this shows:

Small language models can scale across languages even when they do not scale in size.


Real-World Use Cases: How Small Language Models Deliver Practical Value

Small Language Models are not just scaled-down versions of large AI models. They are purpose-built tools that perform a given task faster, more cheaply, and more efficiently. Because they are lightweight and highly specialized, they are useful in real-time, low-latency situations where fast decision-making and local processing matter.

On a narrow task, a well-tuned small language model can often match or outperform a larger general-purpose model precisely because it is specialized in that one job.

Here’s how they’re being used in the real world today:

1. Domain-Specific SLMs in Healthcare

Precision and privacy are essential in healthcare, and Small Language Models are proving especially useful in this area.

Domain-trained SLMs can be fine-tuned on medical terminology, clinical literature, and anonymized patient records to assist with tasks such as:

- Summarizing electronic health records (EHRs) concisely

- Supporting clinicians with diagnostic reference information

- Summarizing medical research in an understandable format

Because these models can run in secure, private environments, they help healthcare organizations improve efficiency while maintaining compliance and patient confidentiality.

2. Market Trend Analysis & Smarter Product Decisions

By analyzing sales data, customer behavior, and market data, Small Language Models help companies:

- Spot emerging trends

- Optimize marketing campaigns

- Create products that are more customer-oriented

Rather than relying on intuition alone, teams get data-backed insights at a small fraction of the compute cost of large AI models.

3. Customer Service Automation That Feels Human

Small Language Models are making customer support smarter by answering frequent questions, resolving routine problems, and providing step-by-step solutions without making customers wait.

For example, a B2B SaaS company can roll out a small model trained on previous support tickets, internal documentation, and troubleshooting processes.

This allows the AI to:

- Respond quickly to frequently asked customer questions

- Give accurate, policy-compliant answers

- Escalate complex problems to human agents where necessary

The impact? Faster response times, happier customers, and support teams freed up to handle higher-value tasks.
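The answer-or-escalate pattern described above can be sketched in a few lines. In this example `classify_intent` is a keyword-based stand-in for a fine-tuned small model, and the intents, canned answers, and confidence threshold are all made-up assumptions for illustration.

```python
# Sketch of "answer routine questions, escalate the rest".
# classify_intent is a hypothetical stand-in for a fine-tuned small model.

CANNED_ANSWERS = {
    "reset_password": "Use the 'Forgot password' link on the sign-in page.",
    "billing_cycle": "Invoices are issued on the first day of each month.",
}

KEYWORDS = {
    "reset_password": {"password", "reset", "login"},
    "billing_cycle": {"invoice", "billing", "charged"},
}


def classify_intent(message):
    """Return (intent, confidence); confidence is the share of keywords matched."""
    words = set(message.lower().replace("?", " ").replace(".", " ").split())
    best_intent, best_score = None, 0.0
    for intent, keywords in KEYWORDS.items():
        score = len(words & keywords) / len(keywords)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent, best_score


def handle_ticket(message, threshold=0.5):
    """Answer automatically when confident, otherwise hand off to a human."""
    intent, confidence = classify_intent(message)
    if intent is None or confidence < threshold:
        return {"route": "human", "reply": None}
    return {"route": "bot", "reply": CANNED_ANSWERS[intent]}


print(handle_ticket("How do I reset my password?"))
print(handle_ticket("Our SSO integration fails after the latest update."))
```

The first message matches a known intent with enough confidence and gets an automatic reply; the second matches nothing and is routed to a human agent. In a real deployment the threshold would be tuned on historical tickets rather than picked by hand.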

4. Real-Time Language Translation

Small Language Models are also being used to power fast, on-device translation, enabling smoother communication in international customer support, travel, and business environments, even without constant internet access.

5. Sentiment Analysis & Customer Feedback Insights

Businesses use Small Language Models to analyze customer reviews, social media comments, and survey responses in real time. This helps teams:

- Understand customer sentiment

- Identify problems at an early stage

- Adjust marketing or product strategy based on actual responses

Why This Matters

These examples show a clear shift: small language models aren’t trying to replace large models; they’re reshaping how AI is applied in focused, real-world scenarios. When the goal is speed, privacy, cost efficiency, and reliability, smaller models often deliver the smarter solution.

When Does a Small Language Model Make Sense?

Small language models work best when the problem you’re solving is clear and predictable.

Instead of trying to “do everything,” an SLM focuses on doing one specific job really well. That makes it an excellent fit for a variety of real-world applications, particularly where speed, privacy, and cost containment matter.

You should consider a Small Language Model if:

- Your task is well-defined

The role of the AI is clear, e.g. classification, summarization or structured responses.

- Offline or edge deployment is required

The model needs to run on devices, private servers, or restricted environments.

- Data privacy is critical

Sensitive information can’t leave your system or be sent to third-party APIs.

- Cost predictability matters

You want stable infrastructure costs without surprise usage spikes.

That is why many teams working with AI development services choose small language models: they deliver good performance without the overhead of large general-purpose models.

When a Small Language Model May Not Be the Right Fit

Small language models have limits, and that’s not a bad thing; it’s just about choosing the right tool.

You may want to look beyond an SLM if:

- Your application requires deep reasoning.

Complex problem-solving or multi-step logic often needs larger models.

- The task is very open-ended or creative.

SLMs tend to be weaker at brainstorming, storytelling, and wide-ranging general conversation.
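The two checklists above amount to a simple decision rule, which can be written down as a small helper. This is only a sketch of the reasoning in this article: the `Requirements` fields and the returned strings are hypothetical names, and a real decision would also weigh budget, accuracy targets, and team experience.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical project requirements mirroring the checklists above."""
    well_defined_task: bool
    needs_offline_or_edge: bool
    data_must_stay_local: bool
    needs_predictable_cost: bool
    needs_deep_reasoning: bool
    open_ended_or_creative: bool


def suggest_model_size(req):
    """Check the reasons to look beyond an SLM first, then the reasons in favor."""
    if req.needs_deep_reasoning or req.open_ended_or_creative:
        return "consider a larger model"
    reasons_for_slm = [
        req.well_defined_task,
        req.needs_offline_or_edge,
        req.data_must_stay_local,
        req.needs_predictable_cost,
    ]
    if any(reasons_for_slm):
        return "start with a small model"
    return "either could work; prototype both"


ticket_classifier = Requirements(True, False, True, True, False, False)
print(suggest_model_size(ticket_classifier))
```

A support-ticket classifier with strict data-locality rules lands on the small-model side, while anything flagged as open-ended or reasoning-heavy is pointed toward a larger model.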

The Future of Small Language Models

Small Language Models (SLMs) are not a passing trend; they are already an integral part of how AI is deployed in the real world.

As organizations move from experimentation to production, what matters increasingly is not a model’s size but how efficiently it solves the problem. This shift highlights the practical benefits of SLMs.

1. Smaller Models, Smarter Deployment

Over the next few years, SLMs will be deployed widely, not as flashy standalone products but as task-specific intelligence quietly driving applications, services, and devices.

They will power mobile apps, wearables, and edge devices, offering fast and reliable performance without the heavy infrastructure that massive models need.

SLMs will also be used in enterprise systems to process sensitive data locally, keeping information confidential and secure. Products will increasingly rely not on a single large model but on many smaller models, each optimized for a particular task.

2. More Effective Training, Less Guesswork

Advances in model distillation, fine-tuning, and domain-specific training are making SLMs more capable than ever while keeping them compact.

This allows for higher accuracy on focused tasks, more predictable outputs, and fewer “hallucinations” in structured workflows.

Businesses can iterate more quickly and deploy models that closely fit their requirements without the compute and cost burden of large language models.
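As an illustration of what distillation optimizes, the sketch below computes a simplified distillation loss: the KL divergence between a teacher's and a student's temperature-softened output distributions. The logits are made-up numbers and the function names are assumptions; practical setups commonly also scale this term by the squared temperature and combine it with a standard loss on the true labels, both omitted here.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Made-up logits over three classes.
teacher = [4.0, 1.0, 0.2]
close_student = [3.5, 1.2, 0.1]
far_student = [0.1, 3.0, 2.0]

print("close student loss:", distillation_loss(teacher, close_student))
print("far student loss:  ", distillation_loss(teacher, far_student))
```

A student whose outputs resemble the teacher's gets a loss near zero, while one that disagrees gets a larger loss. Minimizing this quantity during training is what pushes the compact model toward the larger model's behavior.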

3. Privacy-First AI as a Standard

Privacy and compliance are becoming two of the main forces shaping AI development, and SLMs fit this pattern very well.

With on-device, on-premises, or controlled enterprise deployments, SLMs reduce data exposure and help companies meet regulatory requirements such as GDPR, HIPAA, or industry-specific rules.

Privacy-sensitive AI solutions will increasingly default to SLMs, reserving bigger models for the situations that actually demand them.

4. From “Nice to Have” to Default Choice

Over time, Small Language Models will probably become the default starting point for AI features.

Large models will remain important but will be reserved for cases that require broad reasoning, creativity, or exploration.

The emerging approach is straightforward: start small, scale in a smart and efficient way, and optimize for speed, cost, and control. This lets companies implement AI that is practical, efficient, and user-friendly rather than needlessly complicated.


Wrapping Up

When it comes to AI, bigger isn’t always better. Small language models, especially domain-specific ones, show just how powerful a focused, customized approach can be.

For companies building AI-driven solutions like conversational platforms or industry-specific workflows, these models aren’t just “nice to have.” They deliver better accuracy, more relevant insights, and smarter support for human expertise, things generic models often struggle to provide.

This is where Debut Infotech comes in. As one of the top AI development companies, we help businesses design, develop, and deploy small, efficient, domain-oriented language models that meet the requirements of their industry.

Ready to make AI work smarter for your business? Contact Debut Infotech today to explore how small language models can transform your workflows and amplify your team’s expertise.

Frequently Asked Questions (FAQs)

A. SLMs are small, specialized AI systems. They share the transformer architecture with large language models (LLMs) but contain significantly fewer parameters, typically between millions and a few billion.

SLMs are precise because they are task-oriented. They are trained on narrow, high-quality datasets rather than attempting to learn everything.

Methods such as knowledge distillation, pruning, and quantization make these models smaller and more efficient while largely preserving their performance on the tasks they are suited for.

A. Small Language Models (SLMs) are designed to be efficient and cost-effective. They handle specific tasks well and can run on less powerful hardware. SLMs are quick and lightweight, and they are the better choice when speed and resource efficiency are priorities.

Large Language Models (LLMs), by contrast, provide more general knowledge and more sophisticated reasoning. They are flexible and can perform many different functions. However, LLMs are compute-intensive and tend to require cloud infrastructure.
